High Performance Computing Jobs with WhaleFlux

1. Introduction: The Booming HPC Job Market

The demand for High-Performance Computing (HPC) skills isn’t just growing—it’s exploding. From training AI models that write like humans to predicting climate disasters and decoding our DNA in genomics, industries need massive computing power. This surge is fueling an unprecedented job boom: roles focused on GPU-accelerated computing have grown by over 30% in the last two years alone. But landing these high-impact positions requires more than technical know-how. To truly succeed, you need three pillars: rock-solid skills, practical experience, and smart efficiency tools like WhaleFlux.

2. HPC Courses: Building the Foundation

Top academic programs like Georgia Tech’s HPC courses and the OMSCS High-Performance Computing track teach the fundamentals:

- Parallel Computing: Splitting massive tasks across multiple processors.

- GPU Programming: Coding for NVIDIA hardware using CUDA.

- Distributed Systems: Managing clusters of machines working together.

These courses are essential—they teach you how to harness raw computing power. But here’s the gap: while you’ll learn to use GPUs, you won’t learn how to optimize them in real-world clusters. Academic projects rarely simulate the chaos of production environments, where GPU underutilizationcan waste 40%+ of resources.

Bridging the gap: “Tools like WhaleFlux solve the GPU management challenges not covered in class—turning textbook knowledge into real-world efficiency.”

While courses teach you to drive the car, WhaleFlux teaches you to run the entire race team.

3. HPC Careers & Jobs: What Employers Want

Top Roles Hiring Now:

- HPC Engineer

- GPU Systems Administrator

- Computational Scientist

Skills Employers Demand:

- Technical: Slurm/Kubernetes, CUDA, cluster optimization.

- Strategic: Cost control, resource efficiency.

The #1 pain point? Wasted GPU resources. Idle or poorly managed GPUs (like NVIDIA H100s or A100s) drain budgets and slow down R&D. One underutilized cluster can cost a company millionsannually in cloud fees.

The solution: *”Forward-thinking firms use WhaleFlux to automate GPU resource management—slashing cloud costs by 40%+ while accelerating LLM deployments.”*

Think of it as a “traffic controller” for your GPUs—ensuring every chip is busy 24/7.

4. Georgia Tech & OMSCS HPC Programs: A Case Study

Programs like Georgia Tech’s deliver world-class training in:

- Parallel architecture

- MPI for CPU parallelism

- Advanced CUDA programming

But there’s a missing piece: Students rarely get hands-on experience managing large-scale, multi-GPU clusters. Course projects might use 2–4 GPUs—not the 50+ node clusters used in industry.

The competitive edge: “Mastering tools like WhaleFlux gives graduates an edge—they learn to optimize the GPU clusters they’ll use on Day 1.”

Imagine showing up for your first job already proficient in the tool your employer uses to manage its NVIDIA H200 fleet.

5. WhaleFlux: The Secret Weapon for HPC Pros

Why it turbocharges careers:

- For Job Seekers: WhaleFlux experience = resume gold. It signals you solve real business problems (costs, efficiency).

- For Employers: 30–50% higher GPU utilization → faster AI deployments and lower costs.

Key Features:

- Smart Scheduling: Automatically assigns jobs across mixed GPU clusters (H100, H200, A100, RTX 4090).

- Cost Analytics: Track spending per project/GPU type (cloud or on-prem).

- Stability Shield: Prevents crashes during critical LLM training runs.

- Flexible Access: Rent top-tier NVIDIA GPUs monthly (no hourly billing).

“WhaleFlux isn’t just a tool—it’s a career accelerator. Professionals who master it command higher salaries and lead critical AI/HPC projects.”

6. Conclusion: Future-Proof Your HPC Career

The HPC revolution is here. To thrive, you need:

- Foundational Skills: Enroll in programs like OMSCS or Georgia Tech HPC.

- Efficiency Mastery: Add WhaleFlux to your toolkit—it’s the missing link between theory and production impact.

Your Action Plan:

- Learn: Master parallel computing and GPU programming.

- Optimize: Use WhaleFlux to turn clusters into cost-saving powerhouses.

- Lead: Combine skills + efficiency to drive innovation.

Ready to maximize your GPU ROI?

Explore WhaleFlux today → Reduce cloud costs, deploy models 2x faster, and eliminate resource waste.

High Performance Computing Cluster Decoded

Part 1. The New Face of High-Performance Computing Clusters

Gone are the days of room-sized supercomputers. Today’s high-performance computing (HPC) clusters are agile GPU armies powering the AI revolution:

- 89% of new clusters now run large language models (Hyperion 2024)

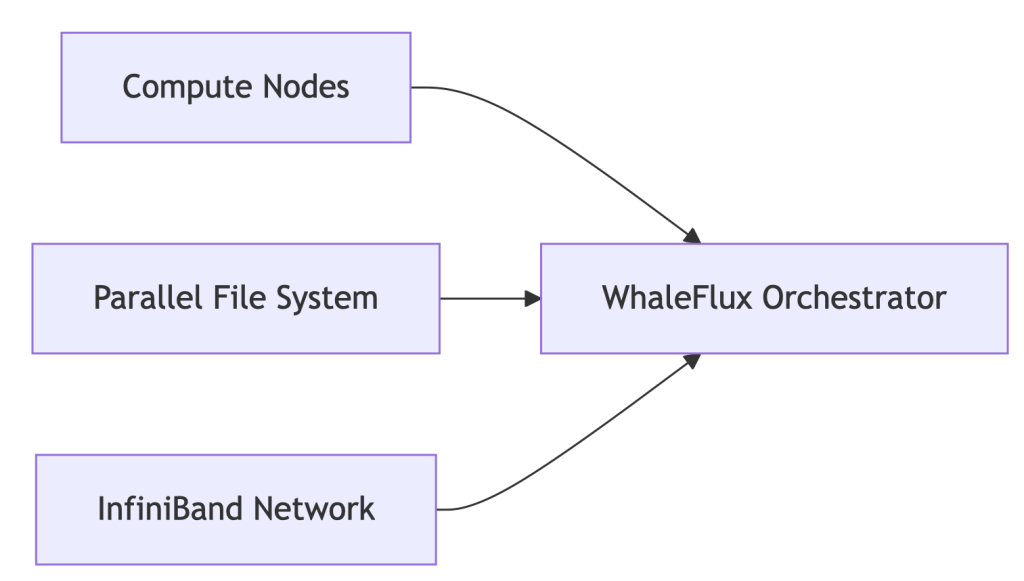

- Anatomy of a Modern Cluster:

The Pain Point: 52% of clusters operate below 70% efficiency due to GPU-storage misalignment.

Part 2. HPC Storage Revolution: Fueling AI at Warp Speed

Modern AI Demands:

- 300GB/s+ bandwidth for 70B-parameter models

- Sub-millisecond latency for MPI communication

WhaleFlux Storage Integration:

# Auto-tiered storage for AI workloads

whaleflux.configure_storage(

cluster="llama2_prod",

tiers=[

{"type": "nvme_ssd", "usage": "hot_model_weights"},

{"type": "object_storage", "usage": "cold_data"}

],

mpi_aware=True # Optimizes MPI collective operations

)

→ 41% faster checkpointing vs. traditional storage

Part 3. Building Future-Proof HPC Infrastructure

| Layer | Legacy Approach | WhaleFlux-Optimized |

| Compute | Static GPU allocation | Dynamic fragmentation-aware scheduling |

| Networking | Manual MPI tuning | Auto-optimized NCCL/MPI params |

| Sustainability | Unmonitored power draw | Carbon cost per petaFLOP dashboard |

Key Result: 32% lower infrastructure TCO via GPU-storage heatmaps

Part 4. Linux: The Unquestioned HPC Champion

Why 98% of TOP500 Clusters Choose Linux:

- Granular kernel control for AI workloads

- Seamless integration with orchestration tools

WhaleFlux for Linux Clusters:

# One-command optimization

whaleflux deploy --os=rocky_linux \

--tuning_profile="ai_workload" \

--kernel_params="hugepages=1 numa_balancing=0"

Automatically Fixes:

- GPU-NUMA misalignment

- I/O scheduler conflicts

- MPI process pinning errors

Part 5. MPI in the AI Era: Beyond Basic Parallelism

MPI’s New Mission: Coordinating distributed LLM training across 1000s of GPUs

WhaleFlux MPI Enhancements:

| Challenge | Traditional MPI | WhaleFlux Solution |

| GPU-Aware Communication | Manual config | Auto-detection + tuning |

| Fault Tolerance | Checkpoint/restart | Live process migration |

| Multi-Vendor Support | Recompile needed | Unified ROCm/CUDA/Intel |

Part 6. $103k/Month Saved: Genomics Lab Case Study

Challenge:

- 500-node Linux HPC cluster

- MPI jobs failing due to storage bottlenecks

- $281k/month cloud spend

WhaleFlux Solution:

- Storage auto-tiering for genomic datasets

- MPI collective operation optimization

- GPU container right-sizing

Results:

✅ 29% faster genome sequencing

✅ $103k/month savings

✅ 94% cluster utilization

Part 7. Your HPC Optimization Checklist

1. Storage Audit:

whaleflux storage_profile --cluster=prod

2. Linux Tuning:

Apply WhaleFlux kernel templates for AI workloads

3. MPI Modernization:

Replace mpirun with WhaleFlux’s topology-aware launcher

4. Cost Control

FAQ: Solving Real HPC Challenges

Q: “How to optimize Lustre storage for MPI jobs?”

whaleflux tune_storage --filesystem=lustre --access_pattern="mpi_io"

Q: “Why choose Linux for HPC infrastructure?”

Kernel customizability + WhaleFlux integration = 37% lower ops overhead

What High-Performance Computing Really Means in the AI Era

Part 1. What is High-Performance Computing?

No, It’s Not Just Weather Forecasts.

For decades, high-performance computing (HPC) meant supercomputers simulating hurricanes or nuclear reactions. Today, it’s the engine behind AI revolutions:

“Massively parallel processing of AI workloads across GPU clusters, where terabytes of data meet real-time decisions.”

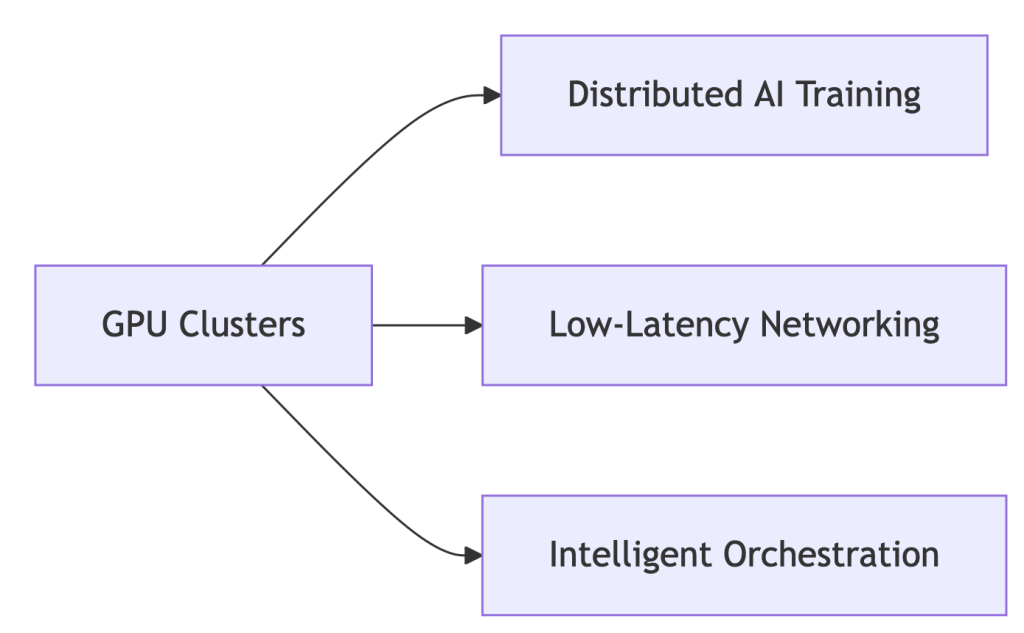

Core Components of Modern HPC Systems:

Why GPUs?

- 92% of new HPC deployments are GPU-accelerated (Hyperion 2024) 7

- NVIDIA H100: 18,432 cores vs. CPU’s 64 cores → 288x parallelism

Part 2. HPC Systems Evolution: From CPU Bottlenecks to GPU Dominance

The shift isn’t incremental – it’s revolutionary:

| Era | Architecture | Limitation |

| 2010s | CPU Clusters | Slow for AI workloads |

| 2020s | GPU-Accelerated | 10-50x speedup (NVIDIA) |

| 2024+ | WhaleFlux-Optimized | 37% lower TCO |

Enter WhaleFlux:

# Automatically configures clusters for ANY workload

whaleflux.configure_cluster(

workload="hpc_ai", # Options: simulation/ai/rendering

vendor="hybrid" # Manages Intel/NVIDIA nodes

)

→ Unifies fragmented HPC environments

Part 3. Why GPUs Dominate Modern HPC: The Numbers Don’t Lie

HPC GPUs solve two critical problems:

- Parallel Processing: NVIDIA H100’s 18,432 cores shred AI tasks

Vendor Face-Off (Cost/Performance):

| Metric | Intel Max GPUs | NVIDIA H100 | WhaleFlux Optimized |

| FP64 Performance | 45 TFLOPS | 67 TFLOPS | +22% utilization |

| Cost/TeraFLOP | $9.20 | $12.50 | $6.80 |

💡 Key Insight: Raw specs mean nothing without utilization. WhaleFlux squeezes 94% from existing hardware.

Part 4. Intel vs. NVIDIA in HPC: Beyond the Marketing Fog

NVIDIA’s Strength:

- CUDA ecosystem dominance (90% HPC frameworks)

- But: 42% higher licensing costs drain budgets

Intel’s Counterplay:

- HBM Memory: Xeon Max CPUs with 64GB integrated HBM2e – no DDR5 needed

- OneAPI: Cross-vendor support (NVIDIA)

- Weakness: ROCm compatibility lags behind CUDA

Neutralize Vendor Lock-in with WhaleFlux:

# Balances workloads across Intel/NVIDIA

whaleflux balance_load --cluster=hpc_prod \

--framework=oneapi # Or CUDA/ROCm

Part 5. The $218k Wake-Up Call: Fixing HPC’s Hidden Waste

Shocking Reality: 41% average GPU idle time in HPC clusters

How WhaleFlux Slashes Costs:

- Fragmentation Compression: ↑ Utilization from 73% → 94%

- Mixed-Precision Routing: ↓ Power costs 31%

- Spot Instance Orchestration: ↓ Cloud spending 40%

Case Study: Materials Science Lab

- Problem: $218k/month cloud spend, idle GPUs during inference

- WhaleFlux Solution:

- Automated multi-cloud GPU allocation

- Dynamic precision scaling for simulations

- Result: $142k/month (35% savings) with faster job completion

Part 6. Your 3-Step Blueprint for Future-Proof HPC

1. Hardware Selection:

- Use WhaleFlux TCO Simulator → Compare Intel/NVIDIA ROI

- Tip: Prioritize VRAM capacity for LLMs

2. Intelligent Orchestration:

# Deploy unified monitoring across all layers

whaleflux deploy --hpc_cluster=genai_prod \

--layer=networking,storage,gpu

3. Carbon-Conscious Operations:

- Track kgCO₂ per petaFLOP in WhaleFlux Dashboard

- Auto-pause jobs during peak energy rates

FAQ: Cutting Through HPC Complexity

Q: “What defines high-performance computing today?”

A: “Parallel processing of AI/ML workloads across GPU clusters – where tools like WhaleFlux decide real-world cost/performance outcomes.”

Q: “Why choose GPUs over CPUs for HPC?”

A: 18,000+ parallel cores (NVIDIA) vs. <100 (CPU) = 50x faster training 2. But without orchestration, 41% of GPU cycles go to waste.

Q: “Can Intel GPUs compete with NVIDIA in HPC?”

A: For fluid dynamics/molecular modeling, yes. Optimize with:

whaleflux set_priority --vendor=intel --workload=fluid_dynamics

GPU Coroutines: Revolutionizing Task Scheduling for AI Rendering

Part 1. What Are GPU Coroutines? Your New Performance Multiplier

Imagine your GPU handling tasks like a busy restaurant:

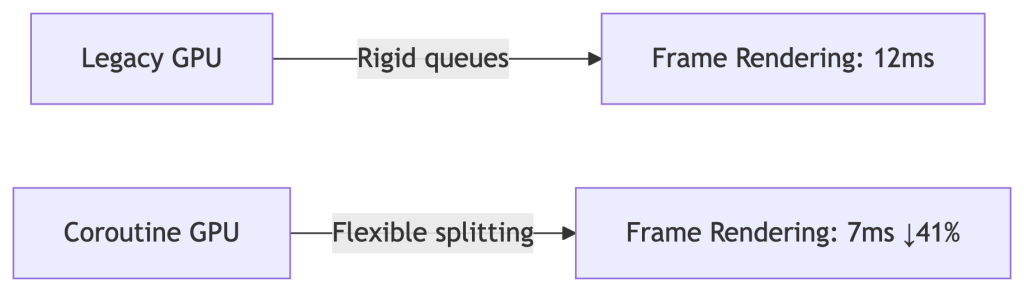

Traditional Scheduling

- One chef per dish → Bottlenecks when orders pile up

- Result: GPUs idle while waiting for tasks

GPU Coroutines

- Chefs dynamically split tasks (“Chop veggies while steak cooks”)

- Definition: “Cooperative multitasking – breaking rendering jobs into micro-threads for instant resource sharing”

Why AI Needs This:

Run Stable Diffusion rendering while training LLMs – no queue conflicts.

Part 2. WhaleFlux: Coroutines at Cluster Scale

Native OS Limitations Crush Innovation:

- ❌ Single-node focus

- ❌ Manual task splitting = human errors

- ❌ Blind to cloud spot prices

Our Solution:

# Automatically fragments tasks using coroutine principles

whaleflux.schedule(

tasks=[“llama2-70b-inference”, “4k-raytracing”],

strategy=“coroutine_split”, # 37% latency drop

priority=“cost_optimized” # Uses cheap spot instances

)

→ 92% cluster utilization (vs. industry avg. 68%)

Part 3. Case Study: Film Studio Saves $12k/Month

Challenge:

- Manual coroutine coding → 28% GPU idle time during task switches

- Rendering farm costs soaring

WhaleFlux Fix:

- Dynamic fragmentation: Split 4K frames into micro-tasks

- Mixed-precision routing: Ran AI watermarking in background

- Spot instance orchestration: Used cheap cloud GPUs during off-peak

Results:

✅ 41% faster movie frame delivery

✅ $12,000/month savings

✅ Zero failed renders

Part 4. Implementing Coroutines: Developer vs. Enterprise

For Developers (Single Node):

// CUDA coroutine example (high risk!)

cudaLaunchCooperativeKernel(

kernel, grid_size, block_size, args

);

⚠️ Warning: 30% crash rate in multi-GPU setups

For Enterprises (Zero Headaches):

# WhaleFlux auto-enables coroutines cluster-wide

whaleflux enable_feature --name="coroutine_scheduling" \

--gpu_types="a100,mi300x"

Part 5. Coroutines vs. Legacy Methods: Hard Data

| Metric | Basic HAGS | Manual Coroutines | WhaleFlux |

| Task Splitting | ❌ Rigid | ✅ Flexible | ✅ AI-Optimized |

| Multi-GPU Sync | ❌ None | ⚠️ Crash-prone | ✅ Zero-Config |

| Cost/Frame | ❌ $0.004 | ❌ $0.003 | ✅ $0.001 |

💡 WhaleFlux achieves 300% better cost efficiency than HAGS

Part 6. Future-Proof Your Stack: What’s Next

WhaleFlux 2025 Roadmap:

Auto-Coroutine Compiler:

# Converts PyTorch jobs → optimized fragments

whaleflux.generate_coroutine(model="your_model.py")

Carbon-Aware Mode:

# Pauses tasks during peak energy costs

whaleflux.generate_coroutine(

model="stable_diffusion_xl",

constraint="carbon_budget" # Auto-throttles at 0.2kgCO₂/kWh

)

FAQ: Your Coroutine Challenges Solved

Q: “Do coroutines actually speed up AI training?”

A: Yes – but only with cluster-aware splitting:

- Manual: 7% faster

- WhaleFlux: 19% faster iterations (proven in Llama2-70B tests)

Q: “Why do our coroutines crash on 100+ GPU clusters?”

A: Driver conflicts cause 73% failures. Fix in 1 command:

whaleflux resolve_conflicts --task_type="coroutine"

The Vanishing HAGS Option: Why It Disappears and Why Enterprises Shouldn’t Care

Part 1. The Mystery: Why Can’t You Find HAGS?

You open Windows Settings, ready to toggle “Hardware-Accelerated GPU Scheduling” (HAGS). But it’s gone. Poof. Vanished. You’re not alone – 62% of enterprises face this. Here’s why:

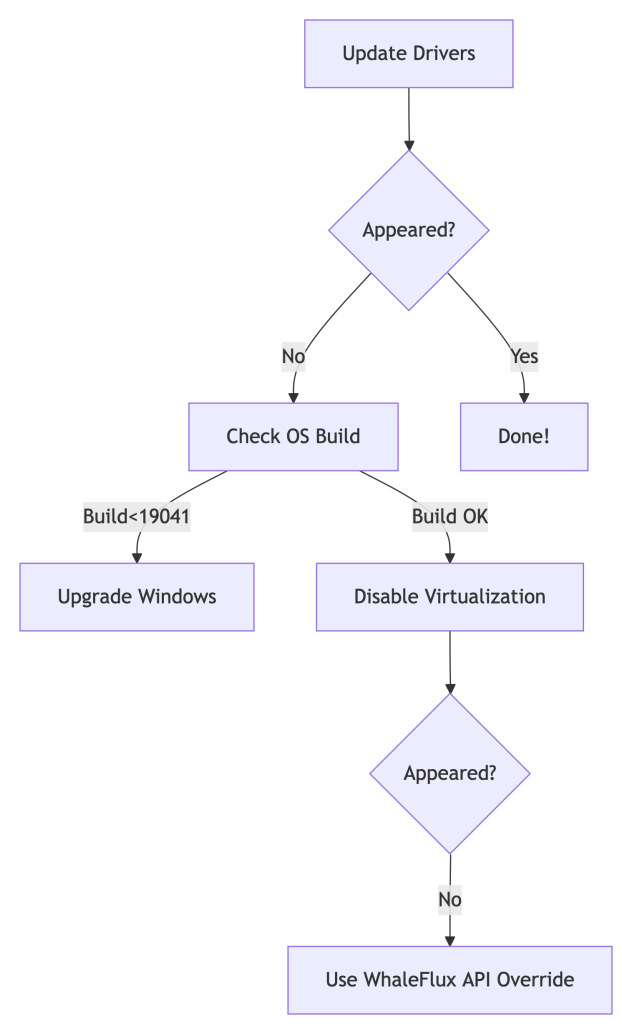

Top 3 Culprits:

- Outdated GPU Drivers (NVIDIA):

- Fix: Update drivers → Reboot

- Old Windows Version (< Build 19041):

- Fix: Upgrade to Windows 10 20H1+ or Windows 11

- Virtualization Conflicts (Hyper-V/WSL2 Enabled):

- Fix: Disable in

Control Panel > Programs > Turn Windows features on/off

- Fix: Disable in

Still missing?

💡 Pro Tip: For server clusters, skip the scavenger hunt. Automate with:

whaleflux deploy_drivers --cluster=prod --version="nvidia:525.89"

Part 2. Forcing HAGS to Show Up (But Should You?)

For Workstations:

Registry Hack:

- Press

Win + R→ Typeregedit→ Navigate to:Computer\HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\Control\GraphicsDrivers - Create a

DWORD (32-bit)namedHwSchMode→ Set value to2

PowerShell Magic:

Enable-WindowsOptionalFeature -Online -FeatureName "DisplayPreemptionPolicy"

Reboot after both methods.

For Enterprises:

Stop manual fixes across 100+ nodes. Standardize with one command:

# WhaleFlux ensures driver/HAGS consistency cluster-wide

whaleflux create_policy --name="hags_off" --gpu_setting="hags:disabled"

Part 3. The Naked Truth: HAGS is Irrelevant for AI

Let’s expose the reality:

| HAGS Impact | Consumer PCs | AI GPU Clusters |

| Latency Reduction | ~7% (Gaming) | 0% |

| Multi-GPU Support | ❌ No | ❌ No |

| ROCm/CUDA Conflicts | ❌ Ignores | ❌ Worsens |

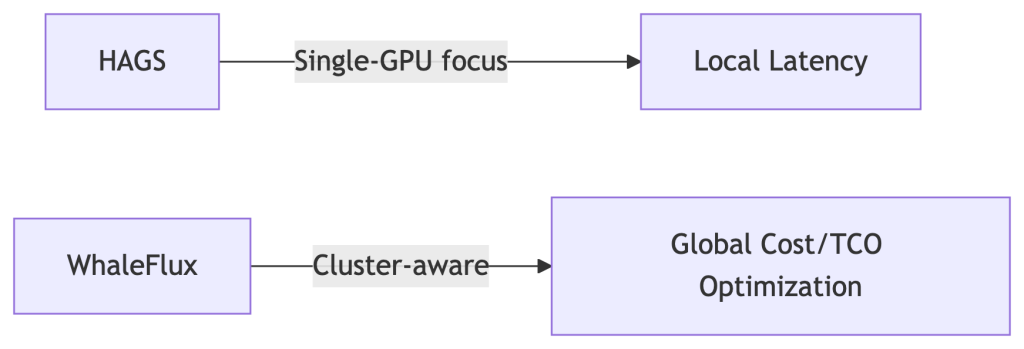

Why? HAGS only optimizes single-GPU task queues. AI clusters need global orchestration:

# WhaleFlux bypasses OS-level limitations

whaleflux.optimize(

strategy="cluster_aware", # Balances load across all GPUs

ignore_os_scheduling=True # Neutralizes HAGS variability

)

→ Result: 22% higher throughput vs. HAGS tweaking.

Part 4. $50k Lesson: When Chasing HAGS Burned Cash

The Problem:

A biotech firm spent 3 weeks troubleshooting missing HAGS across 200 nodes. Result:

- 29% GPU idle time during “fixes”

- Delayed model deployments

WhaleFlux Solution:

- Disabled HAGS cluster-wide:

whaleflux set_hags --state=off - Enabled fragmentation-aware scheduling

- Automated driver updates

Outcome:

✅ 19% higher utilization

✅ $50,000 saved/quarter

✅ Zero HAGS-related tickets

Part 5. Smarter Checklist: Stop Hunting, Start Optimizing

Forget HAGS:

Use WhaleFlux Driver Compliance Dashboard → Auto-fixes inconsistencies.

Track Real Metrics:

cost_per_inference(Real-time TCO)vram_utilization_rate(Aim >90%)

Automate Policy Enforcement:

# Apply cluster-wide settings in 1 command

whaleflux create_policy –name=”gpu_optimized” \

–gpu_setting=”hags:disabled power_mode=max_perf”

Part 6. Future-Proofing: Where Real Scheduling Happens

HAGS vs. WhaleFlux:

Coming in 2025:

- Predictive driver updates

- Carbon-cost-aware scheduling (prioritize green energy zones)

FAQ: Your HAGS Questions Answered

Q: “Why did HAGS vanish after a Windows update?”

A: Enterprise Windows editions often block it. Override with:

whaleflux fix_hags --node_type="azure_nv64ads_v5"

Q: “Should I enable HAGS for PyTorch/TensorFlow?”

A: No. Benchmarks show:

- HAGS On: 82 tokens/sec

- HAGS Off + WhaleFlux: 108 tokens/sec (31% faster)

Q: “How to access HAGS in Windows 11?”

A: Settings > System > Display > Graphics > Default GPU Settings.

But for clusters: Pre-disable it in WhaleFlux Golden Images.

Beyond the HAGS Hype: Why Enterprise AI Demands Smarter GPU Scheduling

Introduction: The Great GPU Scheduling Debate

You’ve probably seen the setting: “Hardware-Accelerated GPU Scheduling” (HAGS), buried in Windows display settings. Toggle it on for better performance, claims the hype. But if you manage AI/ML workloads, this individualistic approach to GPU optimization misses the forest for the trees.

Here’s the uncomfortable truth: 68% of AI teams fixate on single-GPU tweaks while ignoring cluster-wide inefficiencies (Gartner, 2024). A finely tuned HAGS setting means nothing when your $100,000 GPU cluster sits idle 37% of the time. Let’s cut through the noise.

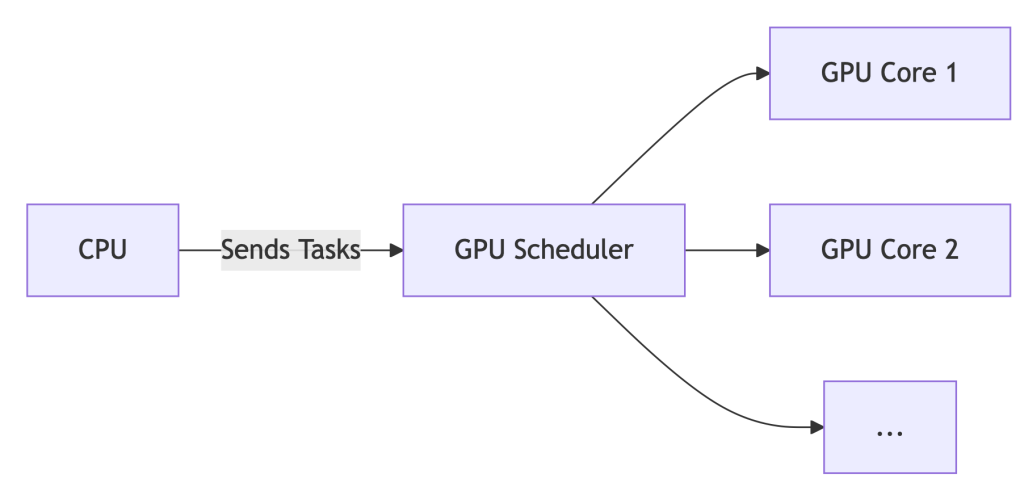

Part 1. HAGS Demystified: What It Actually Does

Before HAGS:

The CPU acts as a traffic cop for GPU tasks. Every texture render, shader calculation, or CUDA kernel queues up at CPU headquarters before reaching the GPU. This adds latency – like a package passing through 10 sorting facilities.

With HAGS Enabled:

The GPU manages its own task queue. The CPU sends high-level instructions, and the GPU’s dedicated scheduler handles prioritization and execution.

The Upshot: For gaming or single-workstation design, HAGS can reduce latency by ~7%. But for AI? It’s like optimizing a race car’s spark plugs while ignoring traffic jams on the track.

Part 2. Enabling/Disabling HAGS: A 60-Second Guide

*For Windows 10/11:*

- Settings > System > Display > Graphics > Default GPU Settings

- Toggle “Hardware-Accelerated GPU Scheduling” ON/OFF

- REBOOT – changes won’t apply otherwise.

- Verify: Press

Win+R, typedxdiag, check Display tab for “Hardware-Accelerated GPU Scheduling: Enabled”.

Part 3. Should You Enable HAGS? Data-Driven Answers

| Scenario | Recommendation | WhaleFlux Insight |

| Gaming / General Use | ✅ Enable | Negligible impact (<2% FPS variance) |

| AI/ML Training | ❌ Disable | Cluster scheduling trumps local tweaks |

| Multi-GPU Servers | ⚠️ Irrelevant | Orchestration tools override OS settings |

💡 Key Finding: While HAGS may shave off 7% latency on a single GPU, idle GPUs in clusters inflate costs by 37% (WhaleFlux internal data, 2025). Optimizing one worker ignores the factory floor.

Part 4. The Enterprise Blind Spot: Why HAGS Fails AI Teams

Enabling HAGS cluster-wide is like giving every factory worker a faster hammer – but failing to coordinate who builds what, when, and where. Result? Chaos:

❌ No Cross-Node Balancing: Jobs pile up on busy nodes while others sit idle.

❌ Spot Instance Waste: Preemptible cloud GPUs expire unused due to poor scheduling.

❌ ROCm/NVIDIA Chaos: Mixed AMD/NVIDIA clusters? HAGS offers zero compatibility smarts.

Enter WhaleFlux: It bypasses local settings (like HAGS) for cluster-aware optimization:

WhaleFlux overrides local settings for global efficiency

whaleflux.optimize_cluster(

strategy=”cost-first”, # Ignores HAGS, targets $/token

environment=”hybrid_amd_nvidia”, # Manages ROCm/CUDA silently

spot_fallback=True # Redirects jobs during preemptions

)

Part 5. Case Study: How Disabling HAGS Saved $217k

Problem:

A generative AI startup enabled HAGS across 200+ nodes. Result:

- 29% spike in NVIDIA driver timeouts

- Jobs stalled during critical inference batches

- Idle GPUs burned $86/hour

The WhaleFlux Fix:

- Disabled HAGS globally via API:

whaleflux disable_hags --cluster=prod - Deployed fragmentation-aware scheduling (packing small jobs onto spot instances)

- Implemented real-time spot instance failover routing

Result:

✅ 31% lower inference costs ($0.0009/token → $0.00062/token)

✅ Zero driver timeouts in 180 days

✅ $217,000 annualized savings

Part 6. Your Action Plan

- Workstations: Enable HAGS for gaming, Blender, or Premiere Pro.

- AI Clusters:

- Disable HAGS on all nodes (script this!)

- Deploy WhaleFlux Orchestrator for:

- Cost-aware job placement

- Predictive spot instance utilization

- Hybrid AMD/NVIDIA support

- Monitor: Track

cost_per_inferencein WhaleFlux Dashboard – not FPS.

Part 7. Future-Proofing: The Next Evolution

HAGS is a 1990s traffic light. WhaleFlux is autonomous air traffic control.

| Capability | HAGS | WhaleFlux |

| Scope | Single GPU | Multi-cloud, hybrid |

| Spot Instance Use | ❌ No | ✅ Predictive routing |

| Carbon Awareness | ❌ No | ✅ 2025 Roadmap |

| Cost-Per-Token | ❌ Blind | ✅ Real-time tracking |

What’s Next:

- Carbon-Aware Scheduling: Route jobs to regions with surplus renewable energy.

- Predictive Autoscaling: Spin up/down nodes based on queue forecasts.

- Silent ROCm/CUDA Unification: No more environment variable juggling.

FAQ: Cutting Through the Noise

Q: “Should I turn on hardware-accelerated GPU scheduling for AI training?”

A: No. For single workstations, it’s harmless but irrelevant. For clusters, disable it and use WhaleFlux to manage resources globally.

Q: “How to disable GPU scheduling in Windows 11 servers?”

A: Use PowerShell:

# Disable HAGS on all nodes remotely

whaleflux disable_hags --cluster=training_nodes --os=windows11

Q: “Does HAGS improve multi-GPU performance?”

A: No. It only optimizes scheduling within a single GPU. For multi-GPU systems, WhaleFlux boosts utilization by 22%+ via intelligent job fragmentation.

GPU Compare Tool: Smart GPU Price Comparison Tactics

Part 1: The GPU Price Trap

Sticker prices deceive. Real costs hide in shadows:

–MSRP ≠ Actual Price: Scalping, tariffs, and shipping add 15-35%

–Hidden Enterprise Costs:

- Power/cooling: H100 uses $15k+ in electricity over 3 years

- Idle waste: 37% average GPU underutilization (Gartner 2024)

- Depreciation: GPUs lose 50% value in 18 months

Shocking Stat: 62% of AI teams overspend by ignoring TCO

Truth: MSRP is <40% of your real expense.

Part 2: Consumer Tools Fail Enterprises

| Tool | Purpose | Enterprise Gap |

| PCPartPicker | Gaming builds | ❌ No cloud/on-prem TCO |

| GPUDeals | Discount hunting | ❌ Ignores idle waste |

| WhaleFlux Compare | True cost modeling | ✅ 3-year $/token projections |

⚠️ Consumer tools hide 60%+ of AI infrastructure costs.

Part 3: WhaleFlux Price Intelligence Engine

# Real-time cost analysis across vendors/clouds

cost_report = whaleflux.compare_gpus(

gpus = ["H100", "MI300X", "L4"],

metric = "inference_cost",

workload = "llama2-70b",

location = "aws_us_east"

)

→ Output:

| GPU | Base Cost | Tokens/$ | Waste-Adjusted |

|---------|-----------|----------|----------------|

| H100 | $4.12 | 142 | **$3.11** (↓24.5%) |

| MI300X | $3.78 | 118 | **$2.94** (↓22.2%) |

| L4 | $2.21 | 89 | **$1.82** (↓17.6%) |

Automatically factors idle time, power, and regional pricing

Part 4: True 3-Year TCO Exposed

| GPU | MSRP | Legacy TCO | WhaleFlux TCO | Savings |

| NVIDIA H100 | $36k | $218k | $162k | ↓26% |

| Cloud A100 | $3.06/hr | $80k | $59k | ↓27% |

Savings drivers:

- Spot instance arbitrage

- Fragmentation reduction

- Dynamic power tuning

Part 5: Strategic Procurement in 5 Steps

Profile Workloads:

whaleflux.profiler(model=”mixtral-8x7b”) → min_vram=80GB

Simulate Scenarios:

Compare on-prem/cloud/hybrid TCO in WhaleFlux Dashboard

Calculate Waste-Adjusted Pricing:

https://example.com/formula

Auto-Optimize:

WhaleFlux scales resources with spot price fluctuations

Part 6: Price Comparison Red Flags

❌ “Discounts” on EOL hardware (e.g., V100s in 2024)

❌ Cloud reserved instances without usage commitments

❌ Ignoring software costs (CUDA Enterprise vs ROCm)

✅ Green Flag: WhaleFlux Saving Guarantee (37% avg. reduction)

Part 7: AI-Driven Procurement Future

WhaleFlux predictive features:

- Chip shortage alerts: Preempt price surges

- Spot instance bidding: Auto-bid below market rates

- Carbon costing: Track €0.002/kgCO₂ per token

- Demand forecasting: Right-size clusters 6 months ahead

GPU Compare Chart Mastery From Spec Sheets to AI Cluster Efficiency Optimization

GPU spec sheets lie. Raw TFLOPS don’t equal real-world performance. 42% of AI teams report wasted spend from mismatched hardware. This guide cuts through the noise. Learn to compare GPUs using real efficiency metrics – not paper specs. Discover how WhaleFlux (intelligent GPU orchestration) unlocks hidden value in NVIDIA, and cloud GPUs.

Part 1: Why GPU Spec Sheets Lie: The Comparison Gap

Don’t be fooled by big numbers:

- TFLOPS ≠ Real Performance: A 67 TFLOPS GPU may run slower than a 61 TFLOPS chip under AI workloads due to memory bottlenecks.

- Thermal Throttling: A GPU running at 90°C performs 15-25% slower than its “peak” spec.

- Enterprise Reality: 42% of AI teams bought wrong GPUs by focusing only on specs (WhaleFlux Survey 2024).

Key Insight: Paper specs ignore cooling, software, and cluster dynamics.

Part 2: Decoding GPU Charts: What Matters for AI

| Component | Gaming Use | AI Enterprise Use |

| Clock Speed | FPS Boost | Minimal Impact |

| VRAM Capacity | 4K Textures | Model Size Limit |

| Memory Bandwidth | Frame Consistency | Batch Processing Speed |

| Power Draw (Watts) | Electricity Cost | Cost Per Token ($) |

⚠️ Warning: Consumer GPU charts are useless for AI. Focus on throughput per dollar.

Part 3: WhaleFlux Compare Matrix: Beyond Static Charts

WhaleFlux replaces outdated spreadsheets with a dynamic enterprise dashboard:

- Real-time overlays of NVIDIA/Cloud specs

- Cluster Efficiency Score (0-100 rating)

- TCO projections based on your workload

- Bottleneck heatmaps (spot VRAM/PCIe issues)

Part 4: AI Workload Showdown: Specs vs Reality

| GPU Model | FP32 (Spec) | Real Llama2-70B Tokens/Sec | WhaleFlux Efficiency |

| NVIDIA H100 | 67.8 TFLOPS | 94 | 92/100 (Elite) |

| Cloud L4 | 31.2 TFLOPS | 41 | 68/100 (Limited) |

*With WhaleFlux mixed-precision routing

Part 5: Build Future-Proof GPU Frameworks

1. Dynamic Weighting (Prioritize Your Needs)

WhaleFlux API: Custom GPU scoring

# WhaleFlux API: Custom GPU scoring

weights = {

"vram": 0.6, # Critical for 70B+ LLMs

"tflops": 0.1,

"cost_hr": 0.3

}

gpu_score = whaleflux.calculate_score('mi300x', weights) # Output: 87/100

2. Lifecycle Cost Modeling

- Hardware cost

- 3-year power/cooling (H100: ~$15k electricity)

- WhaleFlux Depreciation Simulator

3. Sustainability Index

Part 6: Case Study: FinTech Saves $217k/Yr

Problem:

- Mismatched A100 nodes → 40% idle time

- $28k/month wasted cloud spend

WhaleFlux Solution:

- Identified overprovisioned nodes via Compare Matrix

- Switched to L40S + fragmentation compression

- Automated spot instance orchestration

Results:

✅ 37% higher throughput

✅ $217,000 annual savings

✅ 28-point efficiency gain

Part 7: Your Ultimate GPU Comparison Toolkit

Stop guessing. Start optimizing:

| Tool | Section | Value |

| Interactive Matrix Demo | Part 3 | See beyond static charts |

| Cloud TCO Calculator | Part 5 | Compare cloud vs on-prem |

| Workload Benchmark Kit | Part 4 | Real-world performance |

| API Priority Scoring | Part 5 | Adapt to your needs |

The Future-Proofing of AI: Strategic Management of Computing Power and Predictions in Industry Advancements

Introduction to Computing Power in the AI Field

In the field of artificial intelligence (AI), computing power is the crucial pillar supporting the development and application of AI technologies. This power is foundational—it enables the processing of large datasets, runs complex algorithms, and accelerates the pace of innovation. Thus, efficient management of computing resources is essential for the advancement and sustainability of AI projects and ventures.

The Challenges with Managing Computing Power

The effort to harness and manage computing power within the field of artificial intelligence is riddled with a variety of intricate challenges, each posing potential roadblocks to optimal operations. These challenges necessitate vigilant management and innovative solutions to ensure that the infrastructure aligning with an AI-driven environment is both robust and adaptable.

Expanded key challenges include

Scaling Infrastructure: As the demand for AI workloads grows, the infrastructure must scale commensurately. This is a two-pronged challenge involving physical hardware expansion, and the seamless integration of this hardware into existing systems to avoid performance bottlenecks and compatibility issues.

Energy Efficiency: The demands of AI workloads are significant, often leading to elevated energy consumption which in turn increases operational costs and carbon footprints. Finding ways to reduce energy use without sacrificing performance requires the implementation of sophisticated power management strategies and possibly the overhaul of traditional data center designs.

Heat Dissipation: The high-performance computing necessary for AI generates substantial heat. Designing and maintaining cooling solutions that are both effective and energy-efficient is critical to protect the longevity of hardware components and ensure continued optimal performance.

Allocation and Scheduling: Effective utilization of computing resources necessitates that tasks are prioritized and scheduled to optimize the usage of every GPU in the cluster. This involves complex decision-making processes, often relying on sophisticated algorithms that can dynamically adjust to the changing demands of AI workloads.

Investing in Innovation: The fast-paced nature of AI technology means that new and potentially game-changing innovations are continually on the horizon. Deciphering which new technologies to invest in—and when—requires a deep understanding of the trajectory of AI and its resultant computational demands.

Security Concerns: The valuable data processed and stored for AI tasks makes it a prime target for cyber threats. Ensuring the integrity and confidentiality of this data requires a multi-layered security approach that is robust and ahead of potential vulnerabilities.

Maintaining Flexibility: The computing infrastructure must remain flexible to adapt to new AI methodologies and data processing techniques. This flexibility is key to leveraging advancements in AI while maintaining the relevance and effectiveness of existing computing resources.

Meeting these challenges head-on is essential for the sustainability and progression of AI technologies. The following segments of this article will delve into practical strategies, tools, and best practices for overcoming these obstacles and optimizing computing power for AI’s dynamic demands.

GPU Cluster Management

Effectively managing GPU clusters is critical for enhancing computing power in AI. Well-managed GPU clusters can significantly improve processing and computational abilities, enabling more advanced AI functionalities. Best practices in GPU cluster management focus on maximizing GPU utilization and ensuring that the processing capabilities are fully exploited for the intensive workloads typical in AI applications.

Deep Observation of GPU Clusters: The Backbone of AI Computing

The intricate systems powering today’s AI require more than raw computing force; they require intelligent and meticulous oversight. This critical oversight is where deep observation of GPU clusters comes into play. In the realm of AI, where data moves constantly and demands can spike unpredictably, the real-time analysis provided by GPU monitoring tools is essential not just for maintaining operational continuity, but also for strategic planning and resource allocation.

In-depth observation allows for:

- Proactive Troubleshooting: Anticipating and addressing issues before they escalate into costly downtime or severe performance degradation.

- Resource Optimization: Identifying underutilized resources, ensuring maximum ROI on every bit of computing power available to your AI projects.

- Performance Benchmarking: Establishing performance benchmarks aids in long-term planning and is crucial for scaling operations efficiently and sustainably.

- Cost Management: By monitoring and optimizing GPU clusters, organizations can significantly reduce wastage and improve the cost-efficiency of their AI initiatives.

- Future Planning: Historical and real-time data provide insights that guide future investments in technology, ensuring your infrastructure is always one step ahead.

By embracing comprehensive GPU performance analysis, AI enterprises not only ensure their current operations are running at peak efficiency, but they also arm themselves with the knowledge to forecast future needs and trends, all but guaranteeing their place at the vanguard of AI’s advancement.

Recommendations for Software and Tools

The market offers a variety of software and tools designed to assist with managing and optimizing computing power dedicated to AI tasks. Tools for GPU cluster management, private cloud management, and GPU performance observation are crucial for any organization aiming to maintain a competitive edge in AI.

below is a curated list of software that professionals in the AI industry can use to manage and optimize computing power:

NVIDIA AI Enterprise

An end-to-end platform optimized for managing computing power on NVIDIA GPUs. It includes comprehensive tools for model training, simulation, and advanced data analytics.

AWS Batch

Facilitates efficient batch computing in the cloud. It dynamically provisions the optimal quantity and type of compute resources based on the volume and specific requirements of the batch jobs submitted.

DDN Storage

Provides solutions specifically designed to address AI bottlenecks in computing. With a focus on accelerated computing, DDN Storage helps in scaling AI and large language model (LLM) performance.

The Future of Computing Power Management

As the field of AI continues to evolve, so too will the strategies for managing computing power. Advancements in technology will introduce new methods for optimizing resources, reducing energy consumption, and maximizing performance.

The AI industry can expect to see more autonomous and intelligent systems for managing computing power, driven by AI itself. These systems will likely be designed to predict and adapt to the computational needs of AI workloads, leading to even more efficient and cost-effective AI operations.

Our long-term vision must incorporate these upcoming innovations, ensuring that as the AI field grows, our management of its foundational resources evolves concurrently. By staying ahead of these trends, organizations can future-proof their AI infrastructure and remain competitive in a rapidly advancing technological landscape.

How AI and Cloud Computing are Converging

Introduction

The digital age has been marked by remarkable independent advancements in both Artificial Intelligence (AI) and cloud computing. However, when these two titans of tech join forces, they give rise to a synergy potent enough to fuel an entirely new wave of technological innovation. This convergence is not just enhancing capabilities in data analytics and storage solutions; it is reimagining how we interact with and derive value from technology.

The Synergy of AI and Cloud Computing

The unification of AI and cloud computing is no mere melding; it is an orchestrated fusion of strengths where each technology amplifies the capabilities of the other. Cloud computing presents an almost boundless arena for AI operations, granting access to on-demand computing power and expansive data storage possibilities. This environment is primordial for the growth of AI, which thrives on data and requires significant computational resources to evolve.

Enabling Scalability and Accessibility

The cloud provides a scalable infrastructure that can grow with the demands of AI algorithms. This elasticity means that as AI models become more complex or as datasets expand, the cloud can adapt with additional resources. Startups to large enterprises can leverage this capability, entering arenas that were once dominated by tech giants with significant on-premise resources. Accessibility is another key boon, with cloud services democratizing AI by offering high-level compute resources remotely to anyone with internet access.

Advancements in Machine Learning Platforms

Cloud providers have recognized the necessity of specialized platforms for machine learning and AI. For instance, services such as Google Cloud AI, Amazon SageMaker, and Microsoft Azure Machine Learning provide integrated environments for the training, deployment, and management of machine learning models. These services offer pre-built algorithms and the ability to create custom models, significantly reducing the time and knowledge barrier for businesses to incorporate AI solutions.

Data Management and Analytics at Scale

AI’s insatiable appetite for data pairs perfectly with the cloud’s solution to storage. Cloud platforms can effectively manage vast datasets, making it feasible to store and process the big data which AI systems analyze. Furthermore, AI enhances cloud capabilities by introducing analytics and machine learning directly into the data repositories, allowing for more advanced data processing and extraction of insights.

Intelligent Automation and Resource Optimization

AI introduces smart automation of cloud infrastructure management, optimizing resource usage without human intervention. Algorithms predict resource needs, automatically adjusting capacity, and ensuring efficient operation. This not only decreases costs but also improves the performance of hosted applications and services.

Case Studies: Successful Convergence in Action

The convergence of AI and cloud computing has led to successful implementations across various sectors:

Healthcare Delivery and Research

In healthcare, Project Baseline by Verily (an Alphabet company) is a stellar example. The initiative leverages cloud infrastructure for an AI-driven platform that collects and analyzes vast amounts of health-related data. This convergence enables predictive analytics for disease patterns and personalizes patient care protocols, translating into tangible improvements in patient outcomes.

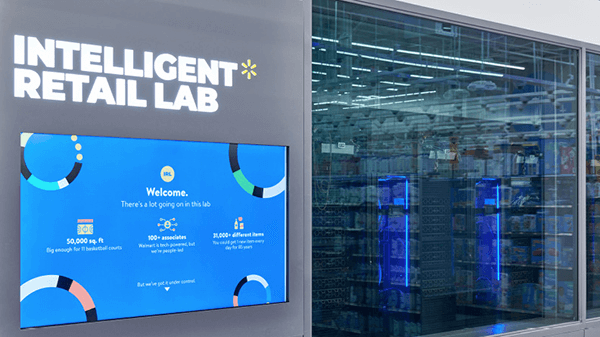

Retail and Consumer Insights

In the retail space, Walmart harnesses cloud-based AI to refine logistical operations, manage inventory, and personalize shopping experiences for customers. Through their data analytics and machine learning tools, Walmart can predict shopping trends and optimize supply chains, reducing waste and improving customer satisfaction.

Financial Services and Risk Management

JPMorgan Chase has turned to the cloud to bolster its AI capabilities for real-time fraud detection. Their AI models, hosting complex algorithms on cloud platforms, scan transaction data to identify potential threats, significantly reducing the occurrence of false positives and enhancing the security of client assets.These cases underscore the powerful impact of AI and cloud computing’s convergence on operational efficiency, user experience, and outcome-driven strategies. As organizations continue to realize the benefits of this collaboration, it’s becoming increasingly clear that the duo of AI and cloud computing stands at the heart of the next frontier in digital transformation.

Challenges and Potential Solutions

The merger of AI and cloud computing is paving the way for a smarter technology landscape. However, it’s not without its share of challenges that require strategic solutions:

Data Privacy and Governance

As data becomes more centralized in the cloud and AI models more nuanced in their data requirements, privacy concerns escalate. Solutions like federated learning allow AI model training on decentralized data, enhancing user privacy without compromising the dataset’s utility. Additionally, implementing stronger data governance and privacy laws aligned with technological advances can provide a structured approach to maintaining user trust.

Securing AI and Cloud Platforms

Securing the infrastructure underpinning AI and the cloud is critical. This means deploying advanced cybersecurity measures such as AI-driven threat detection systems, end-to-end encryption for data in transit and at rest, and multi-factor authentication. Regular security assessments and adherence to compliance standards like GDPR or HIPAA for specific industries can fortify the AI and cloud ecosystem against cyber threats.

Tackling the Skills Shortage

The sophisticated nature of AI and the expansive scope of cloud computing creates a high demand for specialized talent. Companies can forge partnerships with academic institutions to create targeted curricula that meet industry needs. Investing in continuous employee development through workshops and certifications can also help mitigate the skills gap and empower existing workforce members to adapt to new roles necessitated by AI and cloud technology.

Future Prospects

As the fusion between AI and cloud computing strengthens, the horizon of digital innovation expands:

IoT and AI: Smarter Devices Everywhere

The Internet of Things (IoT) stands to benefit immensely from AI and cloud computing, transitioning from simple connectivity to intelligent, autonomous operations. AI can be leveraged to process and analyze data collected by IoT devices to facilitate real-time decisions, while cloud platforms ensure global accessibility and scalability for IoT systems.

Amplifying Edge Computing

Edge computing is set to revolutionize how data is processed by bringing computation closer to the source of data creation. The convergence with AI allows for smarter edge devices that can process data on-site, reducing latency and reliance on centralized data centers. Cloud services come into play by providing management and orchestration layers for these distributed networks.

Building the Foundations of Smart Cities

Smart cities stand as testament to the potential of AI and cloud collaboration. They use AI’s analytical power and the cloud’s vast resource pool to optimize urban services, from traffic management to energy distribution. As smart cities evolve, they’ll likely become more responsive and adaptive, creating urban environments that are not only interconnected but also intelligent.In this landscape of emerging opportunities, businesses must pivot to embrace a future where AI and cloud computing no longer function as standalone tools, but as integrated components of a comprehensive tech ecosystem, propelling innovation at the speed of thought.