GPU spec sheets lie. Raw TFLOPS don’t equal real-world performance. 42% of AI teams report wasted spend from mismatched hardware. This guide cuts through the noise. Learn to compare GPUs using real efficiency metrics, not paper specs. Discover how WhaleFlux (intelligent GPU orchestration) unlocks hidden value in NVIDIA and cloud GPUs.

Part 1: Why GPU Spec Sheets Lie: The Comparison Gap

Don’t be fooled by big numbers:

- TFLOPS ≠ Real Performance: A 67 TFLOPS GPU can run slower than a 61 TFLOPS chip on AI workloads when memory bandwidth, not compute, is the bottleneck.

- Thermal Throttling: A GPU at 90°C can perform 15-25% below its “peak” spec because it lowers clock speeds to stay within thermal limits.

- Enterprise Reality: 42% of AI teams bought the wrong GPUs by focusing only on specs (WhaleFlux Survey 2024).
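The first bullet is the roofline model at work: a chip's usable throughput is the lesser of its peak compute and its memory bandwidth multiplied by the workload's arithmetic intensity (FLOPs performed per byte moved). Here is a minimal Python sketch; the two chips and their bandwidth figures are hypothetical, chosen only to show how a lower-TFLOPS part can win when a workload is memory-bound.

```python
def attainable_tflops(peak_tflops: float, bandwidth_tb_s: float, flops_per_byte: float) -> float:
    """Roofline estimate: throughput is capped by compute or by memory traffic."""
    # TB/s multiplied by FLOP/byte gives TFLOP/s
    memory_ceiling = bandwidth_tb_s * flops_per_byte
    return min(peak_tflops, memory_ceiling)


# Hypothetical chips: A has more compute, B has more memory bandwidth.
chip_a = {"peak_tflops": 67.0, "bandwidth_tb_s": 2.0}
chip_b = {"peak_tflops": 61.0, "bandwidth_tb_s": 3.0}

# Rough intensities: batch-1 LLM decoding with FP16 weights does about
# 2 FLOPs per 2-byte weight (~1 FLOP/byte); large matrix multiplies are far higher.
for intensity in (1.0, 100.0):
    a = attainable_tflops(chip_a["peak_tflops"], chip_a["bandwidth_tb_s"], intensity)
    b = attainable_tflops(chip_b["peak_tflops"], chip_b["bandwidth_tb_s"], intensity)
    print(f"{intensity:>6.1f} FLOP/byte: chip A {a:.1f} TFLOPS, chip B {b:.1f} TFLOPS")
```

At 1 FLOP/byte chip B delivers 3.0 TFLOPS to chip A's 2.0, despite the lower spec; at 100 FLOP/byte the spec-sheet ordering returns.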

Key Insight: Paper specs ignore cooling, software, and cluster dynamics.

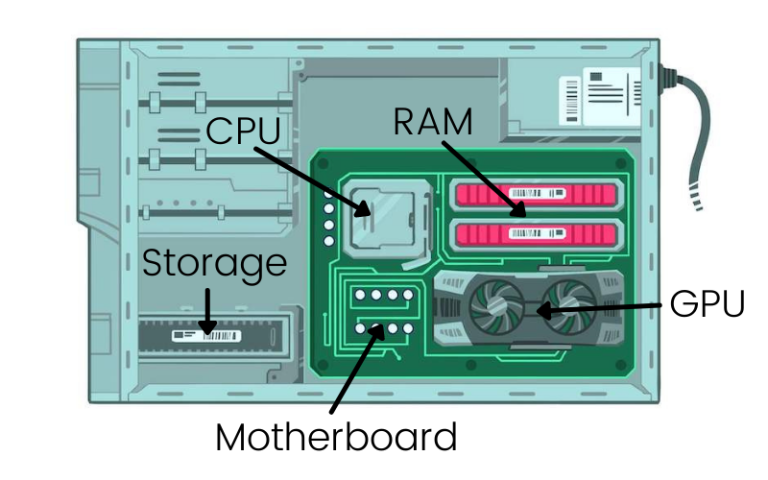

Part 2: Decoding GPU Charts: What Matters for AI

| Spec | Gaming Use | AI Enterprise Use |
|---|---|---|
| Clock Speed | FPS Boost | Minimal Impact |
| VRAM Capacity | 4K Textures | Model Size Limit |
| Memory Bandwidth | Frame Consistency | Batch Processing Speed |
| Power Draw (Watts) | Electricity Cost | Cost Per Token ($) |

⚠️ Warning: Consumer GPU charts rank cards by gaming frame rates, which say little about AI performance. Focus on throughput per dollar.
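Throughput per dollar reduces to simple arithmetic once you have a measured tokens-per-second figure and an hourly price. The sketch below assumes rental pricing per GPU-hour; the throughput values reuse the Part 4 table, but the prices are placeholders, not quotes.

```python
SECONDS_PER_HOUR = 3600


def cost_per_million_tokens(hourly_cost_usd: float, tokens_per_sec: float) -> float:
    """Dollars spent to generate one million tokens at a sustained rate."""
    if tokens_per_sec <= 0:
        raise ValueError("tokens_per_sec must be positive")
    tokens_per_hour = tokens_per_sec * SECONDS_PER_HOUR
    return hourly_cost_usd / tokens_per_hour * 1_000_000


def tokens_per_dollar(hourly_cost_usd: float, tokens_per_sec: float) -> float:
    """Throughput per dollar: tokens generated for each dollar of GPU time."""
    return tokens_per_sec * SECONDS_PER_HOUR / hourly_cost_usd


# Placeholder prices; plug in your own measured throughput and contract rates.
fast_expensive = cost_per_million_tokens(hourly_cost_usd=4.00, tokens_per_sec=94)
slow_cheap = cost_per_million_tokens(hourly_cost_usd=1.00, tokens_per_sec=41)
print(f"fast/expensive: ${fast_expensive:.2f} per 1M tokens")
print(f"slow/cheap:     ${slow_cheap:.2f} per 1M tokens")
```

With these placeholder prices the slower card is cheaper per token: its price is 4x lower while its throughput is only about 2.3x lower.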

Part 3: WhaleFlux Compare Matrix: Beyond Static Charts

WhaleFlux replaces outdated spreadsheets with a dynamic enterprise dashboard:

- Real-time overlays of NVIDIA/Cloud specs

- Cluster Efficiency Score (0-100 rating)

- TCO projections based on your workload

- Bottleneck heatmaps (spot VRAM/PCIe issues)
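WhaleFlux's heatmaps are its own; as a rough do-it-yourself approximation, `nvidia-smi`'s query mode can flag GPUs whose memory is nearly full while compute sits idle. This Python sketch is not how WhaleFlux works, and the thresholds and labels are arbitrary assumptions. Note that `utilization.gpu` reports the share of time any kernel was running, not how busy the SMs were, so treat the flags as hints.

```python
import subprocess
from dataclasses import dataclass
from typing import Dict, List

QUERY = "index,utilization.gpu,memory.used,memory.total"


@dataclass
class GpuSample:
    index: int
    util_pct: float
    mem_used_mib: float
    mem_total_mib: float

    @property
    def mem_pct(self) -> float:
        return 100.0 * self.mem_used_mib / self.mem_total_mib


def parse_samples(csv_text: str) -> List[GpuSample]:
    """Parse `--format=csv,noheader,nounits` output (one GPU per line).

    Fields that read "[N/A]" on some devices are not handled here.
    """
    samples = []
    for line in csv_text.strip().splitlines():
        idx, util, used, total = (field.strip() for field in line.split(","))
        samples.append(GpuSample(int(idx), float(util), float(used), float(total)))
    return samples


def read_nvidia_smi() -> List[GpuSample]:
    """Take one snapshot from the local driver (requires nvidia-smi on PATH)."""
    out = subprocess.run(
        ["nvidia-smi", f"--query-gpu={QUERY}", "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    ).stdout
    return parse_samples(out)


def flag_bottlenecks(samples: List[GpuSample], mem_high: float = 90.0,
                     util_low: float = 50.0) -> Dict[int, str]:
    """Attach a coarse, heuristic label to each GPU."""
    flags = {}
    for s in samples:
        if s.mem_pct >= mem_high and s.util_pct < util_low:
            # VRAM nearly full but kernels rarely run: check batch size,
            # host-to-device (PCIe) transfers, or data loading.
            flags[s.index] = "memory-pressure"
        elif s.mem_pct < 50.0 and s.util_pct < util_low:
            flags[s.index] = "idle"
        else:
            flags[s.index] = "ok"
    return flags
```

Sampling `read_nvidia_smi()` on a timer and plotting the labels per GPU over time gives a crude heatmap.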

Part 4: AI Workload Showdown: Specs vs Reality

| GPU Model | FP32 Spec (TFLOPS) | Real Llama 2 70B Throughput (tokens/s)* | WhaleFlux Efficiency |
|---|---|---|---|
| NVIDIA H100 | 67.8 | 94 | 92/100 (Elite) |
| Cloud L4 | 31.2 | 41 | 68/100 (Limited) |

*With WhaleFlux mixed-precision routing.
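To fill in a "real" throughput column for your own hardware, time the model rather than trusting the spec sheet. The harness below is a minimal sketch: `generate` is a hypothetical callable standing in for whatever inference stack you use. It must block until generation finishes and return the number of tokens produced. Timing covers the whole call, prompt processing included.

```python
import statistics
import time
from typing import Callable


def measure_tokens_per_sec(generate: Callable[[str], int], prompt: str,
                           warmup: int = 2, runs: int = 5) -> float:
    """Median end-to-end throughput over several timed runs."""
    if runs < 1:
        raise ValueError("runs must be at least 1")
    for _ in range(warmup):
        generate(prompt)  # untimed: lets kernels compile and caches fill
    rates = []
    for _ in range(runs):
        start = time.perf_counter()
        n_tokens = generate(prompt)
        elapsed = time.perf_counter() - start
        rates.append(n_tokens / elapsed)
    # The median resists one-off stalls better than the mean.
    return statistics.median(rates)
```

Run it with the same prompt lengths, batch sizes, and precision you plan to serve; tokens/sec measured on a toy prompt says little about production load.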

Part 5: Build Future-Proof GPU Frameworks

1. Dynamic Weighting (Prioritize Your Needs)


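```python
# WhaleFlux API: Custom GPU scoring
import whaleflux  # client package name assumed; check the WhaleFlux docs

# Relative importance of each metric (these sum to 1.0)
weights = {
    "vram": 0.6,     # Critical for 70B+ LLMs
    "tflops": 0.1,
    "cost_hr": 0.3,
}

gpu_score = whaleflux.calculate_score("mi300x", weights)  # Output: 87/100
```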
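How `whaleflux.calculate_score` works internally isn't described here, so the following is only a transparent stand-in for the same idea: min-max normalize each metric across your candidate GPUs, invert metrics where lower is better (like hourly cost), then take the weighted sum. The candidate names and numbers are placeholders.

```python
from typing import Dict

# Placeholder candidates; substitute your own specs and negotiated prices.
CANDIDATES: Dict[str, Dict[str, float]] = {
    "gpu_a": {"vram": 80.0, "tflops": 60.0, "cost_hr": 4.0},
    "gpu_b": {"vram": 24.0, "tflops": 30.0, "cost_hr": 1.0},
    "gpu_c": {"vram": 48.0, "tflops": 90.0, "cost_hr": 2.0},
}

# Metrics where a smaller value is better get inverted after normalization.
LOWER_IS_BETTER = {"cost_hr"}


def score_gpus(candidates: Dict[str, Dict[str, float]],
               weights: Dict[str, float]) -> Dict[str, float]:
    """Weighted sum of min-max normalized metrics, scaled to 0-100."""
    total_weight = sum(weights.values())
    scores = {name: 0.0 for name in candidates}
    for metric, weight in weights.items():
        values = [spec[metric] for spec in candidates.values()]
        low, high = min(values), max(values)
        for name, spec in candidates.items():
            if high == low:
                norm = 1.0  # every candidate ties on this metric
            else:
                norm = (spec[metric] - low) / (high - low)
                if metric in LOWER_IS_BETTER:
                    norm = 1.0 - norm
            scores[name] += (weight / total_weight) * norm
    return {name: round(100 * s, 1) for name, s in scores.items()}


weights = {"vram": 0.6, "tflops": 0.1, "cost_hr": 0.3}
print(score_gpus(CANDIDATES, weights))
```

With VRAM weighted at 0.6, the large-memory `gpu_a` ranks first even though `gpu_c` has the most TFLOPS. Because min-max scores are relative to the candidate set, adding or removing a GPU can shift every score.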

2. Lifecycle Cost Modeling

- Hardware cost

- 3-year power and cooling (H100: ~$15k in electricity; actual cost depends on local power prices and cooling overhead)

- WhaleFlux Depreciation Simulator
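A lifecycle cost model can start as a few lines of arithmetic: net hardware cost after resale, plus energy over the service life scaled by power usage effectiveness (PUE), the ratio of total facility power to IT power, which folds in cooling. This is a simple sketch with placeholder inputs, not the WhaleFlux Depreciation Simulator. It assumes straight-line depreciation and a constant average power draw.

```python
from dataclasses import dataclass

HOURS_PER_YEAR = 24 * 365  # ignores leap years


@dataclass
class LifecycleCost:
    hardware_usd: float             # purchase price minus expected resale value
    power_cooling_usd: float        # electricity, scaled by PUE to include cooling
    annual_depreciation_usd: float  # straight-line

    @property
    def total_usd(self) -> float:
        return self.hardware_usd + self.power_cooling_usd


def lifecycle_cost(purchase_usd: float, avg_power_watts: float, price_per_kwh: float,
                   pue: float = 1.0, years: float = 3.0,
                   resale_usd: float = 0.0) -> LifecycleCost:
    net_hardware = purchase_usd - resale_usd
    energy_kwh = avg_power_watts / 1000 * HOURS_PER_YEAR * years * pue
    return LifecycleCost(
        hardware_usd=net_hardware,
        power_cooling_usd=energy_kwh * price_per_kwh,
        annual_depreciation_usd=net_hardware / years,
    )


# Placeholder inputs; replace with your own quotes, measured draw, and tariff.
cost = lifecycle_cost(purchase_usd=10_000, avg_power_watts=500,
                      price_per_kwh=0.20, pue=1.5, resale_usd=2_000)
print(f"Total: ${cost.total_usd:,.0f} "
      f"(hardware ${cost.hardware_usd:,.0f}, power/cooling ${cost.power_cooling_usd:,.0f})")
```

Use measured average draw rather than TDP where you can; GPUs that sit idle draw well below their rated maximum.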

3. Sustainability Index
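The index itself isn't defined here, so as an illustration only: one common ingredient of a sustainability metric is energy per generated token. It follows directly from average power draw and measured throughput, because a watt is one joule per second. The grid carbon intensity below is a placeholder input, not a measured value.

```python
JOULES_PER_KWH = 3.6e6


def joules_per_token(avg_power_watts: float, tokens_per_sec: float) -> float:
    # W = J/s, so (J/s) / (tokens/s) = J/token
    return avg_power_watts / tokens_per_sec


def grams_co2_per_million_tokens(avg_power_watts: float, tokens_per_sec: float,
                                 grid_g_per_kwh: float, pue: float = 1.0) -> float:
    """Emissions for one million tokens, including cooling overhead via PUE."""
    kwh_per_token = joules_per_token(avg_power_watts, tokens_per_sec) * pue / JOULES_PER_KWH
    return kwh_per_token * grid_g_per_kwh * 1_000_000


# Placeholder inputs: 400 W average draw, 50 tokens/s, 400 gCO2/kWh grid.
print(f"{joules_per_token(400, 50):.1f} J/token")
print(f"{grams_co2_per_million_tokens(400, 50, 400, pue=1.3):.0f} gCO2 per 1M tokens")
```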

Part 6: Case Study: FinTech Saves $217k/Yr

Problem:

- Mismatched A100 nodes → 40% idle time

- $28k/month wasted cloud spend

WhaleFlux Solution:

- Identified overprovisioned nodes via Compare Matrix

- Switched to L40S + fragmentation compression

- Automated spot instance orchestration

Results:

✅ 37% higher throughput

✅ $217,000 annual savings

✅ 28-point efficiency gain

Part 7: Your Ultimate GPU Comparison Toolkit

Stop guessing. Start optimizing:

| Tool | Section | Value |
|---|---|---|
| Interactive Matrix Demo | Part 3 | See beyond static charts |
| Cloud TCO Calculator | Part 5 | Compare cloud vs on-prem |
| Workload Benchmark Kit | Part 4 | Real-world performance |
| API Priority Scoring | Part 5 | Adapt to your needs |