Part 1. What is High-Performance Computing?

No, It’s Not Just Weather Forecasts.

For decades, high-performance computing (HPC) meant supercomputers simulating hurricanes or nuclear reactions. Today, it’s the engine behind AI revolutions:

“Massively parallel processing of AI workloads across GPU clusters, where terabytes of data meet real-time decisions.”

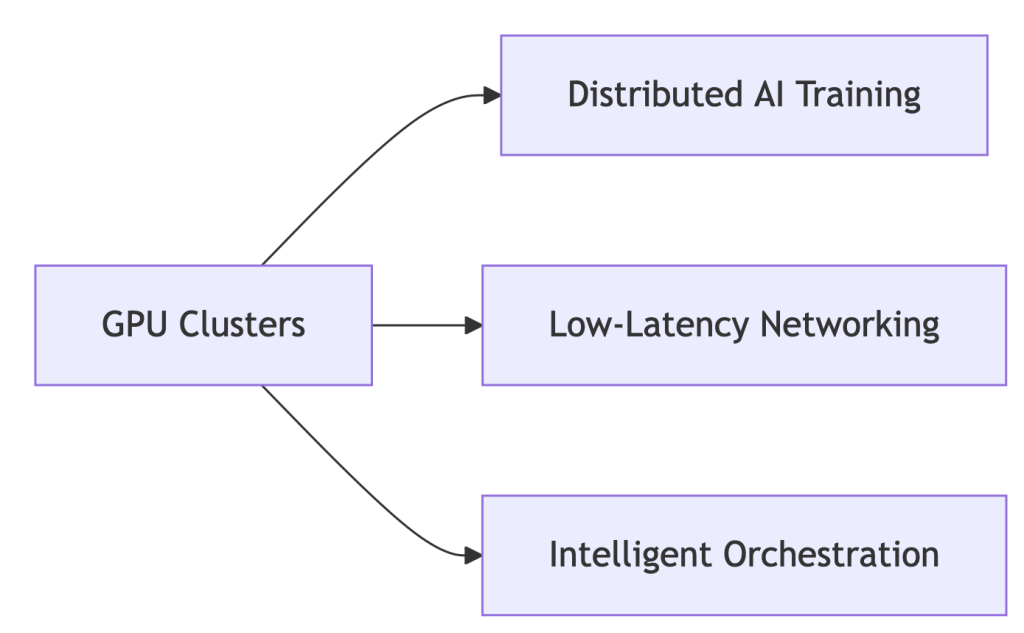

Core Components of Modern HPC Systems:

Why GPUs?

- 92% of new HPC deployments are GPU-accelerated (Hyperion 2024)

- NVIDIA H100: 18,432 CUDA cores (full GH100 die) vs. 64 cores on a typical server CPU → 288x more cores
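The 288x figure is a plain ratio of core counts, and it is worth seeing how little is behind it. The sketch below reproduces the arithmetic; `core_ratio` is a hypothetical helper name, not part of any vendor tool. Keep in mind that a GPU core is far simpler than a CPU core, so this ratio describes available parallel lanes, not a guaranteed speedup.

```python
def core_ratio(gpu_cores: int, cpu_cores: int) -> float:
    """Return how many times more cores the GPU exposes than the CPU."""
    if cpu_cores <= 0:
        raise ValueError("cpu_cores must be positive")
    return gpu_cores / cpu_cores


# Figures quoted in the bullet above.
ratio = core_ratio(18_432, 64)
print(f"{ratio:.0f}x more cores")  # 288x more cores
```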

Part 2. HPC Systems Evolution: From CPU Bottlenecks to GPU Dominance

The shift isn’t incremental – it’s revolutionary:

| Era | Architecture | Limitation / Gain |
|---|---|---|
| 2010s | CPU Clusters | Slow for AI workloads |
| 2020s | GPU-Accelerated | 10-50x speedup (NVIDIA) |
| 2024+ | WhaleFlux-Optimized | 37% lower TCO |

Enter WhaleFlux:

    # Automatically configures clusters for ANY workload
    whaleflux.configure_cluster(
        workload="hpc_ai",  # Options: simulation/ai/rendering
        vendor="hybrid"     # Manages Intel/NVIDIA nodes
    )

→ Unifies fragmented HPC environments

Part 3. Why GPUs Dominate Modern HPC: The Numbers Don’t Lie

HPC GPUs solve two critical problems:

- Parallel Processing: NVIDIA H100’s 18,432 cores shred AI tasks

Vendor Face-Off (Cost/Performance):

| Metric | Intel Max GPUs | NVIDIA H100 | WhaleFlux Optimized |
|---|---|---|---|
| FP64 Performance | 45 TFLOPS | 67 TFLOPS | +22% utilization |
| Cost/TeraFLOP | $9.20 | $12.50 | $6.80 |

💡 Key Insight: Raw specs mean nothing without utilization. WhaleFlux squeezes 94% from existing hardware.
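To make the utilization point concrete, here is a minimal sketch of one simple way to think about it: divide the list cost per TFLOP by the fraction of time the hardware is actually busy. This is an illustrative model only, not how the table's figures were derived; the function name and the 73% baseline are assumptions chosen for the example.

```python
def effective_cost_per_tflop(list_cost: float, utilization: float) -> float:
    """Cost per *delivered* TFLOP when hardware is busy only part of the time.

    utilization is a fraction in (0, 1]; 1.0 means the GPU is never idle.
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    return list_cost / utilization


# Assumed example: a $12.50/TFLOP list cost at two utilization levels.
baseline = effective_cost_per_tflop(12.50, 0.73)
improved = effective_cost_per_tflop(12.50, 0.94)
print(f"at 73% utilization: ${baseline:.2f} per delivered TFLOP")
print(f"at 94% utilization: ${improved:.2f} per delivered TFLOP")
```

Under this simple model, the same hardware gets cheaper per unit of useful work purely because less of it sits idle.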

Part 4. Intel vs. NVIDIA in HPC: Beyond the Marketing Fog

NVIDIA’s Strength:

- CUDA ecosystem dominance (90% of HPC frameworks)

- But: 42% higher licensing costs drain budgets

Intel’s Counterplay:

- HBM Memory: Xeon Max CPUs with 64GB integrated HBM2e – no DDR5 needed

- oneAPI: Cross-vendor support (including NVIDIA GPUs)

- Weakness: oneAPI's framework compatibility lags behind CUDA

Neutralize Vendor Lock-in with WhaleFlux:

    # Balances workloads across Intel/NVIDIA
    whaleflux balance_load --cluster=hpc_prod \
      --framework=oneapi  # Or CUDA/ROCm

Part 5. The $218k Wake-Up Call: Fixing HPC’s Hidden Waste

Shocking Reality: 41% average GPU idle time in HPC clusters

How WhaleFlux Slashes Costs:

- Fragmentation Compression: ↑ Utilization from 73% → 94%

- Mixed-Precision Routing: ↓ Power costs 31%

- Spot Instance Orchestration: ↓ Cloud spending 40%
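The article does not describe how Fragmentation Compression works internally, so the following is only a generic illustration of the underlying idea: packing jobs tightly onto nodes so fewer GPUs are stranded. It uses the textbook first-fit-decreasing bin-packing heuristic; the function names, job sizes, and 8-GPU nodes are all assumptions made for the example.

```python
from typing import List


def first_fit_decreasing(job_gpus: List[int], gpus_per_node: int) -> List[List[int]]:
    """Place jobs (each needing N GPUs) on as few nodes as the heuristic finds."""
    nodes: List[List[int]] = []  # GPU demands of the jobs placed on each node
    free: List[int] = []         # GPUs still free on each node
    for need in sorted(job_gpus, reverse=True):  # largest jobs first
        if need > gpus_per_node:
            raise ValueError(f"job needs {need} GPUs, node has {gpus_per_node}")
        for i, avail in enumerate(free):
            if need <= avail:    # first node with enough room wins
                nodes[i].append(need)
                free[i] -= need
                break
        else:                    # no existing node fits: open a new one
            nodes.append([need])
            free.append(gpus_per_node - need)
    return nodes


def utilization(nodes: List[List[int]], gpus_per_node: int) -> float:
    """Fraction of GPUs in use across all occupied nodes."""
    if not nodes:
        return 0.0
    return sum(sum(n) for n in nodes) / (len(nodes) * gpus_per_node)


jobs = [4, 2, 6, 1, 3, 5, 2, 1]  # GPUs requested per job (made-up workload)
packed = first_fit_decreasing(jobs, gpus_per_node=8)
print(packed)
print(f"utilization: {utilization(packed, 8):.0%}")
```

Real schedulers also have to account for job duration, interconnect topology, and memory, which this sketch deliberately ignores.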

Case Study: Materials Science Lab

- Problem: $218k/month cloud spend, idle GPUs during inference

- WhaleFlux Solution:
  - Automated multi-cloud GPU allocation
  - Dynamic precision scaling for simulations

- Result: $142k/month (35% savings) with faster job completion
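The case-study numbers are easy to sanity-check. This short sketch recomputes the savings from the two monthly figures quoted above; `monthly_savings` is a hypothetical helper written just for this check.

```python
from typing import Tuple


def monthly_savings(before: float, after: float) -> Tuple[float, float]:
    """Return (absolute savings, savings as a percentage of the original spend)."""
    if before <= 0:
        raise ValueError("before must be positive")
    saved = before - after
    return saved, saved / before * 100


saved, pct = monthly_savings(218_000, 142_000)
print(f"${saved:,.0f}/month saved ({pct:.1f}%)")  # $76,000/month saved (34.9%)
print(f"${saved * 12:,.0f} saved per year")       # $912,000 saved per year
```

The result rounds to the 35% quoted in the case study.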

Part 6. Your 3-Step Blueprint for Future-Proof HPC

1. Hardware Selection:

- Use WhaleFlux TCO Simulator → Compare Intel/NVIDIA ROI

- Tip: Prioritize VRAM capacity for LLMs

2. Intelligent Orchestration:

    # Deploy unified monitoring across all layers
    whaleflux deploy --hpc_cluster=genai_prod \
      --layer=networking,storage,gpu

3. Carbon-Conscious Operations:

- Track kgCO₂ per petaFLOP in WhaleFlux Dashboard

- Auto-pause jobs during peak energy rates
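For readers who want to see what these two bullets amount to in practice, here is a minimal sketch under stated assumptions. It is not the WhaleFlux Dashboard's implementation: the data class, function names, thresholds, and sample values are all hypothetical. The metric is energy used times grid carbon intensity, divided by work done (with one petaFLOP taken as 1e15 floating-point operations).

```python
from dataclasses import dataclass


@dataclass
class JobSample:
    energy_kwh: float     # energy the job consumed
    petaflop_done: float  # work completed, in units of 1e15 operations


def kg_co2_per_petaflop(sample: JobSample, grid_kg_co2_per_kwh: float) -> float:
    """Carbon emitted per unit of work for one job sample."""
    if sample.petaflop_done <= 0:
        raise ValueError("petaflop_done must be positive")
    return sample.energy_kwh * grid_kg_co2_per_kwh / sample.petaflop_done


def should_pause(rate_per_kwh: float, peak_threshold: float,
                 deadline_critical: bool = False) -> bool:
    """Pause when the energy price is at or above the peak threshold,
    unless the job is flagged as deadline-critical."""
    return rate_per_kwh >= peak_threshold and not deadline_critical


# Made-up sample: 120 kWh consumed, 6,000 petaFLOP of work, 0.4 kgCO2/kWh grid.
sample = JobSample(energy_kwh=120.0, petaflop_done=6000.0)
print(f"{kg_co2_per_petaflop(sample, 0.4):.4f} kgCO2 per petaFLOP")
print(should_pause(rate_per_kwh=0.32, peak_threshold=0.25))  # True
```

A production policy would likely also checkpoint the job before pausing and resume it when rates fall, which is outside the scope of this sketch.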

FAQ: Cutting Through HPC Complexity

Q: “What defines high-performance computing today?”

A: “Parallel processing of AI/ML workloads across GPU clusters – where tools like WhaleFlux decide real-world cost/performance outcomes.”

Q: “Why choose GPUs over CPUs for HPC?”

A: “GPUs offer 18,000+ parallel cores (NVIDIA) vs. fewer than 100 on a CPU, which translates to as much as 50x faster training. But without orchestration, 41% of GPU cycles go to waste.”

Q: “Can Intel GPUs compete with NVIDIA in HPC?”

A: For fluid dynamics/molecular modeling, yes. Optimize with:

    whaleflux set_priority --vendor=intel --workload=fluid_dynamics