How AI is Transforming Healthcare: 2025 Trends and Real-World Applications

I. Introduction: The New Era of Healthcare Intelligence

AI is no longer future speculation—it’s actively diagnosing diseases and personalizing treatments today. Across the globe, hospitals and research institutions are witnessing remarkable transformations: algorithms that can detect cancers from medical images with accuracy rivaling human experts, systems that can predict patient deterioration hours before clinical symptoms appear, and tools that are slashing drug development timelines from years to months. This isn’t science fiction; this is the current state of healthcare AI.

The healthcare AI revolution is creating better patient outcomes while generating unprecedented computational demands. Every breakthrough in medical AI comes with a hidden requirement: massive computing power to process enormous datasets, train complex models, and deliver real-time insights. The sophisticated algorithms that promise to revolutionize patient care require infrastructure that can handle their complexity and scale.

In this article, we’ll explore cutting-edge applications transforming patient care and examine the robust infrastructure needed to power them responsibly. Because in healthcare, where lives are on the line, reliable computational power isn’t just a technical requirement—it’s a medical necessity.

II. Real-World AI Applications Revolutionizing Healthcare

The theoretical potential of AI in healthcare has become practical reality across multiple domains. These applications are already making a measurable difference in patient care and medical research:

Medical Imaging Analysis: AI-assisted radiology and pathology

AI systems are now routinely analyzing X-rays, CT scans, and MRIs, helping radiologists detect abnormalities faster and with greater accuracy. In pathology, AI algorithms can examine tissue samples for cancerous cells, processing thousands of images in the time a human pathologist might review dozens. These systems aren’t replacing doctors but augmenting their capabilities, serving as incredibly thorough second opinions that never experience fatigue.

Drug Discovery Acceleration: From target identification to clinical trials

Pharmaceutical companies are using AI to dramatically shorten drug development cycles. Machine learning models can analyze biological data to identify promising drug candidates, predict how molecules will interact with targets in the body, and even design new compounds with specific therapeutic properties. This acceleration is particularly crucial in responding to emerging health threats, where traditional drug development timelines are unacceptably long.

Personalized Treatment Plans: Genomics and patient data integration

By analyzing a patient’s genetic information alongside their medical history and current health status, AI systems can help doctors develop truly personalized treatment plans. These systems can predict how individuals will respond to different medications, identify their risk factors for various conditions, and recommend preventative measures tailored to their specific biology.

Operational Efficiency: Hospital workflow optimization and administrative automation

Beyond clinical applications, AI is transforming healthcare operations. Intelligent systems are optimizing staff schedules, predicting patient admission rates to better manage resources, and automating administrative tasks like billing and documentation. This allows healthcare providers to focus more resources on patient care while reducing operational costs.

III. The Computational Challenge Behind Healthcare AI

Beneath these revolutionary applications lies a formidable computational challenge that many healthcare organizations underestimate until they begin their AI journey:

Massive data requirements: Medical images, genomic sequences, and patient records

Healthcare datasets are among the largest and most complex in any industry. A single hospital can generate terabytes of data daily from medical imaging alone. Genomic sequencing produces enormous files for each patient, while electronic health records accumulate decades of detailed patient history. Training AI models on these datasets requires not just substantial storage but immense processing power to extract meaningful patterns.

Need for rapid processing: Time-sensitive diagnostics and research timelines

In many healthcare scenarios, speed is critical. An AI system that takes hours to analyze a stroke patient’s brain scan provides little clinical value. Similarly, drug discovery is a race against time, and faster computation can translate directly into earlier treatments and lives saved. These time-sensitive applications demand high-performance computing infrastructure that can deliver results when they’re needed.

Regulatory compliance: Secure, reliable, and auditable computing environments

Healthcare AI operates under strict regulatory frameworks like HIPAA that mandate rigorous data protection, system reliability, and complete auditability. Computational infrastructure must ensure data security without compromising performance, maintain detailed access logs, and provide the stability required for clinical applications where downtime isn’t just inconvenient—it could impact patient care.

These challenges create a difficult balancing act for healthcare organizations: they need cutting-edge computational power, but they also require the security, reliability, and compliance features that general-purpose cloud solutions often struggle to provide.

IV. WhaleFlux: Powering Healthcare AI with Reliable GPU Infrastructure

This is where WhaleFlux serves as a critical enabler for healthcare AI initiatives. WhaleFlux provides the computational backbone that healthcare organizations need to deploy AI applications confidently and effectively, balancing performance with the unique requirements of the medical field.

WhaleFlux is an intelligent GPU resource management tool designed specifically for demanding AI applications, and it offers several key benefits for healthcare organizations:

Guaranteed availability for critical research and diagnostics

Unlike general-purpose cloud platforms where resources might be reclaimed or performance might vary, WhaleFlux provides dedicated access to computational resources. This guaranteed availability is crucial for healthcare applications where interrupted training jobs could delay research breakthroughs, and inconsistent inference performance could impact diagnostic accuracy.

Secure, compliant infrastructure for sensitive health data

WhaleFlux is built with the security requirements of healthcare data in mind. The platform provides the isolation, encryption, and access controls needed to handle protected health information while maintaining the performance necessary for complex AI workloads. This allows healthcare organizations to leverage their data for AI innovation without compromising on security or compliance.

Cost-effective scaling for research projects and production systems

Through intelligent resource optimization across multi-GPU clusters, WhaleFlux helps healthcare organizations maximize their computational investment. The platform’s efficient scheduling and load balancing ensure that expensive GPU resources are fully utilized, whether for research experiments or production diagnostic systems.

The WhaleFlux platform supports a range of NVIDIA GPUs tailored to different healthcare workloads. The flagship NVIDIA H100 and H200 cards provide the raw power needed for massive drug discovery simulations and foundation model training. The reliable A100 serves as an excellent balance of performance and stability for production medical imaging systems. For research and development work, the RTX 4090 offers tremendous value for prototyping new algorithms and processing smaller datasets.

V. Healthcare AI Success Stories: From Research to Reality

The transformative potential of healthcare AI becomes most evident when examining real-world implementations:

Case study 1: Medical research institution accelerating drug discovery

A prominent medical research foundation was struggling with computational limitations in their Alzheimer’s disease research. Their molecular simulation experiments were taking weeks to complete, dramatically slowing their search for promising therapeutic compounds. After implementing WhaleFlux with NVIDIA H100 GPUs, they achieved a 7x speedup in their simulation workflows. What previously took 21 days now completes in just 3, allowing researchers to explore more therapeutic possibilities and iterate more quickly on promising leads.

Case study 2: Hospital network improving diagnostic accuracy

A regional hospital network implemented an AI-assisted diagnostic system for detecting lung cancer in CT scans. Initially running on undersized computational infrastructure, the system took minutes to process each scan and frequently queued scans during peak hours. After migrating to WhaleFlux with A100 GPUs, processing time dropped to seconds, enabling real-time analysis. Radiologists reported higher confidence in their diagnoses, and the system identified several early-stage cancers that might otherwise have been missed.

Case study 3: Genomics company personalizing cancer treatments

A genomics startup specializing in personalized cancer treatment needed to process whole-genome sequencing data quickly enough to inform treatment decisions. Their existing infrastructure required days to analyze each genome, creating unacceptable delays for patients with aggressive cancers. By leveraging WhaleFlux’s optimized genomic analysis pipelines on H200 clusters, they reduced analysis time to under six hours, enabling oncologists to make data-informed treatment decisions while there was still time to adjust approaches.

VI. 2025 Healthcare AI Trends: What’s Next and How to Prepare

As we look toward the near future, several emerging trends are poised to further transform healthcare delivery:

Predictive analytics and preventative care models

The next wave of healthcare AI will shift from reactive to proactive care. Systems will increasingly analyze patterns across population health data, genetic predispositions, and individual health metrics to predict disease risks before symptoms appear. This will enable truly preventative medicine, where interventions occur before conditions develop or progress.

AI-powered surgical assistance and robotics

Surgical AI is advancing beyond current robotic assistance systems. Next-generation platforms will provide real-time guidance during procedures, alert surgeons to potential complications before they become critical, and even automate certain aspects of surgeries with superhuman precision. These systems will make complex procedures safer and more accessible.

Integrated health monitoring and continuous care

The combination of wearable devices, in-home sensors, and AI analysis will create continuous health monitoring systems that extend care beyond clinical settings. These systems will detect subtle changes in health status, provide personalized health recommendations, and alert care teams when intervention is needed, fundamentally changing chronic disease management.

Each of these trends shares a common requirement: increasingly sophisticated computational infrastructure. The AI models powering these advances are growing more complex, requiring more data and more processing power. Healthcare organizations preparing for these innovations need infrastructure that can scale with their ambitions while maintaining the reliability required for clinical applications.

VII. Building Your Healthcare AI Strategy: A Practical Framework

Success in healthcare AI requires more than just technical implementation—it demands a thoughtful strategy that aligns technology with clinical needs. Here’s a practical framework for healthcare organizations embarking on their AI journey:

Assessing organizational readiness and use case prioritization

Begin by honestly evaluating your organization’s data maturity, technical expertise, and clinical workflows. Identify use cases that offer clear clinical or operational value while matching your current capabilities. Early wins with well-scoped projects build momentum for more ambitious initiatives.

Computational infrastructure planning: Balancing performance and compliance

Select infrastructure that meets both your performance requirements and your compliance obligations. Consider not just raw computational power but also data security, system reliability, and integration with existing clinical systems. The infrastructure should support both current projects and anticipated future needs.

Implementation roadmap: From pilot projects to organization-wide deployment

Start with focused pilot projects that demonstrate value quickly while limiting risk. Use these initial implementations to build expertise, establish best practices, and generate evidence of ROI. Then systematically expand successful pilots while continuously evaluating and refining your approach.

How WhaleFlux’s flexible purchase/rental model supports evolving healthcare needs

WhaleFlux’s approach to computational resources aligns perfectly with this strategic framework. Our purchase option provides stability and cost-effectiveness for proven production workloads, while our rental model (with a minimum one-month commitment) offers flexibility for research projects and pilot implementations. This allows healthcare organizations to match their computational investment to their implementation stage, avoiding overcommitment during exploration while ensuring adequate resources for scaling successful initiatives.

Conclusion: Responsible AI for Better Health Outcomes

As we’ve seen throughout this exploration, healthcare AI’s potential to transform patient outcomes is virtually limitless, but realizing this potential depends on robust, reliable infrastructure. The most innovative algorithms and the most comprehensive datasets deliver little value without the computational foundation to bring them to life in clinical settings.

The right computational partner accelerates innovation while ensuring the reliability and security that healthcare applications demand. In an industry where system failures can have serious consequences, computational infrastructure must be judged not just by its performance but by its stability, security, and compliance with medical standards.

Ready to harness AI’s transformative potential for your healthcare organization? Start your healthcare AI journey with WhaleFlux’s purpose-built GPU solutions today. Explore our healthcare-optimized configurations and discover how our reliable computational infrastructure can power your AI initiatives while meeting the unique requirements of the medical field. Don’t let computational limitations constrain your ability to improve patient care—let WhaleFlux provide the foundation your healthcare AI initiatives need to succeed.

A Comprehensive Guide for AI Developers

Introduction

Artificial Intelligence is no longer a technology of the future; it’s the engine of our present. From crafting human-like text with large language models to enabling self-driving cars, AI is reshaping industries at a breathtaking pace. At the heart of this revolution is a special kind of engineer: the artificial intelligence developer. These are the architects of intelligence, the ones who turn complex algorithms into real-world solutions.

Yet, for all the excitement, the path of an AI developer is often paved with significant hurdles. Many teams find themselves grappling with the very infrastructure that powers their innovation: the GPU clusters. The challenges are all too familiar—sky-high cloud computing bills that drain budgets, frustrating delays in model training, and unpredictable instability when deploying these sophisticated models. The immense computational power required, especially for large language models, can become a bottleneck, slowing down progress and inflating costs.

This is where the need for intelligent infrastructure management becomes critical. What if you could focus more on designing groundbreaking AI and less on managing the complex hardware it runs on? This is precisely the problem WhaleFlux is designed to solve. WhaleFlux is an intelligent GPU resource management tool built specifically for AI enterprises. By optimizing how multi-GPU clusters are used, it directly addresses the core pain points of modern AI development, helping businesses significantly reduce cloud costs while dramatically speeding up the deployment and enhancing the stability of their large language models. Let’s explore how the modern AI developer can thrive by leveraging such smart tools.

Section 1: Understanding the Role of an Artificial Intelligence Developer

So, what does an artificial intelligence developer actually do? In essence, they are part data scientist, part software engineer, and part innovator. Their work involves a multi-stage process: they first define the problem an AI should solve, then gather and prepare massive datasets, design and select appropriate neural network architectures, train these models on powerful hardware, and finally, deploy them into production environments where they can deliver real value.

The most demanding part of this workflow, particularly for training and inference with large language models, is the computational heavy lifting. Tasks like training models with billions of parameters or generating high-resolution images require parallel processing on a massive scale. This is where GPUs (Graphics Processing Units) come in. Unlike standard CPUs, which have a comparatively small number of cores, GPUs have thousands of cores that can perform many calculations simultaneously, making them well suited to the matrix and vector operations fundamental to deep learning.

However, simply having access to GPUs isn’t enough. The real challenge lies in using them efficiently. An AI developer might have a cluster of powerful GPUs at their disposal, but if those resources are poorly managed—if some GPUs sit idle while others are overloaded, or if jobs are queued unnecessarily—the entire development cycle suffers. Inefficient GPU usage directly translates into longer training times, missed deadlines, and wasted money. This inefficiency is the gap that WhaleFlux aims to close, ensuring that the valuable compute power available is fully and intelligently utilized.

Section 2: Key Steps in How to Develop Artificial Intelligence

To understand where tools like WhaleFlux add the most value, it’s helpful to walk through the fundamental steps of creating an AI model. While each project is unique, most follow a similar lifecycle.

- Data Preparation: This is the foundational step. AI developers collect, clean, and label vast amounts of data. The old adage “garbage in, garbage out” is especially true in AI. This stage requires significant storage and data processing power, but it’s the next steps where GPU demand skyrockets.

- Model Training: This is the most computationally intensive phase. Here, the AI model learns patterns from the prepared data. For a large language model, this involves feeding it terabytes of text and adjusting billions of internal parameters over and over again. Even on a multi-GPU cluster, this process can take weeks or months. High-performance GPUs like the NVIDIA H100 or A100 are essential here, as their specialized tensor cores dramatically accelerate the matrix calculations involved.

- Testing and Evaluation: Once trained, the model must be rigorously tested on unseen data to evaluate its accuracy, bias, and performance. This often involves running multiple inference jobs and can still require substantial GPU power, especially for complex models.

- Deployment: Finally, the trained model is deployed into a live environment—a website, an app, or an API—where it can serve users. This deployment phase requires not just power, but also remarkable stability and scalability to handle fluctuating user requests without crashing or slowing down.

Throughout this entire lifecycle, from the intensive training runs to the critical deployment stage, the reliance on high-performance GPUs is constant. Any bottleneck or instability in the GPU cluster can derail the project. WhaleFlux streamlines this entire process by acting as an intelligent orchestrator for your multi-GPU cluster. It ensures that during training, all available GPUs are used to their fullest capacity, drastically reducing training time. During deployment, it manages the load intelligently, preventing any single GPU from becoming a point of failure and ensuring your models remain stable and responsive for end-users.
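To make the deployment-side idea concrete, here is a minimal sketch of one common load-balancing rule: send each incoming request to whichever GPU currently carries the least work. It is an illustration only, not WhaleFlux’s actual implementation; the function name `dispatch_least_loaded` and the request costs are hypothetical.

```python
import heapq


def dispatch_least_loaded(request_costs, num_gpus):
    """Assign each request to the GPU with the smallest accumulated load.

    request_costs: estimated cost of each request, in arbitrary units.
    Returns (assignments, loads): assignments[i] is the GPU index chosen
    for request i, and loads[g] is the total cost placed on GPU g.
    """
    # Min-heap of (current_load, gpu_index); ties go to the lower index.
    heap = [(0.0, gpu) for gpu in range(num_gpus)]
    heapq.heapify(heap)

    assignments = []
    loads = [0.0] * num_gpus
    for cost in request_costs:
        load, gpu = heapq.heappop(heap)      # least-loaded GPU right now
        assignments.append(gpu)
        loads[gpu] = load + cost
        heapq.heappush(heap, (loads[gpu], gpu))
    return assignments, loads


# Hypothetical request costs, dispatched across three GPUs.
assignments, loads = dispatch_least_loaded([5, 4, 3, 3, 2, 1], num_gpus=3)
print(assignments)  # [0, 1, 2, 2, 1, 0]
print(loads)        # [6.0, 6.0, 6.0]
```

A production balancer would also account for request timeouts, GPU memory, and hardware failures; the sketch only shows why tracking per-GPU load keeps any single device from becoming a hotspot.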

Section 3: Common Challenges for Artificial Intelligence Developers in GPU Management

Despite having access to powerful hardware, AI teams frequently run into three major problems related to GPU management.

First is underutilized GPU clusters. It’s surprisingly common for expensive GPUs to sit idle due to poor job scheduling. Imagine a team with a cluster of eight NVIDIA A100 GPUs. Without intelligent management, one developer might accidentally lock all eight GPUs for a small job that only needs one, while another developer’s critical training job sits in the queue. Average GPU utilization in many clusters is reported to be as low as 30%, which means that as much as 70% of a company’s expensive compute capacity goes unused.
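The cost of low utilization is easy to quantify. The short calculation below shows how the effective price of a useful GPU-hour rises as utilization falls. The hourly price is a made-up placeholder, not a quote from any provider.

```python
def cost_per_useful_gpu_hour(hourly_price, utilization):
    """Effective price of one GPU-hour of real work.

    utilization is the fraction of paid time the GPU is actually busy (0-1].
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in the range (0, 1]")
    return hourly_price / utilization


HOURLY_PRICE = 3.00  # hypothetical price per GPU-hour, for illustration only

for utilization in (0.30, 0.80):
    effective = cost_per_useful_gpu_hour(HOURLY_PRICE, utilization)
    idle_share = 1 - utilization
    print(f"{utilization:.0%} utilized: {idle_share:.0%} idle, "
          f"${effective:.2f} per useful GPU-hour")
```

Under these assumed numbers, raising utilization from 30% to 80% cuts the effective cost of useful compute from roughly $10.00 to $3.75 per GPU-hour without changing the hardware at all.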

Second, soaring cloud expenses are a constant headache. Leading cloud providers charge a premium for on-demand GPU instances. When utilization is low, companies are essentially pouring money down the drain. Furthermore, the “pay-by-the-second” model, while flexible, can lead to shockingly high bills if a training job runs longer than expected or if resources are not promptly released after use.

Third, instability in model deployments can damage user trust and product reliability. When a deployed model suddenly experiences a spike in user traffic, an inflexible GPU resource allocation can cause slow response times or even total service outages. For a business relying on an AI-powered chatbot or recommendation engine, this instability directly impacts the bottom line and brand reputation.

These aren’t minor inconveniences; they are fundamental barriers that slow down AI innovation. They force developers to spend their time on DevOps and infrastructure firefighting instead of on core algorithm development. This is the critical juncture where WhaleFlux serves as a powerful remedy. By implementing intelligent resource allocation and automated scheduling, WhaleFlux keeps the GPUs in your cluster working efficiently. It dynamically assigns workloads based on availability and priority, reducing idle resources and queue times. This directly translates into lower cloud costs and a much more stable, reliable environment for deploying models, effectively breaking down the barriers that hinder AI development.

Section 4: How WhaleFlux Empowers AI Developers with Smart GPU Solutions

WhaleFlux is designed from the ground up to give AI developers a decisive edge. It operates as the intelligent control layer for your GPU infrastructure, built with features that directly tackle the challenges we’ve discussed.

Its core functionality rests on three pillars:

- Intelligent Scheduling: WhaleFlux automatically queues and dispatches AI workloads to the most appropriate GPUs in the cluster. It understands job priorities and resource requirements, ensuring that high-priority training jobs don’t get stuck behind less critical tasks. This eliminates manual assignment and dramatically boosts overall cluster productivity.

- Dynamic Load Balancing: When serving models in production, WhaleFlux doesn’t let any single GPU become a bottleneck. It distributes incoming inference requests evenly across the cluster, ensuring consistent performance and high availability even during traffic spikes.

- Comprehensive Monitoring: The platform provides a clear, real-time dashboard showing the health and utilization of every GPU. This gives teams full visibility into their resource consumption, helping them identify inefficiencies and make data-driven decisions.
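As a rough illustration of the scheduling pillar, the sketch below implements a generic priority queue for GPU jobs: the most urgent job starts first, provided enough GPUs are free. It is not WhaleFlux’s API or algorithm; the class names, job names, and priority numbers are all hypothetical.

```python
import heapq
from dataclasses import dataclass, field
from itertools import count


@dataclass(order=True)
class Job:
    priority: int                          # lower number = more urgent
    seq: int                               # submission order, breaks ties
    name: str = field(compare=False)
    gpus_needed: int = field(compare=False)


class PriorityScheduler:
    """Strict-priority scheduler over a fixed pool of identical GPUs."""

    def __init__(self, total_gpus):
        self.free_gpus = total_gpus
        self._queue = []
        self._seq = count()

    def submit(self, name, gpus_needed, priority):
        job = Job(priority, next(self._seq), name, gpus_needed)
        heapq.heappush(self._queue, job)

    def dispatch(self):
        """Start queued jobs in priority order while enough GPUs are free."""
        started = []
        while self._queue and self._queue[0].gpus_needed <= self.free_gpus:
            job = heapq.heappop(self._queue)
            self.free_gpus -= job.gpus_needed
            started.append(job.name)
        return started

    def release(self, gpus):
        """Return GPUs to the pool when a job finishes."""
        self.free_gpus += gpus


scheduler = PriorityScheduler(total_gpus=8)
scheduler.submit("notebook-experiment", gpus_needed=1, priority=5)
scheduler.submit("llm-training", gpus_needed=6, priority=1)
scheduler.submit("batch-eval", gpus_needed=4, priority=3)

print(scheduler.dispatch())   # ['llm-training']
scheduler.release(6)          # the training job finishes
print(scheduler.dispatch())   # ['batch-eval', 'notebook-experiment']
```

Note that this strict version makes the one-GPU notebook job wait behind the four-GPU evaluation job even though a GPU is free. Real schedulers typically add backfilling so small jobs can use such gaps, which is one of the ways a smarter scheduler raises utilization.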

The benefits for AI developers are immediate and substantial. Cost savings are realized through drastically improved utilization; you get more work done from the same set of GPUs, reducing the need to rent additional expensive instances. Improved deployment speed is achieved because the streamlined pipeline from training to deployment means models get to production faster. Most importantly, increased stability for large language models becomes the new normal, as the intelligent load balancing prevents crashes and ensures a smooth user experience.

To support these capabilities, WhaleFlux offers a curated fleet of top-tier NVIDIA GPUs, including the flagship NVIDIA H100 and H200 for the most demanding training workloads, the versatile A100 for a balance of performance and efficiency, and the powerful RTX 4090 for robust inference and mid-range training. We believe in providing flexible access to this power. Companies can either purchase these GPUs for their on-premise data centers or rent them through our platform. To maintain cluster stability and prevent the fragmentation that harms performance, our rental model is committed, with a minimum term of one month, ensuring dedicated, reliable resources for your serious AI projects.

Section 5: Practical Tips for AI Developers Using WhaleFlux

Integrating a powerful tool like WhaleFlux into your workflow is straightforward, but a few strategic steps can maximize its impact.

First, match the GPU to the task. Not every job requires the most powerful chip. Use the NVIDIA H100 or H200 for your most intensive, company-scale large language model training. For fine-tuning models or handling high-volume inference, the A100 or even the RTX 4090 can be a more cost-effective choice without sacrificing performance. WhaleFlux’s monitoring tools can help you analyze your workloads and make the right choice.

Second, use the scheduler proactively. Don’t just submit jobs blindly. Define the resource requirements and priorities for your training runs. By telling WhaleFlux what you need, it can optimally pack jobs into the cluster, ensuring your resources are used 24/7.
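Declaring resource requirements up front is what makes tight packing possible. The sketch below uses first-fit decreasing, a standard bin-packing heuristic, to place jobs onto eight-GPU nodes. It is a generic illustration rather than a description of how WhaleFlux packs jobs, and the job names and sizes are invented.

```python
def pack_jobs(jobs, gpus_per_node):
    """First-fit decreasing: largest jobs first, each on the first node with room.

    jobs: dict mapping job name -> number of GPUs requested.
    Returns a list of nodes, each a list of the job names placed on it.
    """
    nodes = []  # job names per node
    free = []   # free GPUs per node, parallel to `nodes`
    ordered = sorted(jobs.items(), key=lambda item: item[1], reverse=True)
    for name, need in ordered:
        if need > gpus_per_node:
            raise ValueError(f"{name} needs more GPUs than one node provides")
        for i, room in enumerate(free):
            if need <= room:
                nodes[i].append(name)
                free[i] -= need
                break
        else:
            # No existing node has room, so open a new one.
            nodes.append([name])
            free.append(gpus_per_node - need)
    return nodes


# Hypothetical job requests: 20 GPUs in total.
requests = {
    "pretrain": 6, "finetune-a": 4, "finetune-b": 4,
    "eval": 2, "notebook": 1, "ablation": 3,
}
for node_id, placed in enumerate(pack_jobs(requests, gpus_per_node=8)):
    print(node_id, placed)
```

With these example requests, the 20 GPUs of demand fit on three eight-GPU nodes, which is the minimum possible. Without declared requirements, a scheduler would have to guess or reserve whole nodes per job.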

Consider the experience of a mid-sized AI startup, “Nexus AI,” that was struggling to deploy their new conversational AI model. Their training times were slow due to resource contention among their team of ten developers, and their weekly cloud bills were unsustainable. After integrating WhaleFlux, they saw a change within the first billing cycle. By using WhaleFlux’s intelligent scheduling on a rented cluster of NVIDIA A100s, they eliminated their internal queue and reduced their average model training time by 40%. Furthermore, the stability of their deployed model improved dramatically, with response times during peak hours dropping by 60%. Their cloud costs were cut in half, allowing them to re-invest those savings into further research and development.

This example shows that “how to develop artificial intelligence” is no longer just about writing better code. It’s about building a smarter, more efficient development infrastructure. WhaleFlux makes the entire process more efficient, reliable, and cost-effective, freeing developers to focus on what they do best: innovation.

Conclusion

The journey of an artificial intelligence developer is filled with immense potential, but it is also fraught with infrastructure-related challenges. Managing GPU resources efficiently is a critical, yet often overwhelming, task that can dictate the success or failure of AI initiatives. The hurdles of high costs, slow deployment, and system instability are real, but they are not insurmountable.

As we’ve seen, smart GPU management tools like WhaleFlux provide a reliable and powerful path to overcome these hurdles. By optimizing multi-GPU cluster utilization, WhaleFlux directly empowers AI developers and their enterprises to achieve more with less—less cost, less delay, and less complexity. It fosters an environment where innovation can thrive, unencumbered by the limitations of the underlying infrastructure.

Are you ready to accelerate your AI development, reduce your cloud spend, and deploy your models with confidence? It’s time to stop letting GPU management slow you down. Visit the WhaleFlux website today to learn more about how our smart GPU solutions can transform your workflow. Explore our range of NVIDIA H100, H200, A100, and RTX 4090 GPUs and discover the flexible purchase and rental options designed to fuel your long-term AI ambitions.

Edge Artificial Intelligence: The Complete Guide to Deploying AI Where It Matters Most

I. Introduction: The Rise of Intelligent Edge Computing

Imagine an autonomous vehicle making split-second decisions to avoid obstacles, a smart factory detecting manufacturing defects in real time, or a medical device analyzing patient vitals instantly without cloud dependency. These aren’t futuristic concepts; they’re real-world applications of edge artificial intelligence that are transforming industries today. This approach moves AI processing from centralized cloud data centers directly to the devices where data is generated, enabling immediate insights and actions without the latency and bandwidth costs of a cloud round trip.

Edge artificial intelligence represents a fundamental shift in how we deploy and benefit from artificial intelligence. Instead of sending data to distant servers for processing, edge AI runs algorithms locally on devices—from smartphones and sensors to specialized hardware in factories and vehicles. This paradigm enables intelligent systems that can see, hear, understand, and respond to their environment in real-time, without constant internet connectivity.

This comprehensive guide explores the world of artificial intelligence at the edge, examining its transformative benefits, implementation challenges, and strategic considerations. We’ll also demonstrate how WhaleFlux provides the essential development infrastructure that enables teams to build, optimize, and deploy sophisticated edge AI solutions efficiently and cost-effectively.

II. What is Edge Artificial Intelligence?

At its core, edge artificial intelligence represents a fundamental architectural shift from cloud-dependent AI systems to localized, real-time intelligent processing. Where traditional AI relies on sending data to powerful remote servers for analysis, edge AI brings the computational power directly to the source of data generation. This approach transforms ordinary devices into intelligent systems capable of making autonomous decisions without external processing.

The key characteristics that define edge AI systems include:

Low Latency Decision-Making

By processing data locally, edge AI systems eliminate the round-trip time to cloud servers, enabling near-immediate responses. This is crucial for applications where milliseconds matter, such as autonomous vehicles detecting pedestrians or industrial robots avoiding collisions. With network latency removed, response time is bounded only by on-device processing, so systems can react to their environment in real time.

Reduced Bandwidth Requirements

Edge AI significantly minimizes the need for continuous data transmission to the cloud. Instead of streaming high-volume sensor data 24/7, only processed results, alerts, or occasional model updates need to be transmitted. This not only reduces bandwidth costs but also makes AI practical in bandwidth-constrained environments like remote locations or mobile applications.
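The pattern described here can be sketched in a few lines: raw readings stay on the device, and only a compact summary plus any alerts would be sent upstream. In this simplified example a fixed threshold stands in for the on-device model, and the function name, readings, and threshold are all hypothetical.

```python
def summarize_on_device(readings, threshold):
    """Reduce a batch of raw readings to the small payload worth transmitting.

    A real edge deployment would run a trained model here; a simple
    threshold is used as a stand-in to keep the sketch self-contained.
    """
    if not readings:
        raise ValueError("readings must not be empty")
    alerts = [(i, value) for i, value in enumerate(readings) if value > threshold]
    return {"count": len(readings), "max": max(readings), "alerts": alerts}


# Hypothetical sensor batch, e.g. heart-rate samples from a wearable.
raw = [71, 72, 70, 118, 73, 71, 125, 72]
payload = summarize_on_device(raw, threshold=110)
print(payload)
# {'count': 8, 'max': 125, 'alerts': [(3, 118), (6, 125)]}
```

Eight raw samples shrink to a single small record, and the same shape of saving applies when the input is video frames or high-rate sensor streams rather than a short list.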

Enhanced Privacy and Data Security

Sensitive data never leaves the local device, addressing critical privacy concerns and regulatory requirements. Medical devices can process patient information locally, surveillance systems can identify threats without transmitting video footage, and industrial systems can protect proprietary processes while still benefiting from AI capabilities.

Operation Without Constant Internet Connectivity

Edge AI systems function reliably even when network connections are unavailable or intermittent. This ensures continuous operation in challenging environments—from offshore platforms and rural areas to moving vehicles and remote field operations. The intelligence travels with the device, independent of cloud infrastructure.
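
One common way to tolerate intermittent connectivity is a store-and-forward buffer: the device keeps working and queues its results until a link is available. The sketch below assumes a hypothetical `send` callback that returns `True` on success, and a bounded queue that drops the oldest result when full. Both are illustrative choices, not a description of any particular product.

```python
from collections import deque


class OfflineBuffer:
    """Bounded queue of inference results awaiting upload."""

    def __init__(self, capacity):
        # With maxlen set, appending to a full deque discards the oldest item.
        self._queue = deque(maxlen=capacity)

    def record(self, result):
        self._queue.append(result)

    def flush(self, send):
        """Send queued results in order; stop at the first failure."""
        sent = 0
        while self._queue:
            if not send(self._queue[0]):
                break  # link is down, keep the rest for later
            self._queue.popleft()
            sent += 1
        return sent

    def __len__(self):
        return len(self._queue)


buffer = OfflineBuffer(capacity=3)
for result in ("r1", "r2", "r3", "r4"):
    buffer.record(result)  # "r1" is dropped once the buffer is full

offline_sent = buffer.flush(lambda item: False)  # no connectivity
received = []
online_sent = buffer.flush(lambda item: received.append(item) or True)
```

Dropping the oldest entries suits telemetry where recent data matters most. A safety-critical device might instead persist results to local storage so nothing is lost.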

Contrasting edge AI with cloud-based systems reveals complementary rather than competing approaches. Cloud AI excels at training complex models, processing massive historical datasets, and serving applications that aren’t latency-sensitive. Edge AI specializes in real-time inference, privacy-sensitive applications, and environments where connectivity cannot be guaranteed. The most effective AI strategies often combine both, using the cloud for training and updates while deploying optimized models to the edge for real-time execution.

III. The Driving Forces Behind Artificial Intelligence at the Edge

Several powerful trends are accelerating the adoption of edge artificial intelligence across industries, each addressing specific limitations of cloud-only approaches while unlocking new capabilities.

Real-Time Requirements

Many modern applications simply cannot tolerate the latency of cloud round-trips. Autonomous vehicles must process sensor data and make driving decisions within milliseconds. Industrial automation systems need instant responses to ensure worker safety and manufacturing quality. Medical diagnostic devices must provide immediate analysis during critical procedures. In these scenarios, artificial intelligence at the edge isn’t just convenient—it’s essential for functionality and safety.

Bandwidth and Cost Optimization

The exponential growth of data from IoT devices, cameras, and sensors makes continuous cloud transmission impractical and expensive. A single high-resolution camera streaming raw or lightly compressed video can generate over 1 TB of data per day, and transmitting this to the cloud would be cost-prohibitive for most applications. Edge AI processes this data locally, sending only meaningful insights or compressed information, often reducing bandwidth requirements by 90% or more while maintaining full analytical capabilities.
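
How much data a camera produces per day depends heavily on resolution, frame rate, and compression. The following back-of-the-envelope calculation makes that concrete. The 1080p, 30 fps, 3 bytes per pixel, and 8 Mbps figures are assumptions chosen for illustration, not measurements from a specific device.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def raw_bytes_per_day(width, height, bytes_per_pixel, fps):
    """Daily volume of uncompressed video."""
    return width * height * bytes_per_pixel * fps * SECONDS_PER_DAY


def encoded_bytes_per_day(bitrate_mbps):
    """Daily volume of a compressed stream at a constant bitrate."""
    return bitrate_mbps * 1_000_000 / 8 * SECONDS_PER_DAY


# Assumed camera: 1920x1080, 24-bit colour, 30 frames per second.
raw_tb = raw_bytes_per_day(1920, 1080, 3, 30) / 1e12
# Assumed encoder output: 8 megabits per second.
encoded_gb = encoded_bytes_per_day(8) / 1e9

print(f"raw: {raw_tb:.1f} TB/day, encoded at 8 Mbps: {encoded_gb:.1f} GB/day")
```

Under these assumptions the raw stream is roughly 16 TB per day while the compressed stream is under 100 GB per day. Either way, sending only detections or short event clips cuts the volume by a large factor.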

Privacy and Security

Increasingly stringent data protection regulations (GDPR, HIPAA, CCPA) and growing consumer privacy concerns make local data processing particularly attractive. Edge AI supports compliance by design: personal data, proprietary processes, and sensitive information can remain on local devices. This approach is increasingly favored in healthcare, finance, and any application handling personally identifiable information.

Reliability and Resilience

Systems that must function regardless of network conditions naturally gravitate toward edge AI. Agricultural equipment in remote fields, mining operations underground, emergency response systems during disasters, and military applications in contested environments all require AI capabilities that cannot be disrupted by connectivity issues. Edge AI provides autonomous intelligence that works consistently in any environment.

IV. Key Challenges in Edge AI Implementation

While the benefits of edge AI are compelling, successful implementation requires overcoming several significant technical and operational challenges.

Hardware Limitations

The fundamental constraint of edge AI lies in the balance between computational requirements and physical limitations. Edge devices must deliver meaningful AI performance while operating within strict power budgets, thermal envelopes, and size constraints. This creates an ongoing tension between model sophistication and practical deployment—the most accurate AI model is useless if it cannot run on available edge hardware. Developers must navigate complex trade-offs between performance, power consumption, cost, and physical form factors.

Model Optimization

Creating AI models that deliver adequate accuracy while meeting edge resource constraints represents a major technical challenge. Full-sized models trained in data centers typically require significant memory and computational resources that simply aren’t available on edge devices. Techniques like model pruning, quantization, knowledge distillation, and neural architecture search become essential but require specialized expertise. The optimization process often involves multiple iterations of training, compression, and validation to maintain accuracy while reducing computational demands.
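
Two of the techniques named above, pruning and quantization, can be illustrated on a plain list of weights. This is a simplified sketch using only the standard library. It shows magnitude pruning followed by symmetric 8-bit quantization with a single scale factor, and it skips the retraining and validation that a real pipeline would need. The weight values are made up.

```python
def prune_by_magnitude(weights, sparsity):
    """Zero out the given fraction of weights with the smallest magnitude."""
    k = int(len(weights) * sparsity)
    smallest = sorted(range(len(weights)), key=lambda i: abs(weights[i]))[:k]
    pruned = list(weights)
    for i in smallest:
        pruned[i] = 0.0
    return pruned


def quantize_int8(weights):
    """Symmetric quantization to integers in [-127, 127] with one scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Map integer codes back to approximate float weights."""
    return [v * scale for v in q]


weights = [0.02, -0.75, 0.31, -0.04, 1.27, -0.6, 0.0, 0.9]  # illustrative
pruned = prune_by_magnitude(weights, sparsity=0.5)
q, scale = quantize_int8(pruned)
restored = dequantize(q, scale)
print(q)
```

Each quantized weight fits in one byte instead of four, and the rounding error per weight is at most half the scale factor. Whether the model's accuracy survives is an empirical question, which is why the compression and validation loop is repeated.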

Development Complexity

Building effective edge AI solutions demands expertise across multiple domains—machine learning, embedded systems, hardware design, and domain-specific knowledge. Teams must understand both the AI algorithms and the target deployment environment, including processor architectures, memory hierarchies, and power management. This interdisciplinary requirement makes edge AI development particularly challenging and often lengthens development cycles as teams navigate unfamiliar technical territory.

Scalability Issues

Managing AI models across thousands or millions of edge devices introduces operational complexity that many organizations underestimate. Model updates must be deployed efficiently without disrupting service, performance must be monitored across diverse environments, and security patches need to reach all devices promptly. The distributed nature of edge deployments makes traditional centralized management approaches inadequate, requiring new tools and processes for effective large-scale operation.
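
One way to update a large fleet without disrupting service is a staged rollout, where a small, stable subset of devices receives a new model first. The sketch below assumes hypothetical device IDs and uses a hash of the ID to assign each device to one of 100 buckets. It illustrates the general pattern only and is not a description of any specific fleet management tool.

```python
import hashlib


def rollout_bucket(device_id: str) -> int:
    """Map a device ID to a stable bucket in the range 0..99."""
    digest = hashlib.sha256(device_id.encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


def should_update(device_id: str, rollout_percent: int) -> bool:
    """True if the device falls inside the current rollout percentage."""
    return rollout_bucket(device_id) < rollout_percent


# Hypothetical fleet of 10,000 devices.
fleet = [f"device-{n:05d}" for n in range(10_000)]

canary = [d for d in fleet if should_update(d, 5)]    # first wave, about 5%
wider = [d for d in fleet if should_update(d, 25)]    # second wave, about 25%
print(len(canary), len(wider))
```

Because the bucket depends only on the device ID, every device in the 5% wave is also in the 25% wave, so raising the percentage never rolls a device back. Monitoring between waves decides whether the rollout continues.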

V. The Development Bottleneck: Why Edge AI Needs Powerful Infrastructure

A common misconception about artificial intelligence at the edge is that because the final deployment uses resource-constrained devices, the development process is similarly lightweight. In reality, the opposite is true—creating efficient, high-performing edge AI models demands more intensive computational resources and sophisticated development workflows than many cloud AI projects.

This training paradox emerges because developing optimized edge models requires extensive experimentation, hyperparameter tuning, and iterative optimization. Each round of model compression, quantization, or architecture search needs retraining and validation to ensure accuracy isn’t compromised. What begins as a straightforward model development project can quickly evolve into hundreds of training cycles as teams search for the optimal balance between performance and efficiency.

The infrastructure requirements for effective edge AI development are substantial. Teams need powerful GPU resources for rapid training iterations, robust testing environments that simulate edge conditions, and sophisticated tooling for model analysis and optimization. Without adequate infrastructure, development cycles stretch from days to months, innovation slows, and time-to-market increases significantly.

This infrastructure challenge is particularly acute because edge AI development often involves exploring multiple model architectures and optimization techniques simultaneously. Teams might need to compare traditional CNNs with more efficient architectures like MobileNets or SqueezeNets, experiment with different quantization approaches, and validate performance across various hardware targets—all requiring substantial computational resources.
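
A quick count shows how fast such a comparison grows. Every number below is a hypothetical placeholder (three seeds per configuration, two GPU-hours per run, eight GPUs), and the hardware target names are invented. The point is the multiplication, not the specific totals.

```python
from itertools import product

architectures = ["baseline_cnn", "mobilenet", "squeezenet"]
quantization = ["fp32", "fp16", "int8"]
pruning_levels = [0.0, 0.25, 0.5, 0.75]
hardware_targets = ["target_a", "target_b", "target_c"]  # placeholder names

SEEDS_PER_CONFIG = 3       # assumed repeats per configuration
GPU_HOURS_PER_RUN = 2.0    # assumed cost of one training or validation run
GPUS_AVAILABLE = 8         # assumed cluster size

configs = list(product(architectures, quantization, pruning_levels, hardware_targets))
total_runs = len(configs) * SEEDS_PER_CONFIG
total_gpu_hours = total_runs * GPU_HOURS_PER_RUN
wall_clock_hours = total_gpu_hours / GPUS_AVAILABLE

print(f"{len(configs)} configurations, {total_runs} runs, "
      f"{total_gpu_hours:.0f} GPU-hours, {wall_clock_hours:.0f} h on {GPUS_AVAILABLE} GPUs")
```

Even this modest grid yields more than a hundred configurations and several hundred runs, which matches the observation that a simple project can turn into hundreds of training cycles.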

VI. WhaleFlux: Accelerating Edge AI Development

Developing sophisticated edge artificial intelligence solutions requires the kind of iterative training and optimization that demands high-performance computing resources typically unavailable to most development teams. The constant cycle of training, compression, validation, and deployment creates computational demands that can overwhelm traditional development infrastructure and significantly delay project timelines.

WhaleFlux provides the essential GPU infrastructure that edge AI teams need to rapidly develop, test, and optimize their models before deployment. By removing computational constraints from the development process, WhaleFlux enables teams to focus on innovation rather than infrastructure management. The platform understands that creating efficient edge models requires extensive experimentation—exactly the kind of workload that benefits from scalable, high-performance computing resources.

So what exactly is WhaleFlux? It’s an intelligent GPU resource management platform specifically optimized for AI development workloads. While the final deployment of edge AI happens on resource-constrained devices, WhaleFlux provides the powerful foundation needed during the development phase. The platform enables faster iteration and better optimization for edge AI models through dedicated high-performance computing, ensuring that teams can explore more approaches, validate more thoroughly, and deliver higher-quality solutions in less time.

VII. How WhaleFlux Supports Edge AI Innovation

WhaleFlux addresses the unique challenges of edge AI development through several key capabilities that accelerate innovation while controlling costs.

Rapid Model Development

Access to clusters of high-performance GPUs including NVIDIA H100, H200, A100, and RTX 4090 enables edge AI teams to run multiple training experiments simultaneously, dramatically reducing iteration time. Instead of waiting days for model training to complete, researchers can test new architectures, hyperparameters, and optimization techniques in hours. This accelerated experimentation cycle is crucial for finding the optimal balance between model accuracy and efficiency that defines successful edge deployments.

Efficient Optimization Workflow

The powerful GPU resources provided by WhaleFlux enable quick cycles of model compression, quantization, and pruning while maintaining accuracy. Teams can experiment with different optimization strategies in parallel, comparing results across multiple approaches to identify the most effective techniques for their specific use case. This comprehensive optimization process—often too computationally expensive for most organizations to undertake thoroughly—becomes practical and efficient with WhaleFlux’s scalable infrastructure.

Simulation and Testing

Before deploying models to physical edge devices, WhaleFlux provides robust infrastructure for simulating edge environments and validating model performance at scale. Teams can test their optimized models against large datasets that represent real-world conditions, identify edge cases, and validate reliability across diverse scenarios. This simulation capability reduces the risk of deployment failures and ensures models perform correctly in their target environments.

Cost-Effective Development

Through monthly rental options, WhaleFlux provides predictable pricing for sustained edge AI development projects. Unlike hourly cloud services that create unpredictable costs during intensive development phases, WhaleFlux’s model aligns with the reality of AI development workflows. Teams can maintain consistent access to the resources they need without worrying about budget overruns, making sophisticated edge AI development accessible to organizations of all sizes.
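
Whether a flat monthly fee or hourly billing is cheaper depends on utilization. The break-even point is the monthly price divided by the hourly rate. The prices below are hypothetical placeholders chosen to make the arithmetic easy. They are not WhaleFlux prices or any cloud provider's prices.

```python
HOURS_PER_MONTH = 30 * 24  # a 30-day month


def break_even_hours(monthly_price_per_gpu, rate_per_gpu_hour):
    """Hours of use per month at which both billing models cost the same."""
    return monthly_price_per_gpu / rate_per_gpu_hour


# Hypothetical prices, for illustration only.
HOURLY_RATE = 2.50       # currency units per GPU-hour
MONTHLY_PRICE = 1200.0   # currency units per GPU per month

threshold = break_even_hours(MONTHLY_PRICE, HOURLY_RATE)
utilization = threshold / HOURS_PER_MONTH

print(f"break-even at {threshold:.0f} h/month ({utilization:.0%} utilization)")
```

With these placeholder numbers, a GPU kept busy more than about two thirds of the month is cheaper at the flat rate, while lighter use favors hourly billing. Substituting real quotes gives the threshold for a given project.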

VIII. Real-World Applications of Edge Artificial Intelligence

The practical impact of edge AI is already visible across numerous industries, delivering tangible benefits through intelligent, localized processing.

Smart Manufacturing

Factories are deploying edge AI for real-time quality control, identifying defects as products move along assembly lines. Predictive maintenance systems analyze equipment vibrations and temperatures to anticipate failures before they cause downtime. These applications require immediate processing: stopping a production line to send video to the cloud for analysis simply isn’t practical. Edge AI enables millisecond response times that transform manufacturing efficiency and quality.
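
As a rough illustration of on-device vibration monitoring, the sketch below flags samples that deviate sharply from the recent past. It uses a rolling z-score, a simple statistical stand-in for the trained models a real predictive maintenance system would use. The window size, threshold, and sample values are assumptions.

```python
from statistics import mean, stdev


def find_anomalies(samples, window, z_threshold):
    """Return indices of samples far from the mean of the preceding window."""
    anomalies = []
    for i in range(window, len(samples)):
        history = samples[i - window:i]
        mu = mean(history)
        sigma = stdev(history)
        if sigma > 0 and abs(samples[i] - mu) / sigma > z_threshold:
            anomalies.append(i)
    return anomalies


# Made-up vibration amplitudes with one spike at index 9.
vibration = [1.00, 1.02, 0.98, 1.01, 0.99, 1.00, 1.03, 0.97, 1.01, 2.50, 1.00, 0.99]

flagged = find_anomalies(vibration, window=8, z_threshold=3.0)
print(flagged)
```

The check needs only the last few samples and a handful of arithmetic operations, so it can run on the device itself and raise an alert without sending the raw signal anywhere.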

Autonomous Vehicles

Self-driving cars represent one of the most demanding edge AI applications, requiring processing of multiple high-resolution sensor streams in real-time. Object detection, path planning, and collision avoidance must happen instantaneously, without reliance on cloud connectivity. The computational demands of these systems are enormous, yet they must operate within strict power and thermal constraints—exactly the challenge that edge AI hardware and optimization techniques are designed to address.

Healthcare Devices

Medical applications benefit tremendously from edge AI’s combination of real-time processing and privacy preservation. Portable ultrasound devices can provide immediate analysis during emergency procedures, continuous glucose monitors can adjust insulin delivery automatically, and wearable ECG patches can detect arrhythmias as they occur. These applications demonstrate how edge AI saves lives by providing instant insights while protecting sensitive patient data.

Retail and Surveillance

Smart retail systems use edge AI to analyze customer behavior while preserving privacy, security systems can identify threats without transmitting sensitive footage, and inventory management systems can track stock levels in real-time. These applications showcase edge AI’s ability to deliver business intelligence while addressing privacy concerns and reducing operational costs through localized processing.

IX. Conclusion: Building the Future of Intelligent Edge Systems

Edge artificial intelligence is fundamentally transforming how we deploy and benefit from AI, enabling real-time, localized processing that unlocks new capabilities across industries. From manufacturing and healthcare to transportation and retail, intelligent edge systems are delivering immediate insights, enhanced privacy, and reliable operation without constant connectivity. This paradigm shift represents one of the most significant trends in modern computing, bringing AI capabilities to environments where cloud-dependent approaches simply cannot function.

However, developing effective edge AI solutions requires powerful infrastructure for training and optimization. The paradox of edge AI development—that creating efficient models for resource-constrained devices demands substantial computational resources—means that teams need access to high-performance computing to innovate effectively. Without adequate infrastructure, development cycles stretch unacceptably, optimization becomes superficial, and time-to-market increases dramatically.

WhaleFlux provides the essential GPU resources that edge AI teams need to innovate faster and deploy with confidence. By removing computational constraints from the development process, WhaleFlux enables teams to focus on what matters most: creating sophisticated AI solutions that deliver real value in edge environments. The platform’s combination of high-performance hardware, intelligent resource management, and predictable pricing makes advanced edge AI development accessible to organizations of all sizes, democratizing capabilities that were previously available only to well-resourced technology giants.

As edge artificial intelligence continues to evolve and expand into new domains, having the right development infrastructure will increasingly determine which organizations lead in innovation and which struggle to keep pace. With solutions like WhaleFlux providing the computational foundation for edge AI development, teams can build the intelligent systems that will define our future—systems that see, understand, and respond to the world around them in real-time, wherever they’re needed most.

New Frontiers in AI: Scaling Up with the Latest AI Infrastructure Advances

Introduction

Artificial Intelligence (AI) infrastructure is the confluence of layered technologies that enable machine learning algorithms to be trained, run, and implemented. It is an amalgamation of cutting-edge computational hardware, expansive data storage capabilities, and nuanced networking that acts as the nervous system for AI applications. These combine to form an ecosystem capable of handling the intensive workloads synonymous with AI, facilitating the rapid processing and analysis of vast data sets in real-time.

Core Components of Modern AI Infrastructure

Compute power in AI infrastructure is increasingly reliant on GPUs for parallel processing capabilities and TPUs that offer specialized processing for neural network machine learning. Next-generation storage solutions like NVMe (Non-Volatile Memory Express) SSDs allow for faster data access speeds, critical for feeding data-hungry AI models. Networking technologies, including high-speed fiber connections and 5G, are integral for the low-latency transfer of large data sets and real-time analytics.

A harmonious orchestration between these components is crucial, as it allows for the seamless integration of AI models into various applications, from predictive analytics to autonomous vehicles, ensuring that latency does not hinder performance.

Trends and Innovations in AI Infrastructure

Hardware Innovations: GPUs and TPUs Leading the Charge

In the hardware domain, innovation is spearheaded by GPUs and TPUs, which are rapidly evolving to address the complex computation needs of AI. NVIDIA’s latest series of GPUs introduces significant improvements in parallel processing, making them ideal for training deep neural networks. TPUs, designed by Google, are tailored for the high-volume, low-latency processing required by large-scale AI applications. These TPUs are increasingly becoming part of the cloud AI infrastructure, granting businesses access to powerful AI compute resources on demand.

Software Frameworks and APIs: The Tools for Democratizing AI

On the software front, frameworks like TensorFlow and PyTorch offer open-source libraries for machine learning that drastically simplify the development of AI models. In combination with robust APIs, such as NVIDIA’s CUDA or Intel’s oneAPI, developers are empowered to customize their AI solutions and optimize performance across various hardware architectures. This democratization of AI development tools is drastically lowering the barrier to entry for AI innovation and enabling a broader range of scientists, engineers, and entrepreneurs to contribute to the AI revolution.

AI Deployment Trends: Cloud Services, Edge AI, and Decentralization

AI infrastructure is undergoing a significant transformation with the rise of cloud AI services, edge computing, and decentralized architectures. Cloud AI services, like AWS’s SageMaker, Azure AI, and Google AI Platform, simplify the process of deploying AI solutions by providing scalable compute resources and managed services. Edge AI brings intelligence processing closer to the source of data generation, enabling real-time decision-making and reducing reliance on centralized data centers. Decentralization further aids in improving the resilience and privacy of AI systems by distributing processing across multiple nodes.

Challenges and Considerations

Scaling AI infrastructure faces the challenge of maintaining the delicate balance between soaring computational demands and the constraints of current technology. This includes addressing bottlenecks in data throughput, ensuring cybersecurity in the face of sophisticated AI-oriented threats, and being aware of the carbon footprint associated with running large-scale AI operations.

Innovations like quantum computing bring future prospects for AI scalability, while developments in homomorphic encryption present potential breakthroughs in data security. Sustainable AI is an emerging concept that focuses on optimizing algorithms to reduce electricity consumption and promote environmentally friendly AI operations.

Case Studies

An example of a company at the forefront of AI infrastructure is NVIDIA. Their AI platform houses powerful GPUs in combination with deep learning software, enabling businesses to scale up their AI applications efficiently. In contrast, IBM’s AI infrastructure focuses on building holistic, integrated AI solutions, with hardware like the Power Systems AC922 laying the groundwork for robust, enterprise-level AI workflows.

Startups, too, are making waves. EmergingAI’s LLM serving software stands to revolutionize AI operations by offering AI cluster management, in-depth observability, and real-time scheduling.

The Role of Data Centers in Powering AI’s Future

Introduction

Data centers, the beating heart of the digital world, now provide the unprecedented infrastructure that AI’s voracious appetite for data and computational power demands. Surpassing traditional setups, they have become the silent engines powering the AI revolution.

Data centers have transcended beyond their initial roles as mere repositories of information. They have evolved into dynamic and incredibly sophisticated centers of computation, powering not just enterprise-level IT operations but also the complex algorithms that AI systems demand. These facilities, with their racks of humming servers and sprawling webs of networking cables, are critical in teaching machines how to learn, analyze, and act.

This blog casts a spotlight on the symbiotic relationship between AI and data centers, exploring how this partnership is critical not only to the present state of AI but also to its future horizons. The journey will walk through history, the demanding infrastructure requirements, the operational efficiencies, the pressing energy concerns, and real-world case studies that underline the synergy of data centers and AI.

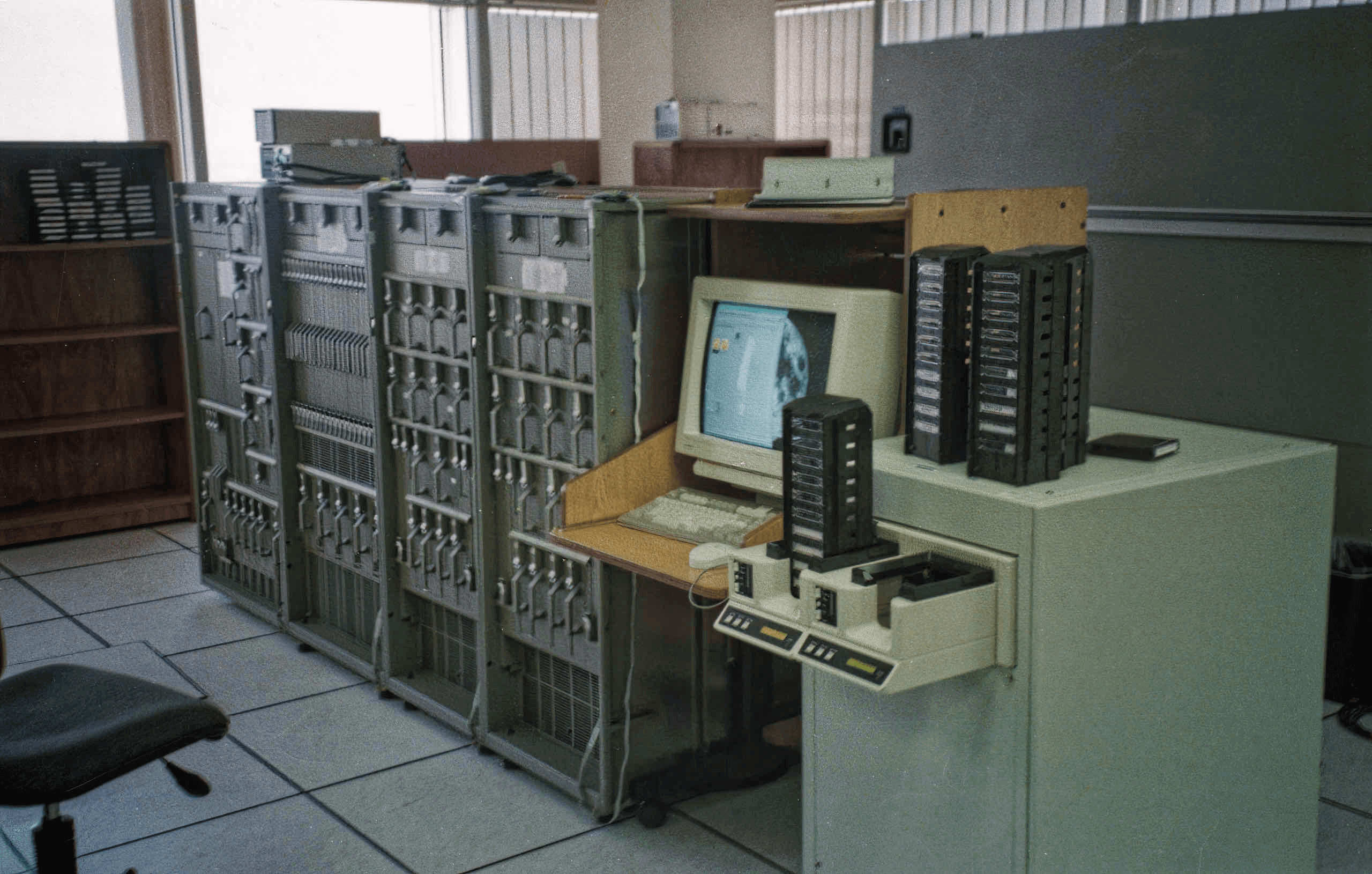

Historical Context of Data Centers and Computing

Before we can fully appreciate the role of data centers in the AI era, we must look back at their genesis and evolution. Initially, they were conceived as large rooms dedicated to housing the mainframe computers of the 20th century. These were substantial machines that required significant space and a controlled environment. Over time, as technology advanced, so too did their design and operation. They became repositories for servers that stored and managed the burgeoning volumes of data the internet age brought forth.

The advent of cloud computing further redefined data centers, transforming them from static storage facilities to dynamic, networked systems essential for delivering a range of services over the internet. They adapted to support a multitude of applications, web hosting, and data analytics, enabling businesses and consumers to leverage powerful computing resources remotely.

The progression from mere data storage to complex computing hubs is inextricably linked with advances in computer technology. As processors shrank in size but expanded in capability, the concentration of computing power within data centers increased. The efficiencies gained in processing, storage, and networking paved the way for today’s highly interconnected and cloud-dependent world. With these advances, they have become agile support systems for a variety of computing needs, including the computationally intensive tasks of AI.

As the digital revolution unfolded, data centers evolved to become not just keepers of information but also sophisticated nerve centers of computational activity. They are now poised at the frontier of the AI revolution, offering the infrastructure that is indispensable to machine learning’s progression.

This look back lays a foundation for understanding the critical transformation of data centers from their origin to the present day, a transformation that has mirrored the trajectory of computing itself. The next sections delve into the synergistic relationship between AI and these evolved data centers, one that is vital for powering AI’s future.

The Symbiosis between AI and Data Centers

Catalyzing AI’s Operating Power

The growth of artificial intelligence has been inextricably linked to the evolution of data center capabilities. Powering AI requires more than just strong algorithms; it necessitates an infrastructure capable of handling vast amounts of data and lightning-fast computations. Within the controlled environments of modern data centers, AI has found the fertile ground needed for its complex workloads, all thanks to high-performance computing systems that are the linchpin of AI operations.

AI’s Brain and Brawn

In turn, the advancement and widespread implementation of artificial intelligence have profoundly influenced the architectural design and operational procedures of data centers. Through robust analytics and pattern recognition, AI bolsters the efficiency and reliability of data center operations, enhancing everything from workload distribution to energy use. The alignment of AI’s growth with these technological meccas ensures that as AI models become more sophisticated, data center designs continue to adapt and advance in response.

The Reciprocal Evolution

The data center and AI advancement cycle is reciprocal—data centers provide AI the environment it needs to flourish, while AI continually redefines what is required of that environment. As we witness enterprises like Google apply AI to optimize data center energy efficiency or Amazon Web Services (AWS) offer accessible machine learning services through its vast cloud infrastructure, this intertwined progression becomes increasingly evident. AI and data centers are not just growing side by side; they are co-evolving, united by a vision of a smarter future.

Refining with AI’s Own Tools

Perhaps one of the most intriguing aspects of this symbiosis is that AI has begun to refine the very data centers it relies on. Machine learning algorithms now predictively manage infrastructure load and maintenance, turning data centers into self-optimizing entities. Such applications exemplify the dynamic potential of AI to revitalize the operations within data centers, ensuring they remain at the forefront of technological innovation, ready to power AI’s relentless advancement.

There are several AI tools and technologies that have been instrumental in refining data center operations. Here are some examples:

Google’s DeepMind AI for Data Center Cooling

Google has applied its DeepMind machine learning algorithms to the problem of energy consumption in data centers. Their system uses historical data to predict future cooling requirements and dynamically adjusts cooling systems to improve energy efficiency. In practice, this AI-powered system has achieved a reduction in the amount of energy used for cooling by up to 40 percent, according to Google.

WhaleFlux AI for Computing Power Management

The WhaleFlux AI computing power management platform is a multi-tenant platform solution for computing power cluster management, designed to optimize and automate the allocation, scheduling and management of computing resources.

The platform enables private cloud management, multi-GPU cloud management and GPU cluster deep observation.

NVIDIA’s AI Platform for Predictive Maintenance

NVIDIA has developed AI platforms that integrate deep learning and predictive analytics to perform predictive maintenance within data centers. These tools analyze operational data in real-time to predict hardware failures before they happen, significantly reducing downtime and maintenance costs.

IBM Watson for Data Center Management

IBM’s Watson uses AI to proactively manage IT infrastructure. By analyzing data from various sources within the data center, Watson can identify trends, anticipate outages, and optimize workloads across the data center environment, thereby enhancing operational efficiency and resilience.

Infrastructure: The Backbone of AI Operations

A New Class of Hardware

Data centers have become the proving grounds for AI’s most demanding workloads, reshaping the landscape of computational hardware. Cutting-edge GPUs are now the mainstay in these environments, accelerating complex mathematical computations at the core of machine learning tasks. With dedicated AI processors, such as Google’s TPUs, data centers are pushing beyond traditional computation limits, vastly improving the efficiency and speed of AI training and inferencing phases.

High-Speed Networking: The Connective Tissue

None of this computational power could be fully harnessed without advancements in networking technology. High-speed networks within data centers facilitate the rapid transmission of data, crucial for collaborative AI processing. Technological marvels like NVIDIA’s Mellanox networking solutions exemplify the significant leaps made in ensuring data centers can operate at the speed AI demands.

Advances in Storage: The Data Storehouses

AI’s insatiable demand for data necessitates not merely large storage capacities but also swift data retrieval systems. Innovations in solid-state drive technology and software-defined storage have transformed data centers into highly efficient data storehouses, capable of feeding AI models with the necessary data volumes at unprecedented speeds.

Conclusion of Data Centers

The intertwining of AI and data centers marks a pivotal shift in our digital epoch. Data centers, the once silent sentinels of data and servers, have evolved into the lifeblood of AI’s advancement, offering the computational might and data processing prowess necessary for AI to thrive. The transformative influence of AI, in turn, is streamlining these hubs of technology into smarter, more efficient operations.