Introduction: The Dawn of the “AI-Native” Computer Science Era

For over half a century, Computer Science (CS) has been defined by the art of writing explicit instructions for machines. We built compilers, optimized databases, and designed intricate algorithms to solve human problems. However, the meteoric rise of Generative AI has sent a shockwave through the industry. Suddenly, the “black box” of neural networks is performing tasks—coding, debugging, and architecting—that were once the exclusive domain of human engineers.

This shift has ignited a polarizing debate: Are AI and computer science partners, or is AI a “replacement engine” for the very field that created it? If you browse any tech forum today, you will encounter the same anxious questions: Will computer science be replaced by AI? Is a CS degree still worth it?

The truth is more nuanced than a simple “yes” or “no.” We are witnessing an evolution. We are moving away from the era of “Hand-Coded Logic” and into the era of “AI Orchestration.” In this new landscape, the traditional boundaries of software engineering are blurring, giving way to a more integrated stack where hardware, models, and autonomous agents work in unison. Companies like WhaleFlux are at the forefront of this transition, providing the infrastructure—from Elastic AI Compute to AI Agent Platforms—that allows computer scientists to stop wrestling with raw code and start building intelligent systems.

In this article, we will explore why AI computer science is the most significant career pivot in history, why the focus has shifted from “0-to-1 pre-training” to “strategic fine-tuning,” and how platforms like WhaleFlux are ensuring that the human computer scientist remains the most critical component of the tech stack.

1. The Symbiosis: Understanding AI in Computer Science

To understand the future, we must first define the relationship between AI and computer science. AI is not a separate entity; it is a specialized branch of CS that has grown so powerful it is now “recursive”: it is being used to improve the very discipline from which it emerged.

Traditionally, computer science was about deterministic systems (Input A always yields Output B). AI computer science introduced probabilistic systems (Input A yields the most likely Output B). This transition hasn’t made CS obsolete; it has simply added a new layer of complexity.
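To make the contrast concrete, here is a minimal sketch in Python. The function names and the next-word probabilities are invented for illustration and do not come from any real model.

```python
import random


def double(x: int) -> int:
    """Deterministic: the same input always yields the same output."""
    return x * 2


def most_likely(distribution: dict) -> str:
    """Return the single most probable output."""
    return max(distribution, key=distribution.get)


def sample(distribution: dict, rng: random.Random) -> str:
    """Probabilistic: draw an output in proportion to its probability."""
    outputs = list(distribution)
    weights = [distribution[o] for o in outputs]
    return rng.choices(outputs, weights=weights, k=1)[0]


# Hypothetical next-word probabilities for the prompt "Hello ..."
next_word = {"world": 0.7, "there": 0.2, "friend": 0.1}

print(double(21))                          # always 42
print(most_likely(next_word))              # always "world"
print(sample(next_word, random.Random()))  # usually "world", but not always
```

The first function can be verified with a single assertion. The last can only be described statistically, which is the extra layer of complexity the modern computer scientist has to reason about.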

The Shift from Coding to Orchestration

In the past, a software engineer might spend the bulk of their time (a common rule of thumb says around 80%) writing boilerplate code and only the remainder on system design. Today, AI handles much of the boilerplate. This allows the modern computer scientist to focus on:

- System Architecture: How do different AI models interact?

- Data Lineage: Is the data used for training clean and ethical?

- Compute Efficiency: How do we run these models without burning through a million-dollar budget?

This is where the concept of a Unified AI Platform becomes essential. WhaleFlux recognizes that the modern CS professional needs more than just a code editor. They need a dashboard that manages the entire lifecycle of an AI application—from the raw NVIDIA GPU compute to the final Autonomous Agent deployment.

2. The Strategic Shift: Why Fine-Tuning is the Real Winner

A common misconception when discussing AI in computer science is that every innovation requires building a model from scratch (0-to-1 pre-training). While companies like OpenAI or Google focus on pre-training foundation models, the rest of the world is realizing that the real value lies in fine-tuning.

Why Not 0-to-1 Pre-training?

Pre-training a foundation model requires tens of thousands of GPUs, months of time, and billions of dollars. It is a feat of “Brute Force CS.” For 99% of businesses and developers, this is neither practical nor necessary.

The Power of Fine-Tuning on WhaleFlux

Fine-tuning is the process of taking a pre-trained model (like Llama 3 or Mistral) and training it on a smaller, domain-specific dataset to make it an expert in a particular field. This is the “Best AI for computer science” strategy today.
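The core idea can be shown with a deliberately tiny toy. The sketch below is not an LLM and not any platform's actual workflow: it assumes a one-variable linear model whose “pre-trained” weights are close to, but not right for, a small domain dataset, and then continues gradient descent from those weights. All names and numbers are illustrative.

```python
def mse(w, b, data):
    """Mean squared error of the model y = w * x + b on the data."""
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)


def fine_tune(w, b, data, lr=0.05, steps=200):
    """Continue gradient descent from existing weights on a small dataset."""
    n = len(data)
    for _ in range(steps):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


# Stand-in for weights learned during general-purpose pre-training.
pretrained_w, pretrained_b = 2.0, 0.0

# Small domain-specific dataset that follows y = 2.5 * x + 1.
domain_data = [(0.0, 1.0), (1.0, 3.5), (2.0, 6.0), (3.0, 8.5)]

before = mse(pretrained_w, pretrained_b, domain_data)
tuned_w, tuned_b = fine_tune(pretrained_w, pretrained_b, domain_data)
after = mse(tuned_w, tuned_b, domain_data)

print(f"error before fine-tuning: {before:.3f}")
print(f"error after fine-tuning:  {after:.6f}")
```

Because training starts from weights that are already nearly right, a few hundred cheap steps on four data points are enough. Real fine-tuning works on billions of parameters rather than two, but the economics follow the same logic.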

WhaleFlux specifically focuses on this middle-to-end stage of the AI lifecycle. Instead of asking you to build a brain from scratch, WhaleFlux provides:

- Elastic AI Compute: High-performance GPUs (H100, A100, etc.) optimized for the intense but shorter bursts of activity required for fine-tuning.

- AI Models & Data Management: A streamlined environment to upload your proprietary data and refine existing models.

- Cost Efficiency: By focusing on fine-tuning rather than pre-training, companies can achieve “SOTA” (State of the Art) results at 1/100th of the cost.
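The cost argument comes down to simple arithmetic: GPUs multiplied by hours multiplied by an hourly price. The sketch below uses placeholder inputs chosen purely for illustration. They are not real quotes, benchmarks, or WhaleFlux pricing, and the resulting ratio depends entirely on what you plug in.

```python
def gpu_cost(num_gpus: int, hours: float, price_per_gpu_hour: float) -> float:
    """Total job cost = number of GPUs x wall-clock hours x price per GPU-hour."""
    return num_gpus * hours * price_per_gpu_hour


# Placeholder inputs, for illustration only.
PRICE = 2.0  # hypothetical dollars per GPU-hour

pretrain_cost = gpu_cost(num_gpus=10_000, hours=2_000, price_per_gpu_hour=PRICE)
finetune_cost = gpu_cost(num_gpus=8, hours=50, price_per_gpu_hour=PRICE)
ratio = finetune_cost / pretrain_cost

print(f"pre-training: ${pretrain_cost:,.0f}")
print(f"fine-tuning:  ${finetune_cost:,.0f}")
print(f"fine-tuning costs {ratio:.6f} of the pre-training run")
```

Swapping in your own cluster size, job duration, and hourly rate gives a quick sanity check before committing budget to either approach.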

3. Will AI Take Over Computer Science Jobs?

The fear that AI will take over computer science jobs is understandable but largely misplaced. History shows that when a tool makes a task easier, the demand for that task doesn’t vanish—it explodes.

The Jevons Paradox in Software

When it becomes easier and cheaper to build software (thanks to AI), companies don’t stop building software; they decide to build ten times more of it. We are entering an era where every small business will want its own custom AI agent, every internal tool will need a natural language interface, and every hardware device will need embedded intelligence.

AI won’t replace the computer scientist; it will replace the manual coder. The jobs that are “at risk” are those that involve repetitive, low-level tasks. The jobs that are “exploding” are those that involve:

- AI Infrastructure Management: Managing GPU clusters and ensuring AI Observability.

- Prompt Engineering & Model Steering: Guiding AI to produce accurate, safe results.

- Agentic Workflow Design: Using platforms like the WhaleFlux AI Agent Platform to create autonomous systems that can actually do work, not just talk about it.

4. Redefining the Tech Stack: Compute, Models, and Agents

The traditional “Full Stack Developer” is being replaced by the “AI Stack Orchestrator.” To stay relevant, CS professionals must master a new four-layer stack, which is exactly how WhaleFlux is structured:

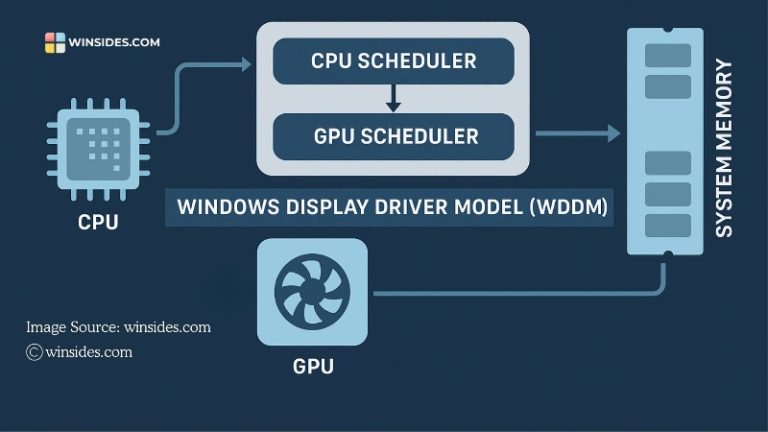

Layer 1: AI Compute & Observability

Hardware is the new bottleneck. You cannot do AI computer science without a deep understanding of GPU utilization. WhaleFlux’s AI Observability tools are game-changers here. They provide full-stack visibility, allowing engineers to:

- Reduce hardware failure rates by 98%.

- Slash compute costs by up to 70% through better resource allocation.

- Monitor GPU health in real-time to ensure fine-tuning jobs don’t crash halfway through.
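As a rough illustration of what a GPU health check does, here is a minimal sketch. The `GpuReading` fields, the threshold values, and the sample readings are all assumptions made for this example; they are not WhaleFlux's API, and a real system would pull readings from the driver or a monitoring agent.

```python
from dataclasses import dataclass


@dataclass
class GpuReading:
    gpu_id: str
    utilization_pct: float
    temperature_c: float
    memory_used_pct: float


def find_unhealthy(readings, max_temp_c=85.0, max_memory_pct=95.0):
    """Return (gpu_id, reasons) for every reading that breaches a threshold."""
    flagged = []
    for r in readings:
        reasons = []
        if r.temperature_c > max_temp_c:
            reasons.append("overheating")
        if r.memory_used_pct > max_memory_pct:
            reasons.append("memory pressure")
        if reasons:
            flagged.append((r.gpu_id, reasons))
    return flagged


def average_utilization(readings):
    """Mean utilization across the cluster; low values suggest wasted spend."""
    return sum(r.utilization_pct for r in readings) / len(readings)


# Hypothetical snapshot of a three-GPU node.
snapshot = [
    GpuReading("gpu-0", utilization_pct=90.0, temperature_c=70.0, memory_used_pct=80.0),
    GpuReading("gpu-1", utilization_pct=30.0, temperature_c=91.0, memory_used_pct=60.0),
    GpuReading("gpu-2", utilization_pct=60.0, temperature_c=75.0, memory_used_pct=97.0),
]

for gpu_id, reasons in find_unhealthy(snapshot):
    print(gpu_id, "->", ", ".join(reasons))
print(f"average utilization: {average_utilization(snapshot):.1f}%")
```

Flagging a hot or memory-starved GPU before a long fine-tuning job starts is far cheaper than discovering it when the job crashes halfway through.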

Layer 2: AI Models & Data

This layer involves the selection and “polishing” of models. As mentioned, WhaleFlux facilitates efficient fine-tuning, allowing CS professionals to turn generic AI into specialized tools.

Layer 3: Knowledge & Reasoning

This is where RAG (Retrieval-Augmented Generation) and vector databases come in. It’s about giving the AI a “memory” and a “library” of your specific business data.
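The retrieval step behind RAG can be sketched in a few lines. This toy uses word counts as a stand-in “embedding” and a plain Python list as the “vector database”; production systems use a learned embedding model and a dedicated vector store. The documents and function names are made up for illustration.

```python
import math
from collections import Counter


def embed(text):
    """Toy embedding: bag-of-words counts (real systems use a learned model)."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query, documents, k=1):
    """Return the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]


def build_prompt(query, documents):
    """Augment the question with retrieved context before sending it to a model."""
    context = "\n".join(retrieve(query, documents))
    return f"Context:\n{context}\n\nQuestion: {query}"


# Hypothetical business knowledge base.
docs = [
    "refunds are processed within five business days",
    "our office is closed on public holidays",
    "gpu clusters are patched every sunday night",
]

print(build_prompt("when are refunds processed", docs))
```

The model never needs to have seen the refund policy during training. The “library” supplies it at question time, which is why this layer can be updated without retraining anything.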

Layer 4: AI Agent Platform

This is the pinnacle of the new CS. A “Model” is just a brain in a jar; an “Agent” is a brain with hands and eyes. WhaleFlux’s AI Agent Platform allows developers to build autonomous entities that can observe a system, reason about a problem, and take action.
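The observe, reason, act cycle can be sketched as a simple loop. In the toy below, the “system” is a simulated job queue and the reasoning step is a fixed rule; a real agent would typically call a model at that point. None of this reflects the WhaleFlux AI Agent Platform's actual interface, and every name and number is hypothetical.

```python
JOBS_PER_WORKER = 10  # hypothetical throughput of one worker per tick


def observe(system):
    """Read the current state of the simulated system."""
    return {"queue_length": system["queue_length"], "workers": system["workers"]}


def reason(observation):
    """Pick an action. A real agent would usually consult a model here."""
    capacity = observation["workers"] * JOBS_PER_WORKER
    return "scale_up" if observation["queue_length"] > capacity else "wait"


def act(system, action):
    """Apply the chosen action, then let the workers process one tick of jobs."""
    if action == "scale_up":
        system["workers"] += 1
    processed = system["workers"] * JOBS_PER_WORKER
    system["queue_length"] = max(0, system["queue_length"] - processed)


def run_agent(system, max_steps=10):
    """Loop observe -> reason -> act until the queue is empty or steps run out."""
    log = []
    for _ in range(max_steps):
        if system["queue_length"] == 0:
            break
        action = reason(observe(system))
        act(system, action)
        log.append(action)
    return log


system = {"queue_length": 45, "workers": 1}
actions = run_agent(system)
print(actions)  # ['scale_up', 'scale_up']
print(system)   # {'queue_length': 0, 'workers': 3}
```

The `max_steps` cap is a deliberate design choice: an autonomous loop should always have a hard stop so that a bad reasoning step cannot run forever.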

5. What is the Best AI for Computer Science Success?

If you are a student or a professional asking what the best AI for computer science is, the answer isn’t a specific chatbot. It is a Platform Mindset.

To succeed in 2026 and beyond, you need a platform that unifies these disparate elements. If you spend all your time configuring Linux drivers for GPUs, you aren’t being a computer scientist; you’re being a mechanic. WhaleFlux acts as the “Operating System for AI,” handling the “mechanic” work (infrastructure, observability, compute scaling) so you can focus on the “architect” work (fine-tuning and agent design).

Conclusion: Embracing the AI-Powered Future

So, will computer science be replaced by AI?

The answer is a resounding no. Computer science is not dying; it is graduating. We are moving from the “Digital Age” to the “Intelligence Age.” In this new era, the most successful computer scientists will be those who view AI as their most powerful collaborator.

The future of the field belongs to those who can bridge the gap between raw power and intelligent action. Whether it is through the Elastic AI Compute that powers a fine-tuning job, the AI Observability that keeps a global cluster running, or the AI Agent Platform that automates a complex business process, the tools are now here to amplify human creativity.

WhaleFlux is more than just a service provider; it is the infrastructure for this evolution. By focusing on the critical stages of fine-tuning and agent orchestration, WhaleFlux ensures that enterprises and developers can harness the power of AI without the prohibitive costs or complexity of the past.

The “Computer Scientist” of tomorrow won’t just write code; they will lead a digital workforce of AI agents. The question isn’t whether you will be replaced—it’s what you will build now that the limits have been removed.

Frequently Asked Questions (FAQ)

1. Does WhaleFlux support 0-to-1 model pre-training?

No. WhaleFlux is specialized for the fine-tuning and inference stages of the AI lifecycle. We provide the elastic compute and model management tools necessary to adapt existing foundation models to specific data and use cases, which is the most cost-effective path for 99% of businesses.

2. Will AI take over computer science jobs in the next few years?

AI will automate many routine programming tasks, but it will not take over the role of a computer scientist. The field is shifting toward AI Orchestration. Professionals who master tools like WhaleFlux to manage GPU resources and deploy AI agents will find themselves in higher demand than ever.

3. What is “AI Observability,” and why is it important for CS?

In AI computer science, hardware is the most expensive asset. AI Observability (like that offered by WhaleFlux) provides deep monitoring of GPU clusters. It can reduce hardware failure rates by 98% and cut compute costs by up to 70%, making it a critical skill for modern infrastructure engineers.

4. Why should I choose fine-tuning over building a model from scratch?

Building a model from scratch (pre-training) is extremely expensive and time-consuming. Fine-tuning allows you to leverage the “intelligence” of models like Llama 3 while customizing them with your own data. WhaleFlux makes this process seamless and affordable.

5. How does the WhaleFlux AI Agent Platform differ from a standard chatbot?

A chatbot simply answers questions. An AI Agent on the WhaleFlux platform can “Observe, Reason, and Act.” It can be integrated into your business workflows to autonomously perform tasks, making it a functional tool rather than just a conversational interface.