Introduction: The Quest for the Best NVIDIA GPU

“What is the best NVIDIA GPU for our deep learning projects?” This question echoes through conference rooms and Slack channels in AI companies worldwide. Teams spend countless hours analyzing benchmarks, comparing specifications, and debating the merits of different hardware configurations. However, the truth is that the “best” GPU isn’t just about raw specs or peak performance numbers. It’s about finding the right tool for your specific workload and, more importantly, implementing systems to manage that tool effectively to maximize your return on investment. Selecting your hardware is only half the battle—the real challenge lies in optimizing its utilization to justify the substantial investment these powerful processors require.

The AI industry’s rapid evolution has created an incredibly diverse hardware landscape. What constitutes the “best” NVIDIA GPU for a startup fine-tuning smaller models differs dramatically from what a research institution training massive foundational models requires. This guide will help you navigate these complex decisions while introducing a critical component often overlooked in hardware selection: intelligent resource management that ensures whatever hardware you choose delivers maximum value.

Contenders for the Crown: Breaking Down the Best NVIDIA GPUs

The NVIDIA ecosystem offers several standout performers, each excelling in specific scenarios:

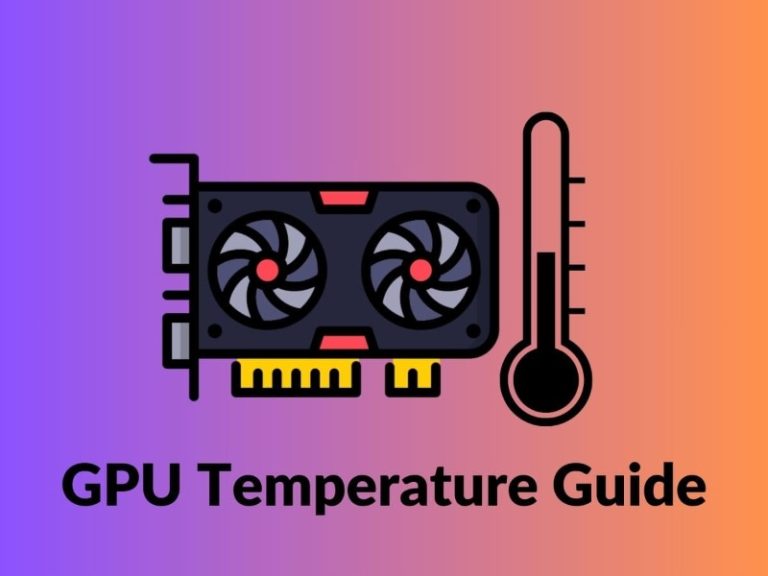

The NVIDIA H100 represents the current performance king for large-scale training and high-performance computing. With its Transformer Engine and Tensor Cores optimized for AI workloads, the H100 delivers leading performance for training the largest models. For organizations pushing the boundaries of what’s possible in AI, the H100 is often the default choice despite its premium price point.

The NVIDIA H200 stands as the memory powerhouse for massive model inference. Building on the H100’s architecture, the H200 raises on-board high-bandwidth memory from 80GB to 141GB using HBM3e technology. That capacity makes it ideal for inference workloads with enormous models that won’t fit in other GPUs’ memory. For companies deploying models with billions of parameters, the H200 relieves memory constraints that previously hampered performance.

The NVIDIA A100 serves as the versatile workhorse for general AI workloads. While older than the H100 and H200, the A100 remains highly relevant for most AI tasks. Its 40GB and 80GB memory options provide substantial capacity for both training and inference, while its mature software ecosystem ensures stability and compatibility. For many organizations, the A100 represents the sweet spot of performance, availability, and cost-effectiveness.

The NVIDIA RTX 4090 emerges as the cost-effective developer champion for prototyping and mid-scale tasks. While technically a consumer-grade card, the 4090’s impressive 24GB of memory and strong performance make it surprisingly capable for many AI workloads. For research teams, startups, and developers, the 4090 offers exceptional value for experimentation, model development, and smaller-scale production workloads.

The key takeaway is clear: there is no single “best” GPU. The optimal choice depends entirely on your specific use case, budget constraints, and scale of operations. An organization training massive foundational models will prioritize different characteristics than a company fine-tuning existing models for specific applications.
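As a rough illustration of how memory capacity narrows the choice, the sketch below estimates whether a model's weights fit on each card. The capacities are the ones quoted in this article, except the H100's 80GB, which is the commonly listed figure and an assumption here. The function names and the 1.2x overhead factor (a stand-in for activations and cache) are hypothetical, and real requirements vary with batch size, precision, and framework.

```python
# Memory capacities in GB. The H100 value is an assumption (commonly listed
# figure); the others are the capacities quoted in the article.
GPU_MEMORY_GB = {
    "RTX 4090": 24,
    "A100 40GB": 40,
    "A100 80GB": 80,
    "H100": 80,
    "H200": 141,
}


def weights_gb(params_billions: float, bytes_per_param: int = 2) -> float:
    """Approximate size of the model weights alone, in GB (1 GB = 1e9 bytes).

    bytes_per_param=2 corresponds to 16-bit weights (FP16/BF16).
    """
    return params_billions * bytes_per_param


def gpus_that_fit(params_billions: float,
                  bytes_per_param: int = 2,
                  overhead: float = 1.2) -> list[str]:
    """Return the cards whose memory covers weights times a rough overhead.

    The overhead factor is a hypothetical placeholder, not a measured value.
    """
    needed = weights_gb(params_billions, bytes_per_param) * overhead
    return [name for name, cap in GPU_MEMORY_GB.items() if cap >= needed]


if __name__ == "__main__":
    for size in (7, 13, 50, 70):
        print(f"{size}B params (16-bit): {gpus_that_fit(size)}")
```

Under these assumptions a 7B-parameter model in 16-bit precision fits on every card listed, a 50B model fits only on the H200, and a 70B model fits on none of them as a single GPU, which is where multi-GPU sharding or lower-precision weights come in.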

Beyond the Hardware: The True Cost of Owning the “Best” NVIDIA GPU

Purchasing powerful hardware is only the beginning of your AI infrastructure journey. The hidden costs of poor utilization, scheduling overhead, and management complexity often undermine even the most carefully selected hardware investments. Many organizations discover that their expensive GPU clusters sit idle 60-70% of the time due to inefficient job scheduling, resource allocation problems, and operational overhead.

The resource management bottleneck represents the critical differentiator for AI enterprises today. It’s not just about owning powerful GPUs—it’s about extracting maximum value from them. Teams often find themselves spending more time managing their infrastructure than developing AI models, with DevOps engineers constantly fighting fires instead of optimizing performance.

This is where simply owning the best NVIDIA GPU is not enough. Intelligent management platforms like WhaleFlux become critical to unlocking true value from your hardware investments. The right management layer can transform your GPU cluster from a cost center into a competitive advantage, ensuring that whatever hardware you choose operates at peak efficiency.

Introducing WhaleFlux: The Intelligence Behind Your GPU Power

So what exactly is WhaleFlux? It’s an intelligent GPU resource management layer that sits atop your hardware infrastructure, whether on-premises or in the cloud. WhaleFlux is specifically designed for AI enterprises that need to maximize the value of their GPU investments while minimizing operational overhead.

The core value proposition of WhaleFlux is simple but powerful: whichever NVIDIA GPU you choose (H100, H200, A100, or RTX 4090), it operates at peak efficiency, dramatically improving utilization rates and reducing costs. By implementing sophisticated scheduling algorithms and optimization techniques, WhaleFlux typically helps organizations achieve 85-95% utilization rates compared to the industry average of 30-40%.
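To see why utilization drives cost, consider the arithmetic behind the utilization figures above. The sketch below is illustrative only: the $2.00 hourly price is a hypothetical number, the function names are made up for this example, and the model assumes an idle GPU-hour costs the same as a busy one.

```python
def effective_cost_per_useful_hour(hourly_cost: float, utilization: float) -> float:
    """Cost of one hour of actual work when the GPU is busy only part of the time."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in the range (0, 1]")
    return hourly_cost / utilization


def cost_reduction(util_before: float, util_after: float) -> float:
    """Fractional drop in effective cost when utilization rises."""
    return 1 - util_before / util_after


if __name__ == "__main__":
    price = 2.00  # hypothetical cost per GPU-hour, in dollars
    print(round(effective_cost_per_useful_hour(price, 0.35), 2))  # 5.71
    print(round(effective_cost_per_useful_hour(price, 0.90), 2))  # 2.22
    print(f"{cost_reduction(0.35, 0.90):.1%}")                    # 61.1%
```

Moving from 35% to 90% utilization cuts the cost of each useful GPU-hour by roughly 61% in this simplified model, regardless of the hourly price chosen.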

WhaleFlux provides flexible access to top-tier GPUs, not just ownership. Through both purchase and rental options (with a minimum one-month term), teams can match the perfect hardware to each task without long-term lock-in or massive capital expenditure. This approach allows organizations to use H100s for model training, H200s for memory-intensive inference, A100s for general workloads, and RTX 4090s for development—all managed through a unified interface that optimizes the entire workflow.

How WhaleFlux Maximizes Your Chosen NVIDIA GPU

WhaleFlux delivers value through several interconnected mechanisms that transform how organizations use their GPU resources:

The platform eliminates underutilization through smart scheduling that ensures no GPU cycle goes to waste. By automatically matching workloads to available resources and queuing jobs efficiently, WhaleFlux makes your chosen hardware significantly more cost-effective. This intelligent scheduling accounts for factors like job priority, resource requirements, and estimated runtime to optimize the entire workflow.
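The idea of weighing priority, resource requirements, and estimated runtime can be sketched with a simple priority queue. This is a generic greedy illustration, not WhaleFlux's actual algorithm, which the article does not describe. The `Job` fields and the `schedule` function are hypothetical names chosen for this example.

```python
import heapq
from typing import NamedTuple


class Job(NamedTuple):
    name: str
    priority: int      # higher means more urgent
    gpus_needed: int
    est_hours: float   # estimated runtime


def schedule(jobs: list[Job], free_gpus: int) -> tuple[list[str], list[str]]:
    """Greedy pass: highest priority first, shorter jobs break ties.

    A job starts if enough GPUs are free; otherwise it waits and smaller
    jobs behind it may still start (a simple form of backfilling).
    """
    # The index i makes every heap entry unique, so Job itself is never compared.
    heap = [(-j.priority, j.est_hours, i, j) for i, j in enumerate(jobs)]
    heapq.heapify(heap)

    started, waiting = [], []
    while heap:
        _, _, _, job = heapq.heappop(heap)
        if job.gpus_needed <= free_gpus:
            free_gpus -= job.gpus_needed
            started.append(job.name)
        else:
            waiting.append(job.name)
    return started, waiting


if __name__ == "__main__":
    queue = [
        Job("train-llm", priority=3, gpus_needed=8, est_hours=48.0),
        Job("eval", priority=2, gpus_needed=1, est_hours=0.5),
        Job("finetune", priority=2, gpus_needed=4, est_hours=6.0),
        Job("notebook", priority=1, gpus_needed=1, est_hours=2.0),
    ]
    print(schedule(queue, free_gpus=6))
```

With six free GPUs, the eight-GPU training job has to wait while the three smaller jobs fill the cluster instead of leaving it idle. A production scheduler would also need to guard against starving large jobs, for example by reserving capacity or aging priorities, which this sketch omits.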

WhaleFlux dramatically simplifies management by removing the DevOps burden of orchestrating workloads across different GPU types and clusters. The platform provides a unified management interface that handles resource allocation, monitoring, and optimization automatically. This means your engineering team can focus on developing AI models rather than managing infrastructure.

The platform accelerates deployment by providing a stable, optimized environment that gets models from training to production faster. With consistent configurations, automated monitoring, and proactive issue detection, WhaleFlux reduces the friction that typically slows down AI development cycles. Teams can iterate more quickly and deploy more reliably, giving them a significant competitive advantage.

The WhaleFlux Advantage: Summary of Benefits

When you implement WhaleFlux to manage your NVIDIA GPU infrastructure, you gain several compelling advantages:

• Access to the Best NVIDIA GPUs: Deploy H100, H200, A100, and RTX 4090 as needed for different workloads

• Maximized ROI: Drive utilization rates above 90%, slashing the effective cost of compute by 40-70%

• Reduced Operational Overhead: A single platform to manage your entire GPU fleet, freeing engineering resources

• Strategic Flexibility: Choose between purchase and rental models to fit your financial strategy and project needs

Conclusion: The Best GPU is a Well-Managed GPU

The best NVIDIA GPU for deep learning isn’t necessarily the most expensive or most powerful model on the market. It’s the one that best serves your project’s specific needs and is managed with maximum efficiency. Hardware selection matters, but management makes the difference between an expense and an investment.

WhaleFlux serves as the force multiplier that ensures your investment in the best NVIDIA GPU translates directly into competitive advantage, not just impressive hardware specs on a spreadsheet. By optimizing utilization, simplifying management, and accelerating deployment, WhaleFlux helps AI enterprises extract maximum value from their hardware investments.

Ready to maximize the ROI of your AI infrastructure? Let WhaleFlux help you select and manage the best NVIDIA GPU for your specific needs. Contact our team today for a personalized consultation, or learn more about our optimized GPU solutions and how we can help you reduce costs while improving performance.