Introduction: The Engine of AI – Choosing the Right GPU

The rapid advances in artificial intelligence, from large language models like GPT-4 to generative image systems, are powered largely by one piece of hardware: the Graphics Processing Unit (GPU). Today’s data center GPUs are far removed from the simple graphics cards of gaming’s past; they are massively parallel processors built to handle the enormous volume of matrix math that AI requires. With NVIDIA offering a range of options, from the data center H100 to the consumer-grade RTX 4090, selecting the right GPU has become a strategic decision that directly affects performance, project timelines, and budget.

Making the wrong choice can mean wasting thousands of dollars on underutilized resources or encountering frustrating bottlenecks that slow down development. This guide will help you navigate the NVIDIA landscape to find the perfect engine for your AI ambitions. The good news is that you don’t have to make this choice alone or commit to a single card without flexibility. WhaleFlux provides access to this full spectrum of high-performance NVIDIA GPUs, allowing businesses to test, scale, and choose the perfect fit for their specific projects, whether through rental or purchase.

Part 1. Beyond Gaming: Why GPU Specs Matter for AI

When evaluating GPUs for AI, gaming-oriented metrics such as frame rates, and even raw clock speed, tell you very little. The specifications that truly matter reflect the unique demands of machine learning workloads, and understanding them will help you decipher the comparison charts.

Tensor Cores and FP8 Precision:

Think of Tensor Cores as specialized units on the GPU dedicated to matrix multiply-accumulate operations, the fundamental math behind neural networks. The Hopper architecture (H100, H200) adds FP8 (8-bit floating point) support to its Tensor Cores, which roughly doubles peak throughput compared with FP16 while, for many training and inference workloads, costing little in accuracy. This matters most when training massive LLMs, where compute time translates directly into money.
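To make the FP16-versus-FP8 point concrete, the sketch below counts the floating-point operations in one matrix multiplication (an m×k by k×n product costs 2·m·n·k FLOPs) and converts them into an idealized runtime. The peak throughput and the 60% efficiency figure are hypothetical placeholders, not published specifications, and the 2× FP8 factor simply encodes the relationship described above; real kernels reach only a fraction of peak.

```python
def matmul_flops(m: int, n: int, k: int) -> int:
    """FLOPs for an (m x k) @ (k x n) product: m*n*k multiply-adds, 2 FLOPs each."""
    return 2 * m * n * k


def matmul_seconds(m: int, n: int, k: int, peak_tflops: float, efficiency: float = 1.0) -> float:
    """Idealized runtime at a given peak throughput (TFLOP/s) and achieved fraction of peak."""
    return matmul_flops(m, n, k) / (peak_tflops * 1e12 * efficiency)


# HYPOTHETICAL placeholder, not a published spec for any particular GPU.
PEAK_FP16_TFLOPS = 500.0
# Encodes the "roughly double the FP16 throughput" relationship described above.
PEAK_FP8_TFLOPS = 2 * PEAK_FP16_TFLOPS

if __name__ == "__main__":
    m = n = k = 8192  # an arbitrary large square matrix product
    # Hypothetical 60% of peak achieved by the kernel in both precisions.
    t16 = matmul_seconds(m, n, k, PEAK_FP16_TFLOPS, efficiency=0.6)
    t8 = matmul_seconds(m, n, k, PEAK_FP8_TFLOPS, efficiency=0.6)
    print(f"{matmul_flops(m, n, k):.3e} FLOPs")
    print(f"FP16: {t16 * 1e3:.2f} ms  FP8: {t8 * 1e3:.2f} ms  speedup: {t16 / t8:.1f}x")
```

A single matrix product is small, but a training run executes billions of them, so a 2× change in per-operation throughput compounds into days or weeks of wall-clock time.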

VRAM (Video RAM):

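The type, amount, and bandwidth of a GPU’s memory are arguably its most important features for AI. For efficient training or inference, a model’s weights, along with its activations and (during training) gradients and optimizer state, need to fit in VRAM, either on a single card or split across several.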

- HBM (High Bandwidth Memory): Used in the A100 (HBM2/HBM2e), H100 (HBM3 on the SXM version, HBM2e on PCIe), and H200 (HBM3e), this memory is stacked right next to the GPU die. It offers tremendous bandwidth (about 2 TB/s on the A100 80GB and 4.8 TB/s on the H200) and large capacities (up to 141 GB on the H200), letting you work with enormous models and datasets without memory becoming the bottleneck.

- GDDR6X: Used in the RTX 4090, this memory is fast and well suited to gaming and consumer applications, but its bandwidth (about 1 TB/s) and capacity (24 GB) are lower than HBM’s. It can still handle many AI tasks but may become a limiting factor for the very largest models.
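A quick way to see why capacity matters is to estimate how much VRAM a model’s weights alone occupy at different precisions. This minimal sketch assumes the single-card capacities discussed in this article and deliberately ignores activations, KV cache, framework overhead, and training state such as gradients and optimizer moments, so its result is only a lower bound.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "bf16": 2, "fp8": 1}

# Single-card memory capacities discussed in this article, in GB.
GPU_MEMORY_GB = {
    "RTX 4090": 24,
    "A100 40GB": 40,
    "A100 80GB": 80,
    "H100": 80,
    "H200": 141,
}


def weights_gb(num_params: float, dtype: str) -> float:
    """Weights-only footprint in GB (1 GB = 1e9 bytes); a lower bound on real usage."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


def fits_on_one_gpu(num_params: float, dtype: str) -> dict:
    """Map each GPU to whether the weights alone fit in its memory."""
    need = weights_gb(num_params, dtype)
    return {gpu: need <= cap for gpu, cap in GPU_MEMORY_GB.items()}


if __name__ == "__main__":
    for dtype in ("fp16", "fp8"):
        print(dtype, f"{weights_gb(70e9, dtype):.0f} GB", fits_on_one_gpu(70e9, dtype))
```

For a 70-billion-parameter model, FP16 weights come to 140 GB, which only the H200’s 141 GB can hold on a single card (with almost no headroom left), while FP8 halves that to 70 GB.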

Interconnect (NVLink vs. PCIe):

In a multi-GPU server, cards need to exchange data rapidly. If the standard PCIe bus is a highway, NVIDIA’s NVLink is a hyper-fast, dedicated tunnel: on the H100, NVLink provides up to 900 GB/s of GPU-to-GPU bandwidth, more than seven times that of PCIe Gen5. That bandwidth lets GPUs access each other’s memory quickly enough to split a model that is too big for one card’s VRAM across several cards. NVLink support is a key differentiator between data center cards such as the A100, H100, and H200, which have it, and the RTX 4090, which does not.
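The bandwidth gap can be illustrated with an idealized transfer-time calculation. The figures below are approximate per-direction peaks (about 64 GB/s for a PCIe Gen5 x16 link, and 450 GB/s, half of the quoted 900 GB/s aggregate, for NVLink on the H100); the 10 GB payload is hypothetical, and sustained throughput in practice is lower than peak.

```python
# Approximate per-direction peak bandwidths in GB/s; sustained rates are lower.
LINK_BANDWIDTH_GBPS = {
    "PCIe Gen5 x16": 64.0,   # ~128 GB/s aggregate across both directions
    "NVLink (H100)": 450.0,  # ~900 GB/s aggregate across both directions
}


def transfer_seconds(gigabytes: float, link: str) -> float:
    """Idealized one-way time to move `gigabytes` over the named link at peak rate."""
    return gigabytes / LINK_BANDWIDTH_GBPS[link]


if __name__ == "__main__":
    payload_gb = 10.0  # hypothetical: e.g. a shard of weights or activations
    for link in LINK_BANDWIDTH_GBPS:
        print(f"{link:>14}: {transfer_seconds(payload_gb, link) * 1e3:7.1f} ms")
```

When a model is split across GPUs, transfers like this happen on every training step, so a roughly 7× difference in link speed directly limits how well the job scales.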

Part 2. NVIDIA GPU Card Comparison: Breaking Down the Contenders

Let’s put these specs into context by comparing the four most relevant NVIDIA GPUs for AI workloads today.

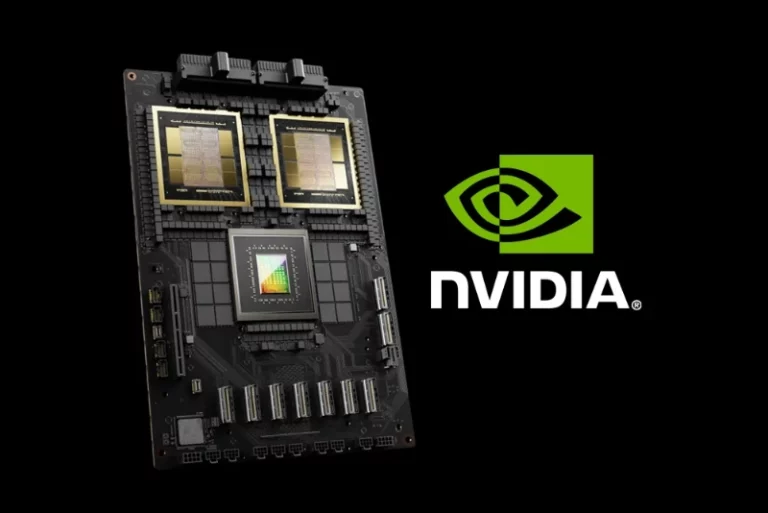

NVIDIA H100 (Hopper)

- Best Use Case: Hyperscale LLM and AI training; High-Performance Computing (HPC).

- Key Strength: Raw computational power. It combines fourth-generation Tensor Cores with FP8 support, a dedicated Transformer Engine that dynamically mixes FP8 and 16-bit precision to accelerate transformer-based models such as LLMs, and high-bandwidth HBM3 memory (on the SXM version). It’s designed to be the foundation of the world’s most powerful AI supercomputers.

- Ideal User: Large enterprises and research institutions training frontier AI models from the ground up. If you are building the next GPT, this is your starting point.

NVIDIA H200 (Hopper)

- Best Use Case: Massive-scale AI inference and giant model training.

- Key Strength: Unprecedented memory. The H200 was the first GPU to ship with HBM3e memory, pairing 141 GB of capacity with 4.8 TB/s of bandwidth, nearly double the H100’s capacity and about 1.4 times its bandwidth. This allows it to hold and serve very large models with high speed and efficiency, reducing the need for complex multi-card setups.

- Ideal User: Companies that need to deploy and run the largest models at scale with the lowest possible latency and highest throughput.

NVIDIA A100 (Ampere)

- Best Use Case: General enterprise AI training and inference; a versatile workhorse.

- Key Strength: Proven reliability and performance-per-dollar in the data center. While older than the H100, the A100, with 40 GB (HBM2) or 80 GB (HBM2e) of memory and powerful third-generation Tensor Cores, is more than capable for many enterprise AI projects, from recommender systems to mid-sized LLM fine-tuning.

- Ideal User: Established AI teams that need a reliable, powerful, and versatile GPU for a wide range of production workloads without the premium cost of the newest architecture.

NVIDIA RTX 4090 (Ada Lovelace)

- Best Use Case: AI prototyping, research, and mid-scale inference on a budget.

- Key Strength: Cost-effectiveness and accessibility. It offers tremendous computational power for its price and fits in a standard desktop workstation. However, its 24GB of GDDR6X memory and lack of NVLink can be a hard ceiling for larger models.

- Ideal User: Individual researchers, startups, and development teams who need powerful hardware for experimentation, model development, and running smaller inference tasks without the overhead of data center infrastructure.
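The four profiles above can be condensed into a simple shortlist filter. This is a hypothetical heuristic for illustration only: it uses each card’s largest common memory configuration and whether it supports NVLink, and ignores price, compute throughput, and availability, which matter just as much in a real purchasing decision.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class GpuOption:
    name: str
    memory_gb: int  # largest common single-card configuration
    nvlink: bool    # supports NVLink GPU-to-GPU interconnect


# Values taken from the comparison above.
OPTIONS = [
    GpuOption("RTX 4090", 24, False),
    GpuOption("A100", 80, True),
    GpuOption("H100", 80, True),
    GpuOption("H200", 141, True),
]


def shortlist(required_gb: float, require_nvlink: bool = False) -> List[str]:
    """Cards (smallest memory first) that can hold `required_gb` on one GPU.

    Hypothetical heuristic: ignores price, compute throughput, and availability.
    """
    return [
        g.name
        for g in sorted(OPTIONS, key=lambda g: g.memory_gb)
        if g.memory_gb >= required_gb and (g.nvlink or not require_nvlink)
    ]


if __name__ == "__main__":
    print(shortlist(20))                       # prototyping-sized model
    print(shortlist(20, require_nvlink=True))  # planning to scale across GPUs later
    print(shortlist(120))                      # very large model on a single card
```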

Part 3. From Comparison to Deployment: The Hidden Infrastructure Costs

Selecting the right card is a major victory, but it’s only half the battle. The next step—deploying and managing these GPUs—introduces a set of often-overlooked challenges that can erode your ROI.

- Multi-GPU Cluster Complexity: Operating a single GPU is straightforward. Managing a cluster of them—especially a heterogeneous mix of H100s and A100s—is incredibly complex. Efficiently distributing workloads (e.g., using Kubernetes with NVIDIA device plugins), ensuring correct driver compatibility, and handling networking between nodes requires specialized MLOps expertise.

- Cost of Idle Resources: A GPU that is not running a job is burning money. In manually managed environments, it’s common to see significant idle time due to scheduling inefficiencies, job queues, or developers holding onto resources “just in case.” For expensive hardware like the H100, this idle time represents a massive financial drain.

- Operational Overhead: The hidden cost is your team’s time. Engineers and IT staff spend countless hours provisioning machines, maintaining drivers, debugging cluster issues, and manually scheduling jobs instead of focusing on core AI research and development.
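For the Kubernetes route mentioned under multi-GPU cluster complexity, GPUs are requested through the extended resource name `nvidia.com/gpu`, which NVIDIA’s device plugin advertises to the scheduler. The sketch below builds a minimal Pod manifest as a Python dictionary and prints it as JSON, which `kubectl apply -f` accepts as well as YAML. The node-selector label `nvidia.com/gpu.product` assumes NVIDIA GPU Feature Discovery is running in the cluster, and the job name, image, and label value are hypothetical.

```python
import json
from typing import Optional


def gpu_pod_manifest(name: str, image: str, gpus: int, gpu_product: Optional[str] = None) -> dict:
    """Build a minimal Pod spec that requests whole GPUs via the NVIDIA device plugin.

    GPUs are an extended resource, so they are set under `limits`.
    The optional node selector ASSUMES nodes carry an "nvidia.com/gpu.product"
    label (as applied by GPU Feature Discovery); its value is cluster-specific.
    """
    container = {
        "name": name,
        "image": image,
        "resources": {"limits": {"nvidia.com/gpu": gpus}},
    }
    spec = {"restartPolicy": "Never", "containers": [container]}
    if gpu_product is not None:
        spec["nodeSelector"] = {"nvidia.com/gpu.product": gpu_product}
    return {"apiVersion": "v1", "kind": "Pod", "metadata": {"name": name}, "spec": spec}


if __name__ == "__main__":
    # Hypothetical job name, container image, and product label value.
    pod = gpu_pod_manifest("finetune-job", "example.com/trainer:latest", 2, "NVIDIA-A100-SXM4-80GB")
    print(json.dumps(pod, indent=2))
```

Even this minimal case shows the moving parts a team must keep consistent across a heterogeneous fleet: device plugins, node labels, and per-job resource requests.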
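The cost of idle resources is simple arithmetic: every unused GPU-hour is paid for anyway. This sketch assumes a flat, hypothetical hourly cost per GPU and a single average utilization figure; it is a rough estimate, not a billing model.

```python
HOURS_PER_MONTH = 730.0  # 8,760 hours per year / 12


def idle_cost_per_month(num_gpus: int, cost_per_gpu_hour: float, utilization: float,
                        hours_per_month: float = HOURS_PER_MONTH) -> float:
    """Spend attributable to idle GPU time, given average utilization in [0, 1]."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    return num_gpus * cost_per_gpu_hour * hours_per_month * (1.0 - utilization)


if __name__ == "__main__":
    # Hypothetical fleet: 8 GPUs at $2.50 per GPU-hour, busy 60% of the time on average.
    print(f"${idle_cost_per_month(8, 2.50, 0.60):,.0f} per month spent on idle GPUs")
```

With those hypothetical inputs, about $5,840 per month goes to GPUs doing nothing, and the figure scales linearly with both fleet size and hourly price.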

Part 4. WhaleFlux: Your Strategic Partner in GPU Deployment

Maximizing the return on whichever card you choose requires intelligent management. This is where WhaleFlux turns your GPU strategy from a complex infrastructure problem into a competitive advantage.

WhaleFlux is an intelligent GPU resource management tool designed specifically for AI enterprises. It directly addresses the hidden costs of deployment:

- Unified Management: WhaleFlux provides a single pane of glass to manage your entire fleet, whether it’s a homogeneous cluster of A100s or a heterogeneous mix of H100s, H200s, and RTX 4090s. It abstracts away the underlying complexity, allowing your team to focus on submitting jobs, not configuring hardware.

- Intelligent Orchestration: This is the core of WhaleFlux. Its smart scheduler doesn’t just assign jobs to open GPUs; it dynamically allocates workloads to the most suitable available GPU based on the job’s requirements. It ensures your high-priority training task gets on the H100, while a smaller inference job runs on an A100, maximizing the utilization of every card in your cluster and slashing costs from idle resources.

- Simplified Access: Ultimately, the best GPU is the one you can access and use efficiently. WhaleFlux offers access to all of the GPUs compared here (H100, H200, A100, RTX 4090) for purchase or long-term rental, with a minimum one-month commitment. This model provides the stability and performance consistency required for serious AI work, avoiding the unpredictability of ephemeral hourly cloud instances. With WhaleFlux, you get both the hardware and the intelligent software layer to make it sing.
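The idea of matching each job to the most suitable available card can be illustrated with a classic greedy best-fit heuristic: place the highest-priority jobs first, each on the smallest idle GPU with enough memory, so large cards stay free for the jobs that need them. This is a generic sketch with hypothetical job and GPU names; it is not WhaleFlux’s scheduling algorithm, which is not described here, and real schedulers also weigh compute needs, interconnect, queue time, and preemption.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Gpu:
    gpu_id: str
    model: str
    memory_gb: int
    busy: bool = False


@dataclass
class Job:
    name: str
    memory_gb: int
    priority: int  # higher values are scheduled first


def best_fit_schedule(jobs: List[Job], gpus: List[Gpu]) -> Dict[str, Optional[str]]:
    """Greedy best-fit: highest-priority jobs first, each on the smallest idle GPU that fits.

    Marks chosen GPUs as busy. Jobs that fit nowhere map to None (left queued).
    Illustrative heuristic only.
    """
    placement: Dict[str, Optional[str]] = {}
    for job in sorted(jobs, key=lambda j: j.priority, reverse=True):
        candidates = [g for g in gpus if not g.busy and g.memory_gb >= job.memory_gb]
        if not candidates:
            placement[job.name] = None  # no suitable GPU free: job waits in the queue
            continue
        chosen = min(candidates, key=lambda g: g.memory_gb)  # tightest memory fit
        chosen.busy = True
        placement[job.name] = chosen.gpu_id
    return placement


if __name__ == "__main__":
    fleet = [Gpu("h100-0", "H100", 80), Gpu("a100-0", "A100", 40), Gpu("rtx-0", "RTX 4090", 24)]
    queue = [Job("train-llm", 70, priority=10), Job("serve-small", 16, priority=5), Job("eval", 30, priority=1)]
    print(best_fit_schedule(queue, fleet))
```

Best-fit is chosen here because it is easy to reason about: by always taking the tightest fit, it avoids parking a small inference job on an H100 while a large training job waits.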

Part 5. Conclusion: Making an Informed Choice for Your AI Future

There is no single “best” GPU for AI. The ideal choice is a strategic decision that depends entirely on your specific use case—whether it’s large-scale training, high-throughput inference, or agile prototyping—as well as your budget constraints.

The journey doesn’t end with the purchase order. The true differentiator for modern AI teams is not just owning powerful hardware but being able to wield it with maximum efficiency and minimal operational drag. Partnering with a solution like WhaleFlux future-proofs your investment. It ensures that no matter which NVIDIA GPU you select today or tomorrow, your infrastructure will be optimized to deliver peak performance and cost-efficiency, allowing your team to innovate faster.

Part 6. Call to Action (CTA)

Ready to deploy the ideal GPU for your AI workload and supercharge your productivity?

Contact the WhaleFlux team today for a personalized consultation. We’ll help you choose, configure, and optimize your perfect GPU cluster.

Explore our GPU options and leverage our expertise to build a smarter, more efficient AI infrastructure.