Introduction: The AI Gold Rush and the GPU Bottleneck

Artificial Intelligence isn’t just the future; it’s the roaring present. Every day, new large language models (LLMs), generative AI applications, and groundbreaking machine learning projects emerge, pushing the boundaries of what’s possible. But this incredible innovation has a voracious appetite: computational power. At the heart of this revolution lies the Graphics Processing Unit (GPU), the workhorse that makes complex AI model training and inference possible.

For startups aiming to ride this wave, this creates a critical bottleneck. Accessing and, more importantly, managing this immense computational power, especially the multi-GPU clusters needed for modern LLMs, is a monumental challenge. It’s notoriously complex to set up and prohibitively expensive to maintain. This leaves many promising AI ventures stuck, struggling to scale not because of their ideas, but because of their infrastructure.

This blog post will guide you through the complex landscape of cloud GPU providers and cloud GPU costs. We’ll move beyond surface-level pricing to uncover the hidden expenses and explore how to find a sustainable, efficient solution that empowers your growth instead of stifling it.

Part 1. Navigating the Cloud GPU Jungle: A Market Overview

Before we dive into solutions, let’s map out the territory. When we talk about cloud-based GPU power, we’re generally referring to two main types of providers.

The Major Cloud GPU Providers

First, there are the hyperscalers: the tech giants whose names you know well. These include Google Cloud (Google Cloud Platform), Amazon Web Services (AWS), and Microsoft Azure. They offer a vast array of services, with GPU instances being one of many. Then, there are more specialized offerings, like NVIDIA GPU cloud services, which are tailored specifically for AI and high-performance computing workloads. Together, these providers form the backbone of the cloud GPU provider market.

The Pricing Conundrum

The standard model for almost all these providers is pay-as-you-go, or hourly billing. You turn on a GPU instance, and the clock starts ticking. While this seems flexible, it’s the source of major financial pain for startups.

- Unpredictable Bills: Your cloud GPU cost can spiral out of control quickly. A model that takes longer to train than expected, a spike in user inference requests, or even a forgotten idle instance can lead to a shocking invoice at the end of the month. Scouring the internet for the cheapest GPU cloud based on hourly rates often feels like a futile exercise, because the total cost for sustained workloads is rarely clear.

- The “Free” Illusion: You might have encountered free cloud GPU options like Google Colab. These are fantastic for learning and tiny experiments. But for any serious development or production deployment, they are immediately limiting due to strict usage caps, low-power hardware, and lack of reliability. You simply cannot build a business on them.
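To make the "unpredictable bill" problem concrete, here is a minimal sketch of the arithmetic. The function name, the rate, and the hour counts are all hypothetical figures chosen for illustration; they are not quotes from any provider.

```python
def monthly_bill(hourly_rate, gpus, planned_hours, overrun_hours=0.0, idle_hours=0.0):
    """Return (planned, actual) monthly cost in dollars for an hourly-billed cluster.

    All inputs are illustrative assumptions, not real provider pricing.
    """
    planned = hourly_rate * gpus * planned_hours
    # Overruns and forgotten idle instances are billed at the same hourly rate.
    actual = hourly_rate * gpus * (planned_hours + overrun_hours + idle_hours)
    return planned, actual


# Hypothetical scenario: 8 GPUs at $2.50/hour, 200 planned hours,
# a 60-hour training overrun, and 48 hours of forgotten idle time.
planned, actual = monthly_bill(2.50, 8, 200, overrun_hours=60, idle_hours=48)
print(f"planned ${planned:,.0f}, actual ${actual:,.0f}")
```

In this made-up scenario, a training overrun plus one forgotten weekend pushes the invoice more than 50% above the plan, without a single change to the hourly rate.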

Part 2. The Hidden Costs: Beyond the Hourly Rate

The hourly rate is just the tip of the iceberg. The true cloud GPU cost is the Total Cost of Ownership (TCO), which includes significant hidden expenses that can sink a startup’s budget.

Management Overhead

Provisioning, configuring, and monitoring a cloud-based GPU cluster is not a simple task. It requires deep expertise. You need to manage drivers, Kubernetes clusters, containerization, and networking to ensure all those expensive GPUs can talk to each other efficiently. This isn’t a one-time setup; it’s an ongoing demand on your team’s time. The need for dedicated DevOps engineers to handle this infrastructure is a massive hidden cloud GPU cost that often gets overlooked in initial budgeting. You’re not just paying for the GPU; you’re paying for the people and time to make it work.
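A rough way to see how much the people side adds is to put it in the same formula as the GPU bill. The sketch below is an assumption-laden back-of-the-envelope model: the function name, the engineer cost, the time fraction, and the "other costs" line are all hypothetical placeholders you would replace with your own numbers.

```python
def total_cost_of_ownership(gpu_bill, engineer_monthly_cost, engineer_fraction, other_costs=0.0):
    """Estimate monthly TCO in dollars.

    gpu_bill: what the provider invoices for GPU time.
    engineer_monthly_cost: fully loaded monthly cost of one engineer (assumed).
    engineer_fraction: share of that engineer's time spent on GPU infrastructure.
    other_costs: anything else you attribute to the cluster (assumed bucket).
    """
    return gpu_bill + engineer_monthly_cost * engineer_fraction + other_costs


# Hypothetical month: a $4,000 GPU bill, half of a $12,000/month engineer,
# and $500 of other cluster-related costs.
tco = total_cost_of_ownership(4000, 12000, 0.5, other_costs=500)
print(f"TCO: ${tco:,.0f}")
```

With these illustrative inputs, the GPU invoice is well under half of what the cluster actually costs the company each month.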

Underutilization & Inefficiency

This is the silent budget killer. Imagine renting a massive, powerful truck to deliver a single pizza every hour. That’s what happens with poorly managed GPU clusters. GPUs can sit idle due to:

- Software Bottlenecks: Your code or pipeline might not be optimized to keep the GPU fed with data, causing it to sit idle between tasks.

- Poor Scheduling: Jobs might not be orchestrated to maximize cluster usage, leaving GPUs empty while others are overloaded.

This waste happens even on the cheapest GPU cloud provider. During every idle hour, you are paying for nothing. Furthermore, achieving optimal performance for LLM training and inference is difficult. Without the right tools, you’re leaving a significant amount of your purchased computational power (and money) on the table.
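One way to quantify this is to divide the price you pay by the fraction of time the GPU does useful work. The following is a minimal sketch; the function names and the 40% utilization figure are illustrative assumptions, not measurements.

```python
def effective_hourly_cost(hourly_rate, utilization):
    """Cost per hour of *useful* GPU work, given utilization in (0, 1]."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in the range (0, 1]")
    return hourly_rate / utilization


def wasted_spend(bill, utilization):
    """Portion of a bill that paid for idle GPU time."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in the range (0, 1]")
    return bill * (1 - utilization)


# Hypothetical: a $2.50/hour GPU that is busy only 40% of the time.
print(effective_hourly_cost(2.50, 0.40))  # effective price per useful hour
print(wasted_spend(4000, 0.40))           # dollars of a $4,000 bill spent on idle time
```

Seen this way, a cheaper hourly rate matters less than it appears: at low utilization, the effective price per useful hour is a multiple of the listed rate.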

Part 3. A Smarter Path: Optimizing for Efficiency and Predictability

So, if the problem isn’t just the price tag but the total cost and complexity of ownership, the solution must address both. The goal shifts from simply finding a provider to maximizing the value from every single computation (every FLOP) of your NVIDIA GPU cloud computing investment.

This is where a new category of tool comes in: cloud GPU management software for startups. These tools are designed to move beyond basic provisioning and tackle the core issues of optimization and automation. They help you squeeze every drop of value from your hardware, turning raw power into efficient, actionable results.

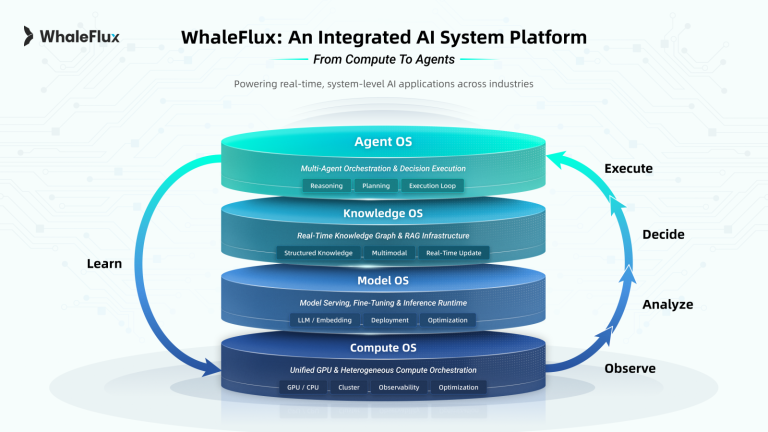

Part 4. Introducing WhaleFlux: Intelligent GPU Resource Management for AI Enterprises

This brings us to the solution. WhaleFlux is a smart GPU resource management tool built from the ground up for AI enterprises. It is the direct answer to the challenges of cost, complexity, and inefficiency we’ve outlined. Our value proposition is clear: we optimize multi-GPU cluster efficiency to drastically lower your cloud GPU cost while simultaneously accelerating the deployment speed and stability of your large language models.

How does WhaleFlux achieve this? Through a set of powerful features designed to solve these core problems:

- Intelligent Orchestration: Think of WhaleFlux as a brilliant air traffic controller for your GPU cluster. It doesn’t just hand over the keys; it automatically schedules and manages workloads across all your GPUs. It ensures that jobs are placed where there is available capacity, maximizing the utilization of every single GPU you’re paying for. This dramatically reduces waste and ensures your investment is actively working for you.

- Performance Boost: WhaleFlux isn’t just about management; it’s about enhancement. Our software is fine-tuned to enhance the stability and speed of large language model deployments. This means your models train faster and serve inference requests more reliably, getting your AI products to market quicker and providing a better experience for your users.

- Cost Transparency & Control: We bring clarity to your cloud spending. WhaleFlux provides detailed insights into how your resources are being used and what it costs. This moves you away from the unpredictable, scary billing cycles of hourly models and towards a predictable, understandable cost structure.
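To give a feel for what "placing jobs where there is available capacity" means, here is a toy scheduler. It is only an illustration of the general idea using a simple greedy, longest-job-first heuristic; it is not WhaleFlux's actual algorithm, and the job names and hour estimates are made up.

```python
import heapq


def schedule(jobs, num_gpus):
    """Greedily assign jobs to the currently least-loaded GPU.

    jobs: dict mapping job name -> estimated hours (illustrative estimates).
    Returns a dict mapping GPU index -> list of job names, in placement order.
    """
    # Min-heap of (accumulated hours, gpu index): the least-loaded GPU pops first.
    heap = [(0.0, gpu) for gpu in range(num_gpus)]
    heapq.heapify(heap)
    placement = {gpu: [] for gpu in range(num_gpus)}

    # Place the longest jobs first so short jobs can fill the remaining gaps.
    for name, hours in sorted(jobs.items(), key=lambda kv: kv[1], reverse=True):
        load, gpu = heapq.heappop(heap)
        placement[gpu].append(name)
        heapq.heappush(heap, (load + hours, gpu))
    return placement


# Hypothetical workload on a 2-GPU cluster.
jobs = {"train-a": 8, "train-b": 5, "eval": 3, "finetune": 4}
print(schedule(jobs, 2))
```

Even this simple heuristic keeps both GPUs busy for a similar number of hours instead of piling work onto one while the other sits empty. A production orchestrator would also have to account for memory, interconnect topology, priorities, and preemption.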

Part 5. The WhaleFlux Advantage: Power and Flexibility

What makes WhaleFlux different from generic GPU cloud providers? It’s our combination of top-tier hardware and a customer-aligned commercial model.

Top-Tier Hardware Stack

We provide access to a curated selection of the most powerful GPUs on the market. Whether you need the sheer power of the NVIDIA H100 and NVIDIA H200 for training massive models, the proven reliability of the NVIDIA A100 for a variety of tasks, or the cost-effectiveness of the NVIDIA RTX 4090 for inference and development, we have you covered. This allows you to choose the right tool for your specific job, ensuring performance and cost-effectiveness.

Simplified, Predictable Commercial Model

Here is a key differentiator that truly aligns our success with yours: WhaleFlux supports purchase or rental terms, but we do not support hourly usage. Our minimum rental period is one month.

We frame this intentionally as a major benefit, not a limitation. Here’s why:

- Encourages Long-Term Planning: It incentivizes you to think about efficiency and stable growth, not just short-term experiments.

- Eliminates Billing Surprises: You will never log into a portal to find a runaway hourly bill because a process got stuck. Your costs are predictable and stable.

- Aligns Our Interests: Because we don’t profit from your inefficiency or idle time, our team is deeply motivated to ensure our cloud GPU management software is working perfectly to maximize the value you get from your hardware. We are invested in your success. This model is designed for serious AI enterprises building for the long haul.
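If you are weighing a flat monthly rental against hourly billing elsewhere, the break-even point is simple to compute. The sketch below uses hypothetical prices only; they are not WhaleFlux prices or any provider's published rates, and the function names are our own.

```python
HOURS_PER_MONTH = 720  # assumes a 30-day month


def break_even_hours(monthly_price, hourly_rate):
    """Hours of use per month at which a flat monthly price equals hourly billing."""
    return monthly_price / hourly_rate


def cheaper_option(monthly_price, hourly_rate, hours_used):
    """Return 'monthly' or 'hourly', whichever costs less for the given usage."""
    return "monthly" if hourly_rate * hours_used > monthly_price else "hourly"


# Hypothetical: $1,200/month flat versus $2.50/hour on demand.
hours = break_even_hours(1200, 2.50)
print(f"break-even at {hours:.0f} hours ({hours / HOURS_PER_MONTH:.0%} of the month)")
print(cheaper_option(1200, 2.50, hours_used=600))
```

For sustained production workloads that run most of the month, usage typically sits above the break-even point, which is the situation a monthly model is designed for. For occasional experiments it usually does not.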

Part 6. Who is WhaleFlux For? (Ideal Customer Profile)

WhaleFlux is not for everyone. It is specifically designed for:

- AI startups and scale-ups that are running production-grade LLM workloads and need reliable, high-performance infrastructure.

- Technical teams that are tired of wrestling with the complexity and hidden costs of managing their own Google Cloud GPU instances or other cloud clusters and want to focus their DevOps resources on building product, not managing infrastructure.

- Companies that value performance stability and predictable budgeting over the fleeting, often illusory, flexibility of hourly billing.

Part 7. Conclusion: Building Your AI Future on a Stable Foundation

The cloud GPU market is complex and filled with hidden pitfalls. As we’ve seen, true savings and operational success don’t come from simply finding the lowest hourly rate. They come from intelligent management, maximizing efficiency, and achieving predictable costs.

This requires a partner that provides more than just raw power; it requires a partner that provides the intelligence to use that power effectively. WhaleFlux is that partner. We provide best-in-class NVIDIA GPU cloud hardware and, more importantly, the sophisticated cloud GPU management software needed to tame it, optimize it, and turn it into your competitive advantage.

Ready to stop wrestling with cloud GPU providers and start truly optimizing your AI infrastructure?

Visit our website to learn how WhaleFlux can help you tame your GPU costs and deploy your models faster. Let’s build the future of AI on a stable, efficient foundation.