Introduction

The transition from “AI as a curiosity” to “AI as a utility” has been the defining narrative of the mid-2020s. However, as enterprises move past simple chat interfaces toward complex, autonomous AI Agent Workforces, they encounter a sobering reality: traditional software monitoring is insufficient for the non-deterministic nature of Large Language Models (LLMs).

In a world where a sub-optimal prompt or a drifting data distribution can cost millions in compute and reputation, AI Observability Platforms have emerged as the mission-critical “flight recorders” for the intelligence stack. This guide explores the architecture of modern observability, the top platforms dominating the market, and how foundational infrastructure like WhaleFlux is redefining the efficiency of these data-hungry systems.

The Anatomy of AI Observability

Traditional observability relies on the “Three Pillars”: Metrics, Logs, and Traces. For AI-driven systems, these pillars must evolve into a multi-dimensional framework that understands context, semantics, and cost.

1. Telemetry and Data Integration Pipelines

The data integration pipeline behind modern AI observability is no longer a passive collector. It must intercept high-frequency interactions between the user, the model, and external tools (for example, tools exposed through the Model Context Protocol, MCP). This requires a low-latency “sidecar” architecture that captures inputs, outputs, and intermediate thought chains without degrading the user experience.
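As a rough illustration of the capture step, the sketch below wraps a text-in, text-out function so that each call records its input, output, and latency. The names `Span`, `TelemetryBuffer`, and `traced` are hypothetical and do not come from any platform discussed here. A real sidecar would ship spans asynchronously to a collector rather than keep them in memory.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Span:
    """One captured model or tool interaction (hypothetical schema)."""
    name: str
    input_text: str
    output_text: str
    latency_ms: float


@dataclass
class TelemetryBuffer:
    """In-memory stand-in for a sidecar that would export spans out of band."""
    spans: List[Span] = field(default_factory=list)

    def record(self, span: Span) -> None:
        self.spans.append(span)


def traced(name: str, buffer: TelemetryBuffer) -> Callable:
    """Wrap a text-in, text-out callable so every call is recorded."""
    def decorator(fn: Callable[[str], str]) -> Callable[[str], str]:
        def wrapper(prompt: str) -> str:
            start = time.perf_counter()
            result = fn(prompt)
            elapsed_ms = (time.perf_counter() - start) * 1000.0
            buffer.record(Span(name, prompt, result, elapsed_ms))
            return result
        return wrapper
    return decorator


buffer = TelemetryBuffer()


@traced("fake_model", buffer)
def fake_model(prompt: str) -> str:
    # Placeholder for a real model or tool call.
    return prompt.upper()


reply = fake_model("hello")
```

The caller still receives the model's reply unchanged, and `buffer.spans` holds one record per call that an exporter could drain in the background.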

2. Semantic Monitoring & LLM Evaluation

Unlike a SQL query that either works or fails, an LLM output can be grammatically perfect but factually disastrous. Observability platforms now use “Evaluator Models” to score outputs for hallucination, sentiment, and safety in real time.
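To make the scoring idea concrete, here is a minimal sketch in which a simple word-overlap heuristic stands in for an evaluator model. This is an assumption made for illustration: production evaluators are typically models themselves, and the `grounding_score` function and its 0.6 threshold are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Evaluation:
    scores: Dict[str, float]
    passed: bool


def _words(text: str) -> List[str]:
    """Lowercase words with trailing punctuation stripped."""
    cleaned = [w.strip(".,").lower() for w in text.split()]
    return [w for w in cleaned if w]


def grounding_score(output: str, context: str) -> float:
    """Fraction of output words that also appear in the context.

    A toy proxy for a hallucination check, not a real evaluator model.
    """
    out_words = _words(output)
    if not out_words:
        return 0.0
    ctx_words = set(_words(context))
    hits = sum(1 for w in out_words if w in ctx_words)
    return hits / len(out_words)


def evaluate(output: str, context: str, threshold: float = 0.6) -> Evaluation:
    """Score one output and flag it if it falls below the threshold."""
    score = grounding_score(output, context)
    return Evaluation(scores={"grounding": score}, passed=score >= threshold)


context = "The invoice total is 420 dollars and is due on Friday."
good = evaluate("The invoice total is 420 dollars.", context)
bad = evaluate("Payment was refunded yesterday by the bank.", context)
```

Here `good` passes because every word is supported by the context, while `bad` is flagged because only one of its seven words appears there.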

3. Infrastructure Saturation & Cost Control

With GPUs being the “new oil,” observability must extend down to the silicon. Tracking GPU saturation and tokens-per-second (TPS) efficiency is vital for maintaining a healthy ROI. This is where the synergy between observability software and high-performance infrastructure becomes apparent.
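The link between throughput and ROI is simple arithmetic, sketched below. The token count, timing, and the 2.00 USD hourly GPU price are made-up figures for illustration only.

```python
def tokens_per_second(tokens_generated: int, wall_seconds: float) -> float:
    """Throughput over a measurement window."""
    if wall_seconds <= 0:
        raise ValueError("wall_seconds must be positive")
    return tokens_generated / wall_seconds


def cost_per_million_tokens(gpu_hourly_usd: float, tps: float) -> float:
    """Compute cost of one million tokens at a sustained throughput."""
    if tps <= 0:
        raise ValueError("tps must be positive")
    tokens_per_hour = tps * 3600
    return gpu_hourly_usd / tokens_per_hour * 1_000_000


# Hypothetical measurement: 12,000 tokens generated in 8 seconds.
tps = tokens_per_second(12_000, 8.0)

# Hypothetical price: 2.00 USD per GPU hour.
cost = cost_per_million_tokens(2.0, tps)
```

With these numbers the GPU sustains 1,500 tokens per second, which works out to roughly 0.37 USD per million tokens. Halving throughput at the same hourly price doubles that cost, which is why saturation belongs on the same dashboard as semantic quality.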

The Ecosystem: Top AI Observability Platforms

Looking at the landscape in 2025 and 2026, several key players have set the standard for AI-powered observability platforms.

LangSmith: The Developer’s Choice

Born from the LangChain ecosystem, LangSmith focuses heavily on the debugging lifecycle. It excels at visualizing complex “chains” and “graphs,” allowing developers to see exactly where an agent lost its way. Its testing and versioning capabilities make it the gold standard for rapid prototyping.

WhyLabs: The Enterprise Guardrail

As a leader among top AI observability platforms, WhyLabs focuses on data health and model drift. It is particularly adept at identifying when the “real world” has changed so much that your model’s training data is no longer relevant, triggering automated retraining alerts through predictive analytics.

WhaleFlux: The Architectural Backbone

While software platforms monitor the logic, the underlying performance depends on the infrastructure. This is where WhaleFlux enters the conversation.

WhaleFlux is an integrated AI infrastructure platform designed for Industrial-Scale AI. While many observability tools struggle with the overhead of data collection, WhaleFlux provides a Hardened Control Plane that synchronizes compute, models, and agents. By utilizing WhaleFlux’s 99.9% Production SLA and built-in infrastructure telemetry, enterprises can ensure that their observability data integration pipelines are not just capturing logs, but are running on a resilient foundation that optimizes GPU scheduling and reduces TCO by 40-70%.

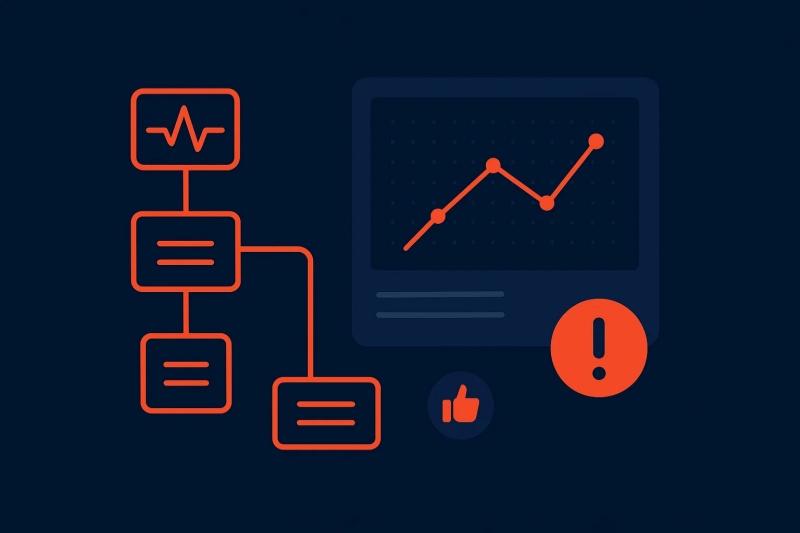

Advanced Trends: Anomaly Detection & Predictive Analytics 2025-2026

The current frontier is the shift from reactive monitoring to proactive prevention.

Best AI-Powered Observability for Anomaly Detection

In 2025, the best AI-powered observability platforms for anomaly detection began using “Unsupervised Shadow Models.” These shadows run alongside production agents, predicting the expected output range. If the production model deviates—perhaps due to a subtle prompt injection attack or a hardware-level glitch—the system triggers an automated failover.
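The deviation check can be sketched as follows, assuming the shadow supplies an expected numeric range for some signal (such as a quality score) and that failover is triggered after several consecutive out-of-range observations. The `ShadowMonitor` class and its `max_deviations` parameter are hypothetical, and real platforms may use very different logic.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class ShadowMonitor:
    """Tracks how often production output leaves the shadow's expected range."""
    max_deviations: int = 3          # consecutive misses that trigger failover
    consecutive_deviations: int = 0
    failed_over: bool = False

    def observe(self, production_value: float,
                expected_range: Tuple[float, float]) -> bool:
        """Record one observation and return whether failover is active."""
        low, high = expected_range
        if low <= production_value <= high:
            # Back in range: reset the streak.
            self.consecutive_deviations = 0
        else:
            self.consecutive_deviations += 1
            if self.consecutive_deviations >= self.max_deviations:
                self.failed_over = True
        return self.failed_over


monitor = ShadowMonitor()
expected = (0.8, 1.0)  # range predicted by the shadow model (made-up values)
states = [monitor.observe(value, expected) for value in (0.9, 0.2, 0.1, 0.3)]
```

Requiring a streak rather than a single miss is one way to avoid failing over on ordinary noise. The right threshold depends on how costly a false failover is compared with a missed incident.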

Top Observability Platforms with AI Predictive Analytics

Modern platforms now utilize predictive analytics to forecast GPU demand. By analyzing historical traffic patterns and model complexity, these systems can pre-provision clusters on WhaleFlux, ensuring that an enterprise never hits a “Cold Start” latency spike during peak hours.
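As a simplified illustration of demand forecasting, the sketch below uses a moving average of recent traffic plus a safety headroom to size a cluster ahead of time. The traffic history, the per-GPU capacity, and the 20% headroom are assumptions, and no WhaleFlux API is shown. The result would be handed to whatever provisioning interface the infrastructure exposes.

```python
import math
from typing import Sequence


def forecast_requests_per_second(history: Sequence[float], window: int = 3) -> float:
    """Moving-average forecast over the most recent `window` samples."""
    if not history:
        raise ValueError("history must not be empty")
    if window <= 0:
        raise ValueError("window must be positive")
    recent = history[-window:]
    return sum(recent) / len(recent)


def gpus_to_provision(forecast_rps: float, rps_per_gpu: float,
                      headroom: float = 0.2) -> int:
    """GPUs needed to serve the forecast with spare capacity, rounded up."""
    if rps_per_gpu <= 0:
        raise ValueError("rps_per_gpu must be positive")
    return math.ceil(forecast_rps * (1 + headroom) / rps_per_gpu)


# Hypothetical hourly peaks in requests per second.
history = [80.0, 100.0, 120.0, 140.0]
forecast = forecast_requests_per_second(history)

# Hypothetical capacity: one GPU serves 25 requests per second.
gpus = gpus_to_provision(forecast, rps_per_gpu=25.0)
```

With this history the forecast is 120 requests per second, and with 20% headroom that calls for 6 GPUs. A production forecaster would also account for seasonality and model complexity, which a plain moving average ignores.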

Bridging the Gap: From Data to Decision

The ultimate goal of an AI-driven observability platform is to turn complexity into Production-Grade Execution. To achieve this, an enterprise must integrate three distinct layers:

- The Silicon Layer (WhaleFlux): Ensuring hardware-level isolation and maximum GPU utilization.

- The Orchestration Layer: Managing the “Refinery” of fine-tuned models.

- The Intelligence Layer (LangSmith/WhyLabs): Monitoring the semantic accuracy of the agents.

When these layers work in synergy, AI moves from a “Black Box” to a transparent, auditable, and scalable business asset.

Conclusion

AI Observability is the difference between a prototype that “sometimes works” and an Autonomous AI Workforce that drives a global enterprise. By selecting the right combination of software tools like LangSmith or WhyLabs, and anchoring them on a resilient, high-performance foundation like WhaleFlux, organizations can finally achieve the “three nines” of AI reliability.

As we progress through 2026, the focus will continue to shift toward deterministic outcomes. In this high-stakes environment, being able to see into the heart of your AI isn’t just a technical luxury—it is a competitive necessity.

Frequently Asked Questions (FAQ)

1. What is the main difference between traditional monitoring and AI observability?

Traditional monitoring tracks “known-unknowns” (uptime, CPU, RAM). AI observability tracks “unknown-unknowns” (semantic drift, hallucination, and the reasoning logic of autonomous agents), requiring semantic analysis rather than just threshold alerts.

2. How does WhaleFlux improve AI observability performance?

WhaleFlux provides high-fidelity infrastructure telemetry and hardware-level isolation. By reducing data friction and providing a unified control plane, it ensures that the overhead of monitoring doesn’t degrade the performance of your AI Agent Workforces.

3. Is LangSmith suitable for large-scale enterprise production?

Yes, especially when paired with a hardened infrastructure. LangSmith’s features are excellent for debugging complex logic, while an integrated stack like WhaleFlux handles the scale, security, and 24/7 monitoring required for mission-critical apps.

4. Can these platforms help in reducing the cost of AI operations?

Absolutely. Enterprises running on platforms like WhaleFlux can see a 40-70% reduction in TCO through intelligent GPU scheduling and model quantization. Observability tools contribute by identifying “token waste” and optimizing prompt lengths.

5. How do I choose between the top observability platforms for 2025?

If your focus is on developer experience and agent tracing, look at LangSmith. If your priority is data integrity, drift detection, and enterprise compliance, WhyLabs is a leader. For a full-stack approach that covers everything from silicon to agent execution, WhaleFlux provides the most resilient foundation.