We are currently witnessing a “Compute Renaissance”—a period of unprecedented transformation where the boundaries of what we can simulate, predict, and build are being rewritten. For decades, Moore’s Law provided a predictable roadmap for performance. But as we push into the frontiers of generative AI and complex molecular modeling, the industry has shifted its focus from single-core speed to massively parallel architectures and quantum-classical hybrid systems.

This evolution isn’t just about adding more raw power; it’s about how that power talks to itself and how it stays alive under pressure. From the ubiquity of GPU Parallel Computing to the cutting-edge promise of NVQLink Quantum-GPU interconnects, the hardware landscape is becoming far more complex. However, in this race for “the next big thing,” many organizations overlook a fundamental truth: Performance is an illusion if it isn’t backed by reliability. In the following sections, we will explore the trajectory of modern acceleration and the critical role of stability-first systems like WhaleFlux in securing our computational future.

1. The Power of GPU Parallel Computing

The shift from serial to parallel computing defines the modern AI era. While a CPU acts as a high-speed processor with a few “lanes” tuned for complex, branching logic, a GPU functions as a “thousand-lane” highway of simpler cores. By breaking massive problems into smaller, simultaneous tasks, GPU parallel computing has cut the training time of Large Language Models (LLMs) from what would be years on CPUs alone to weeks or days on large clusters. This high-throughput architecture is the bedrock of the modern AI data center.
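The decomposition idea can be sketched in a few lines of Python. This is only an illustration of the pattern (split, process each piece independently, combine), not GPU code: the function names and the `num_workers` parameter are hypothetical, and a Python thread pool stands in for GPU lanes without delivering GPU-style speedups.

```python
from concurrent.futures import ThreadPoolExecutor


def split_into_chunks(values, num_chunks):
    """Split a list into at most num_chunks contiguous, near-equal slices."""
    size = max(1, -(-len(values) // num_chunks))  # ceiling division
    return [values[i:i + size] for i in range(0, len(values), size)]


def sum_of_squares(chunk):
    """Work done by one 'lane'; it needs nothing from any other chunk."""
    return sum(x * x for x in chunk)


def parallel_sum_of_squares(values, num_workers=4):
    """Fan the chunks out to workers, then combine the partial results."""
    chunks = split_into_chunks(values, num_workers)
    with ThreadPoolExecutor(max_workers=num_workers) as pool:
        partials = list(pool.map(sum_of_squares, chunks))
    return sum(partials)
```

The key property is that `sum_of_squares` never reads another chunk's data, which is what makes the work safe to run simultaneously.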

2. High-Performance Computing (HPC) and NVLink

As clusters grow, the bottleneck shifts from individual chip speed to interconnect bandwidth. NVIDIA’s NVLink addresses this by providing a high-speed, direct GPU-to-GPU bridge that far exceeds standard PCIe limits. In the world of High-Performance Computing (HPC), NVLink lets the GPUs within a node or rack act much like a single, unified computational engine, moving data at rates on the order of a terabyte per second per GPU in recent generations to keep the “parallel highway” moving without congestion.
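A back-of-the-envelope calculation shows why link bandwidth matters. The sketch below assumes an ideal link (no latency or protocol overhead). The two bandwidth figures are illustrative placeholders rather than measurements or vendor specifications, and the link names are hypothetical.

```python
def transfer_seconds(payload_bytes, bandwidth_gb_per_s):
    """Ideal time to move a payload over a link, ignoring latency and overhead."""
    if bandwidth_gb_per_s <= 0:
        raise ValueError("bandwidth must be positive")
    return payload_bytes / (bandwidth_gb_per_s * 1e9)


# Assumed, illustrative bandwidths in GB/s (placeholders, not measured values).
LINKS_GB_PER_S = {"slower_link": 64.0, "faster_link": 900.0}


def compare_links(payload_bytes, links=LINKS_GB_PER_S):
    """Return the ideal transfer time in seconds for each named link."""
    return {name: transfer_seconds(payload_bytes, bw) for name, bw in links.items()}


# Example payload: 7 billion 16-bit values, i.e. 14 GB of data.
payload = 7_000_000_000 * 2
times = compare_links(payload)
```

Under these assumptions the slower link needs roughly 0.22 seconds per exchange and the faster one under 0.02 seconds. When such an exchange happens at every training step, that gap compounds quickly.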

3. The Reliability Anchor: WhaleFlux

Even with the fastest interconnects, massive scale introduces massive risk. A single hardware “hiccup” in a DGX cluster can crash a million-dollar training job. This is where WhaleFlux becomes indispensable.

Designed with a “stability before scale” philosophy, WhaleFlux is a Self-Healing System that monitors GPU cluster health in real time. Through failure prediction, WhaleFlux identifies degraded components before they fail and automatically reroutes tasks to healthy nodes. For teams pushing the limits of HPC, WhaleFlux provides the operational “safety net” that turns raw hardware power into consistent, reliable results.
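The monitor, predict, and reroute cycle can be pictured with a toy model. The sketch below is not WhaleFlux’s API or algorithm. The `Node` fields, the thresholds, and the "least-loaded healthy node" policy are all assumptions chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    ecc_errors_per_hour: float  # hypothetical telemetry signal
    temperature_c: float        # hypothetical telemetry signal
    tasks: list = field(default_factory=list)


def is_degraded(node, max_ecc_rate=5.0, max_temp_c=85.0):
    """Toy rule: flag a node when either signal crosses an assumed threshold."""
    return node.ecc_errors_per_hour > max_ecc_rate or node.temperature_c > max_temp_c


def reroute_tasks(nodes):
    """Move every task off degraded nodes onto the least-loaded healthy node."""
    healthy = [n for n in nodes if not is_degraded(n)]
    if not healthy:
        raise RuntimeError("no healthy nodes available")
    moved = []
    for node in nodes:
        if is_degraded(node):
            while node.tasks:
                task = node.tasks.pop(0)
                target = min(healthy, key=lambda n: len(n.tasks))
                target.tasks.append(task)
                moved.append((task, node.name, target.name))
    return moved
```

A production system would replace the fixed thresholds with a learned or statistical predictor and would checkpoint work before moving it, but the control flow stays the same: observe, classify, migrate.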

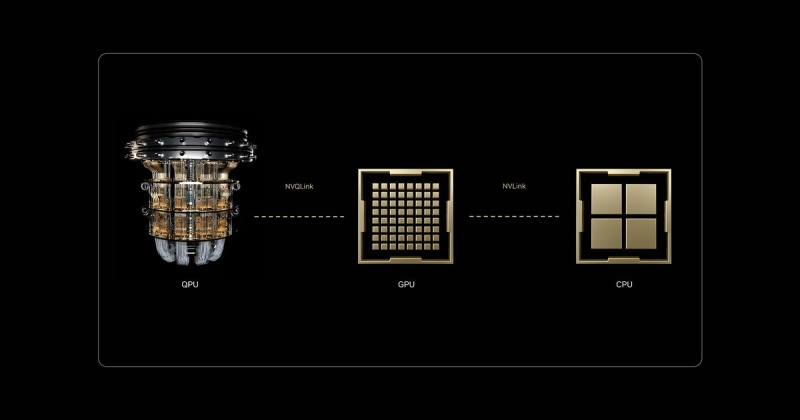

4. The Future: NVQLink and Quantum-GPU Convergence

The next frontier isn’t just more GPUs; it’s the integration of Quantum Processing Units (QPUs). While GPUs are masters of parallel math, QPUs are expected to excel at specific, extremely hard problems such as certain classes of optimization.

The missing link has been communication, which is where NVQLink enters the frame. Unlike standard interconnects, NVQLink is a dedicated, low-latency link specifically designed for Quantum-GPU computing. It allows the GPU to handle classical data pre-processing while offloading classically intractable subroutines to the QPU in real time. This hybrid architecture, powered by NVQLink, could represent one of the most significant leaps in computing since the invention of the transistor.
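To make the hybrid division of labor concrete, here is a minimal sketch of one common quantum-classical pattern, a variational loop, in which a classical optimizer repeatedly queries a quantum device. It does not use NVQLink or any real quantum SDK. `mock_qpu_evaluate` is a plain Python stand-in returning the known expectation value cos(angle) for a single rotated qubit, and all names and parameters are hypothetical.

```python
import math


def mock_qpu_evaluate(angle):
    """Stand-in for a QPU call: <Z> after an RY(angle) rotation of |0> is cos(angle)."""
    return math.cos(angle)


def hybrid_minimize(start_angle, steps=200, learning_rate=0.1):
    """Classical loop that queries the (mock) QPU and updates one parameter."""
    angle = start_angle
    for _ in range(steps):
        # Parameter-shift rule: estimate the gradient from two shifted evaluations.
        plus = mock_qpu_evaluate(angle + math.pi / 2)
        minus = mock_qpu_evaluate(angle - math.pi / 2)
        gradient = 0.5 * (plus - minus)
        angle -= learning_rate * gradient
    return angle, mock_qpu_evaluate(angle)


best_angle, best_cost = hybrid_minimize(1.0)
```

Every iteration involves round trips between the classical and quantum sides, which is why the latency of the link between them matters so much in a real system.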

Conclusion: Architecting the Future of Resilient Compute

The journey from standard GPU acceleration to the quantum-integrated clusters of tomorrow is not a straight line—it is a leap in complexity. As NVLink and NVQLink continue to dissolve the barriers between different processing units, the “computer” is no longer a box under a desk; it is a sprawling, interconnected living organism.

In this high-stakes environment, the philosophy of “stability before scale” is no longer optional. Innovation without resilience is merely a gamble. By integrating failure prediction and self-healing capabilities through WhaleFlux, we ensure that the next generation of breakthroughs—whether in climate science, medicine, or artificial intelligence—is built on a bedrock of ironclad uptime. The future belongs to those who can not only harness the speed of the quantum era but also master the art of keeping those systems running.

Frequently Asked Questions

1. What is the difference between NVLink and NVQLink?

NVLink is designed for high-speed communication between multiple GPUs. NVQLink is a specialized interconnect designed to bridge the gap between GPUs and Quantum Processing Units (QPUs), enabling hybrid quantum-classical computing.

2. Why is WhaleFlux necessary for these high-speed systems?

The faster and larger a system becomes, the more devastating a single failure is. WhaleFlux provides failure prediction and self-healing, ensuring that the complex web of GPUs and QPUs remains operational without manual intervention.

3. How does “Parallel Computing” benefit AI?

AI involves billions of repetitive math operations (mostly matrix multiplications). Parallel computing allows a GPU to perform thousands of these operations at the same time, rather than one by one.
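The reason matrix multiplication parallelizes so well is that every output element can be computed without reference to any other. The sketch below illustrates this on the CPU in plain Python. The function names and the `order` parameter are hypothetical, and the cells are visited sequentially in an arbitrary order simply to show that order does not matter.

```python
def output_cell(a, b, i, j):
    """One output element: depends only on row i of a and column j of b."""
    return sum(a[i][k] * b[k][j] for k in range(len(b)))


def matmul_any_order(a, b, order=None):
    """Multiply two matrices, visiting output cells in a caller-chosen order."""
    rows, cols = len(a), len(b[0])
    cells = [(i, j) for i in range(rows) for j in range(cols)]
    if order is not None:
        cells = order(cells)
    result = [[0] * cols for _ in range(rows)]
    for i, j in cells:
        result[i][j] = output_cell(a, b, i, j)
    return result
```

Because reversing or shuffling the visiting order gives the same matrix, a GPU is free to assign each cell (or tile of cells) to a different thread and compute them all at once.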

4. Can NVQLink work with existing GPU clusters?

Yes, the goal of NVQLink is to allow existing high-performance GPU environments to integrate Quantum accelerators, creating a hybrid system that can solve problems previously thought impossible.

5. Is High-Performance Computing (HPC) only for big tech companies?

While big tech leads the way, HPC is now essential in medicine (drug discovery), finance (risk modeling), and climate science. Tools like WhaleFlux make this power more accessible by reducing the complexity of maintaining such large systems.