From AI Models to Production, Simplified.

Tired of fragmented AI toolchains and infrastructure headaches? WhaleFlux consolidates the entire model lifecycle into a single, automated workflow, allowing your team to focus on innovation instead of operations.

AI Model Excellence, Delivered

Unlock the full potential of your AI models with our end-to-end platform. Maximize performance and accelerate your workflows from start to finish.

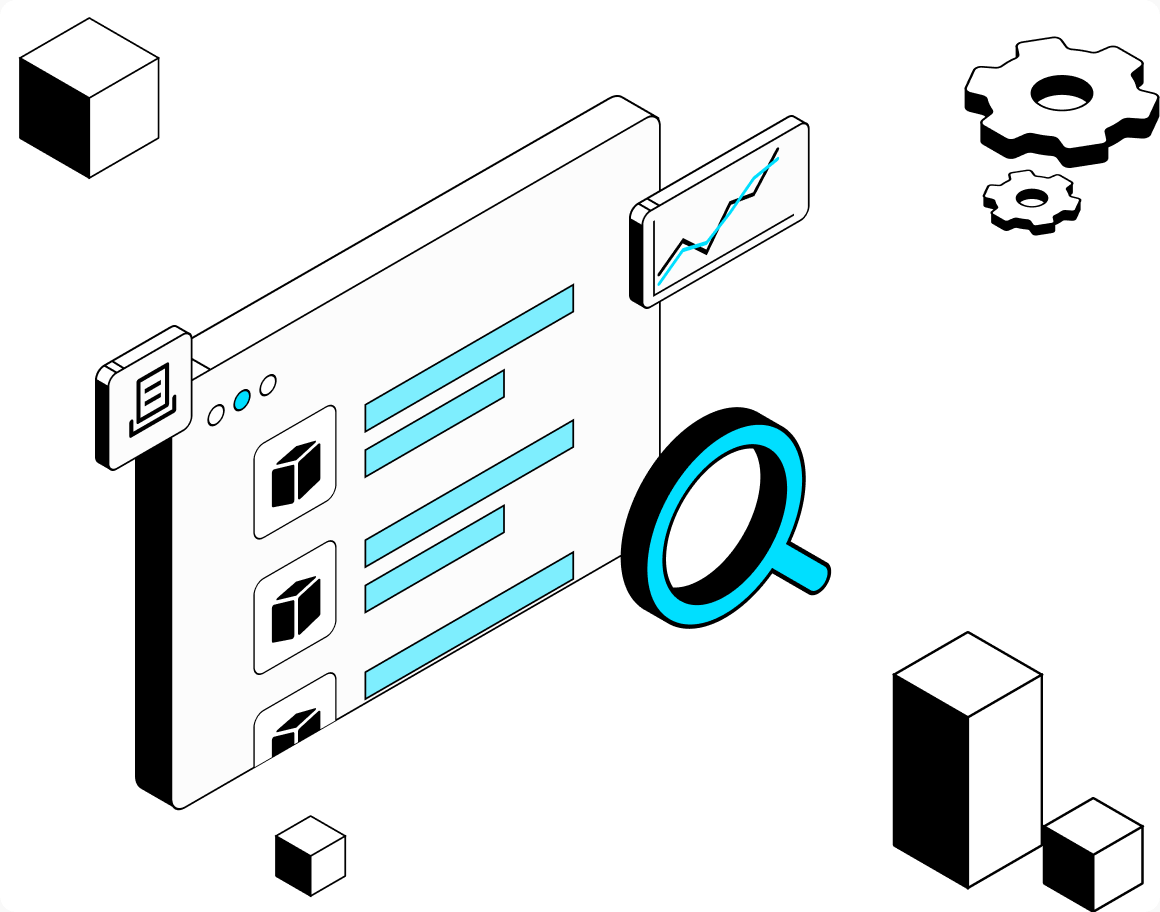

Find the Perfect AI Model for Your Business

Challenge:

Too many models, too little clarity.

WhaleFlux enables you to:

Easily explore and compare diverse AI models in one unified hub.

Instantly test and evaluate models without complex setup.

Identify the ideal model for your enterprise datasets.

Compare performance side-by-side for confident selection.

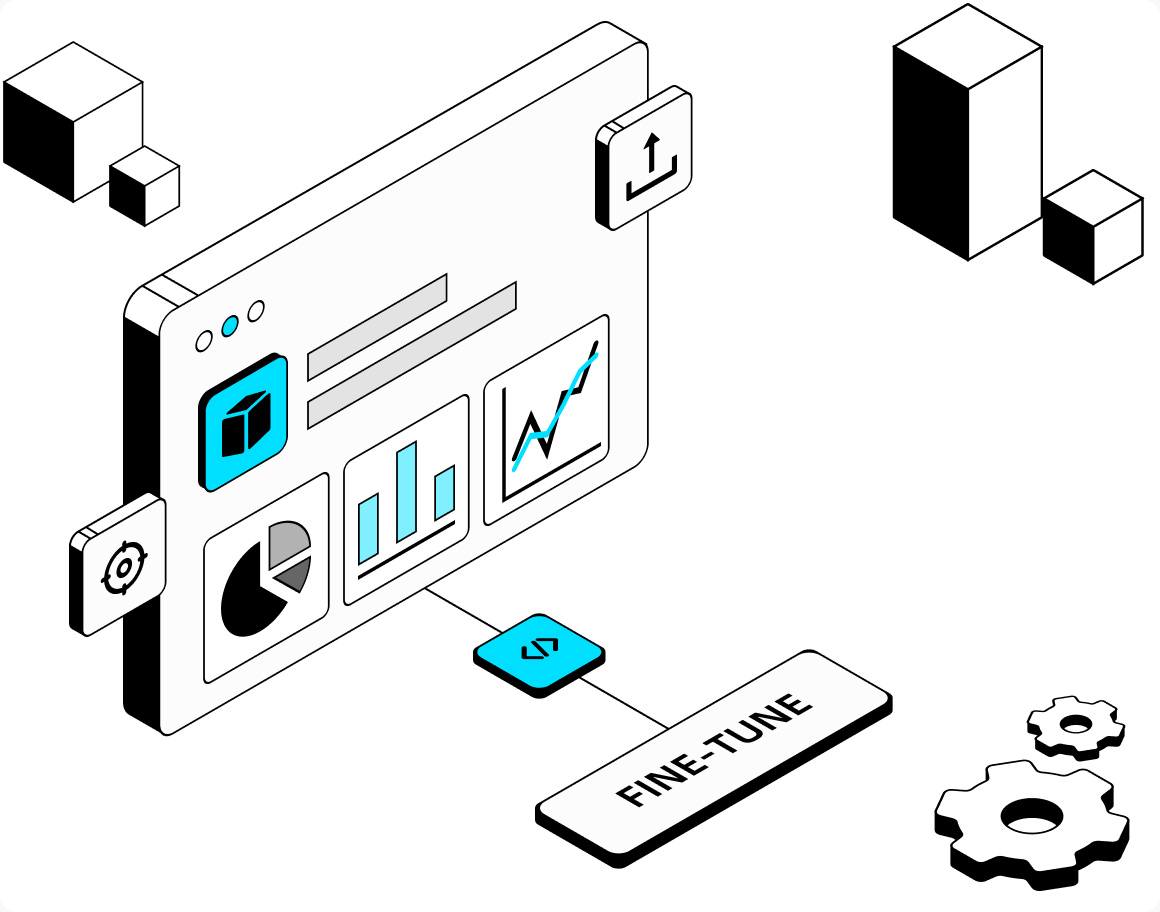

Make Any AI Model Your Own

Challenge:

Generic models fall short of your specific business needs.

WhaleFlux enables you to:

Accelerate fine-tuning with pre-built templates.

Manage and refine training data in one unified workspace.

Boost inference speed without sacrificing model quality.

Track fine-tuning progress and metrics in real time.

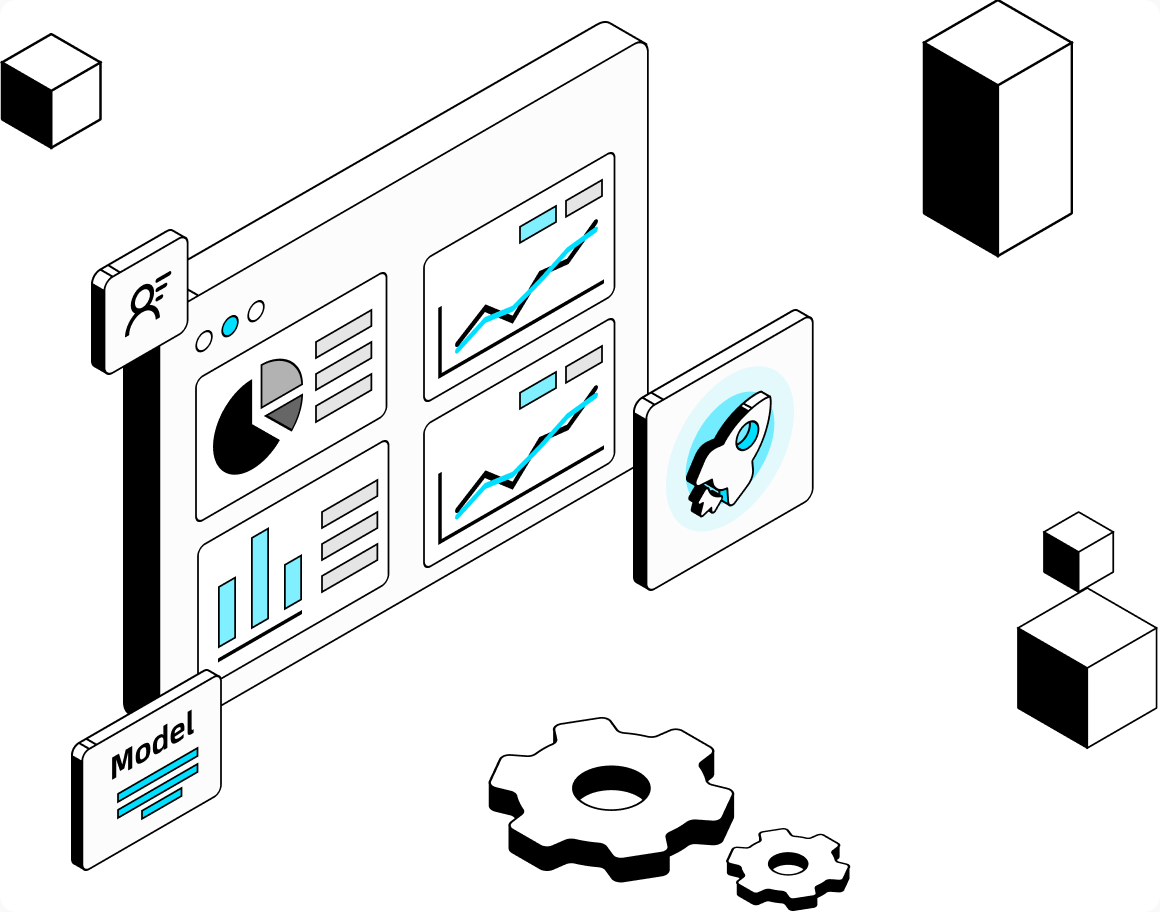

Deploy Models to Production with Ease

Challenge:

Complex deployments and lack of performance visibility.

WhaleFlux enables you to:

Deploy production-ready APIs instantly using customizable serving templates.

Ensure low-latency AI services that auto-scale to handle user demand.

Monitor API health, inference throughput, and GPU utilization.

Access granular reports and scale compute capacity based on live traffic.

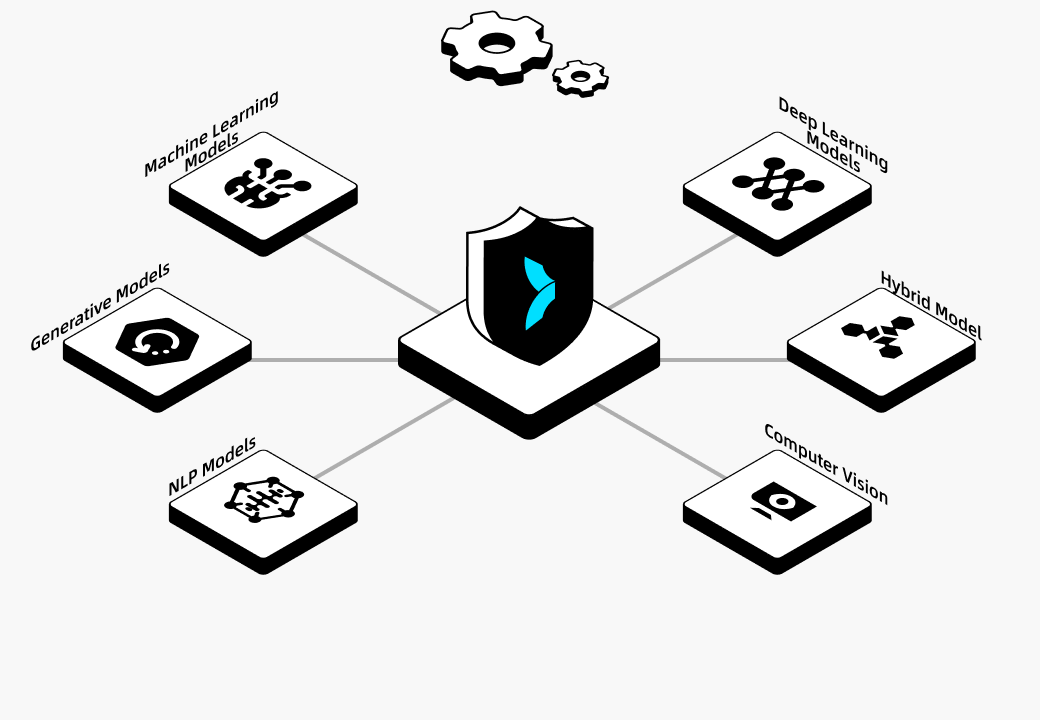

The Foundation for Your AI Success

We treat your security with the seriousness it deserves.

Your AI models and proprietary data are protected by enterprise-grade safeguards.

Unified & Seamless

An end-to-end platform where your data, models, and workloads flow seamlessly across every stage.

GPU-Optimized Efficiency

Intelligent orchestration that maximizes hardware utilization and minimizes compute costs.

Enterprise-Ready Reliability

Built for 24/7 production environments, backed by robust infrastructure and expert support.

Frequently Asked Questions

Everything you need to know about WhaleFlux AI Models.

Our platform supports a wide range of models, including popular open-source models (like Llama 3, Mistral), custom fine-tuned versions, and optimized quantized models. Manage them all in a centralized hub, regardless of their original framework.

Use our smart filtering to narrow models by parameters, tasks, and publishers. Leverage our automated evaluation suite to run benchmarks and compare multiple models side-by-side on your proprietary data, ensuring an objective, data-driven choice.

Beyond intelligent GPU scheduling, we provide integrated model quantization tools. These tools compress your models to lower inference latency and shrink their memory footprint, cutting GPU compute costs while keeping accuracy loss to a minimum.
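To make the memory-footprint point concrete, here is a rough back-of-the-envelope sketch in Python. It assumes a hypothetical 7-billion-parameter model and counts weights only (no activations, KV cache, or quantization metadata). The function name is illustrative and is not part of the WhaleFlux platform.

```python
def weight_memory_gb(num_params: float, bits_per_weight: int) -> float:
    """Approximate memory needed to hold model weights, in GB (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


# Hypothetical 7-billion-parameter model, chosen only for illustration.
params = 7e9

for label, bits in [("FP16", 16), ("INT8", 8), ("INT4", 4)]:
    print(f"{label}: {weight_memory_gb(params, bits):.1f} GB")
```

Under these assumptions, moving from 16-bit to 4-bit weights cuts weight storage to a quarter. Real-world savings and any accuracy impact depend on the quantization method and the model, so compare metrics before and after quantizing.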

Absolutely. Our fine-tuning module provides pre-configured templates for tasks like SFT and DPO, alongside intuitive dataset management tools. This allows you to efficiently create specialized models tailored to your business context, without requiring deep ML engineering expertise.

Security is our priority. We ensure strict job isolation to keep your workloads and data completely separated. We also offer robust access controls and private model repositories, giving you full control over your AI assets.

With our model serving templates, you can deploy fine-tuned or quantized models as stable, scalable API endpoints in just a few clicks. We provide comprehensive monitoring, logging, and auto-scaling to ensure your services run reliably 24/7.

Our centralized dataset management provides full version control. You can easily import, refine, and track different dataset versions, ensuring data consistency and reproducibility across all your fine-tuning experiments.

We maintain complete logs and automated checkpointing for all fine-tuning jobs. You can instantly review failure reasons and resume interrupted jobs from the last checkpoint, saving time and compute resources.

Every quantization job is tracked in our system. You can monitor real-time status, review logs, and compare pre- and post-quantization performance metrics to ensure your optimized models maintain the right balance of accuracy and efficiency.

Yes, our model evaluation dashboard allows you to select multiple models (base, fine-tuned, or quantized) and run comprehensive comparisons across key metrics like accuracy, latency, and throughput. Automated benchmarking reports provide clear, data-driven insights.