Build Trustworthy AI: The Critical Role of Your Centralized Knowledge Base

In the race to adopt generative AI, enterprises have encountered a sobering reality check. The very large language models (LLMs) that promise unprecedented efficiency and innovation also harbor a critical flaw: they can, with supreme confidence, present fiction as fact. These “hallucinations” or fabrications aren’t mere bugs; they are inherent traits of models designed to predict the next most plausible word, not to act as verified truth-tellers. For a business, the cost of an AI confidently misquoting a contract, inventing a product feature, or misstating a financial regulation is measured in lost trust, legal liability, and operational chaos.

This crisis of trust threatens to stall the transformative potential of AI. But the solution isn’t to abandon the technology. It’s to ground it in unshakeable reality. The path to Trustworthy AI does not start with a more complex model; it starts with a more organized, accessible, and authoritative foundation: your Centralized Knowledge Base.

The Trust Deficit: Why Raw LLMs Fall Short in Business

A raw, general-purpose LLM is a brilliant but untethered polymath. Its knowledge is broad, static, and fundamentally anonymous.

1. The Black Box of Training:

An LLM’s “knowledge” is a probabilistic amalgamation of its training data—a snapshot of the internet up to a certain date. You cannot ask it, “Where did you learn this?” or “Show me the source document.” This lack of provenance is anathema to business processes requiring audit trails and accountability.

2. The Static Mind:

The world moves fast. Market conditions shift, products iterate, and policies are updated. An LLM frozen in time cannot reflect current reality, making its outputs potentially obsolete or misleading the moment they are generated.

3. The Generalist Trap:

Your company’s value lies in its unique intellectual property—proprietary methodologies, nuanced customer agreements, specialized technical documentation. A generalist LLM has zero innate knowledge of this private universe, leading to generic or, worse, incorrect answers when asked domain-specific questions.

Trustworthy AI, therefore, must be knowledgeable, current, and specialized. It must provide answers that are not just plausible, but verifiably correct.

The Centralized Knowledge Base: The Cornerstone of AI Trust

Imagine if your AI, before answering any question, could consult a single, curated, and constantly updated library containing every piece of information critical to your business. This is the power of pairing AI with a Centralized Knowledge Base.

This is not merely a data dump. A true Centralized Knowledge Base for AI is:

1. Unified:

It aggregates siloed information from across the organization—Confluence wikis, SharePoint repositories, CRM records, ERP databases, Slack archives, and legacy document systems—into a single logical access point.

2. Structured for Retrieval:

Content is processed (cleaned, chunked) and indexed, often using vector embeddings, to allow for semantic search. This means the AI can find information based on meaning and intent, not just keyword matching.
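To make the "cleaned, chunked" step concrete, here is a minimal Python sketch. It uses word counts as a proxy for tokens, which is an assumption: production pipelines usually count tokens with the embedding model's own tokenizer. The `chunk_size` and `overlap` defaults are illustrative, not recommendations. Overlapping windows help keep a sentence that straddles a chunk boundary retrievable from at least one chunk.

```python
import re


def clean_text(raw: str) -> str:
    """Collapse runs of whitespace (newlines, tabs, spaces) and trim the ends."""
    return re.sub(r"\s+", " ", raw).strip()


def chunk_words(text: str, chunk_size: int = 200, overlap: int = 40) -> list[str]:
    """Split text into windows of `chunk_size` words.

    Consecutive windows share `overlap` words. Word counts stand in for
    token counts here (an assumption made for the sake of a short example).
    """
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("require chunk_size > 0 and 0 <= overlap < chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        # Stop once this window already reaches the end of the text
        if start + chunk_size >= len(words):
            break
    return chunks
```

For a ten-word text, `chunk_words(clean_text(raw), chunk_size=4, overlap=1)` yields three chunks, each sharing one word with its neighbour.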

3. Authoritative & Governed:

It represents the “single source of truth.” Governance protocols ensure that only approved, vetted information enters the base, and outdated content is deprecated. This curation is what separates a knowledge base from a data lake.
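The governance rule can be enforced as a gate in front of the indexer, so unapproved or lapsed content never becomes retrievable. This is a sketch under assumed conventions: the `status` values and the `review_due` field are hypothetical stand-ins for whatever workflow states your content system actually tracks.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Document:
    doc_id: str
    text: str
    status: str       # hypothetical workflow states: "draft", "approved", "deprecated"
    review_due: date  # hypothetical: date by which an owner must re-approve the content


def indexable(docs: list[Document], today: date) -> list[Document]:
    """Keep only approved documents whose review date has not lapsed."""
    return [d for d in docs if d.status == "approved" and d.review_due >= today]
```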

4. Dynamic:

It is connected to live data sources or has frequent update cycles, ensuring the AI’s foundational knowledge reflects the present state of the business.

The Technical Architecture: From Knowledge to Trusted Answer

This is where the technical magic happens, primarily through a pattern called Retrieval-Augmented Generation (RAG).

1. The Query:

An employee asks, “What is the escalation protocol for a Priority-1 outage in the EU region?”

2. The Retrieval:

The system queries the Centralized Knowledge Base. Using semantic search, it retrieves the most relevant, authoritative chunks of text—the latest incident response playbook, the specific EU compliance annex, and the relevant team contact list.

3. The Augmentation:

These retrieved documents are fed to the LLM as grounding context, alongside the original user question.

4. The Grounded Generation:

The LLM is now instructed: “Answer the user’s question based solely on the provided context below. Do not use your prior knowledge. Cite the source documents for your answer.”

This architecture flips the script. The LLM transitions from a generator of original content to a synthesizer and communicator of verified information. The trust shifts from the opaque model to the transparent, curated knowledge base.
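A minimal sketch of the augmentation step: retrieved chunks are numbered and placed ahead of the question, together with the grounding instruction from step 4, so the model can cite sources by number. The chunk dictionary keys (`source`, `text`) and the exact wording are assumptions for illustration; prompt formats vary by model and vendor.

```python
def build_grounded_prompt(question: str, chunks: list[dict]) -> str:
    """Assemble a prompt that restricts the LLM to the retrieved context.

    Each chunk is a dict with hypothetical keys "source" and "text".
    """
    # Number each chunk so the model can cite it as [1], [2], ...
    context = "\n\n".join(
        f"[{i}] ({c['source']})\n{c['text']}" for i, c in enumerate(chunks, start=1)
    )
    instructions = (
        "Answer the user's question based solely on the provided context below. "
        "Do not use your prior knowledge. Cite the source documents for your answer "
        "using their bracketed numbers, e.g. [1]."
    )
    return f"{instructions}\n\nContext:\n{context}\n\nQuestion: {question}\nAnswer:"
```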

The Implementation Challenge: It’s Not Just Software

Building this system at an enterprise scale is a significant undertaking. The challenges are multifaceted:

1. Data Integration:

Connecting and normalizing data from dozens of disparate, often legacy, systems.

2. Pipeline Engineering:

Creating robust, automated pipelines for ingestion, embedding, and indexing that can handle constant updates without breaking.

3. Performance at Scale:

A RAG system’s user experience hinges on speed. This requires executing two computationally heavy tasks in near real-time: high-speed semantic search across millions, and potentially billions, of vector embeddings, and LLM inference over a much longer input than the bare question (the original prompt plus the retrieved documents).

This final challenge—performance at scale—is where the rubber meets the road and where infrastructure becomes the critical enabler or blocker. Deploying and managing the embedding models and multi-billion parameter LLMs required for a responsive, trustworthy AI system demands immense, efficient, and reliable GPU compute power.

This is precisely the challenge that WhaleFlux is designed to solve. As an intelligent GPU resource management platform built for AI enterprises, WhaleFlux transforms complex infrastructure from a bottleneck into a strategic asset. It optimizes workloads across clusters of high-performance NVIDIA GPUs, including the flagship H100 and H200 for training and for serving the largest models, the data center workhorse A100, and the versatile RTX 4090 for development and inference. By maximizing GPU utilization and streamlining deployment, WhaleFlux ensures that the retrieval and generation steps of your RAG pipeline are fast, stable, and cost-effective. It provides the observability tools needed to monitor system health and the flexible resource provisioning (through purchase or tailored rental agreements) to scale your Trustworthy AI initiative with confidence. In essence, WhaleFlux provides the powerful, efficient, and manageable computational backbone required to turn the architectural blueprint of a knowledge-grounded AI into a high-performance, production-ready reality.

Use Cases: Trust in Action

When AI is anchored in a centralized knowledge base, it unlocks reliable, high-value applications:

1. Customer Support that Actually Helps:

Agents and chatbots provide answers directly from the latest technical manuals, warranty terms, and service bulletins, slashing resolution time and eliminating policy guesswork.

2. Compliant and Accurate Financial Reporting:

Analysts can query an AI to draft reports or summaries, with every statement grounded in the latest SEC filings, internal audit notes, and accounting standards, so that each claim is traceable for compliance review.

3. Onboarding and Expertise Transfer:

New hires interact with an AI that is an expert on internal processes, past project post-mortems, and cultural guidelines, dramatically accelerating proficiency and preserving institutional knowledge.

4. Legal & Contractual Safety:

Legal teams can use AI to review clauses or assess risk, with the model referencing the exact language of master service agreements, regulatory frameworks, and past case summaries stored in the knowledge base.

Conclusion: Trust is a Technical Achievement

Building trustworthy AI is not an abstract goal; it is a concrete engineering outcome. It is achieved by intentionally constructing a system where the LLM’s extraordinary ability to understand and communicate is deliberately constrained and guided by a definitive source of truth. Your Centralized Knowledge Base is that source.

It moves AI from being an entertaining but risky novelty to a reliable, accountable, and invaluable colleague. It transforms AI outputs from something you must skeptically fact-check into something you can inherently trust and act upon. In the age of AI, your competitive advantage will not come from using the biggest model, but from building the most trustworthy one. And that trust is built, byte by byte, in your knowledge base.

5 FAQs on Building Trustworthy AI with a Centralized Knowledge Base

1. What’s the difference between using a Centralized Knowledge Base with RAG versus fine-tuning an LLM on our documents?

Fine-tuning adjusts the style and biases of an LLM’s existing knowledge, teaching it to write or respond in a certain way. It is poor at adding new, specific factual knowledge and is static and expensive to update. RAG with a Centralized Knowledge Base dynamically retrieves and uses your specific facts at the moment of query. This makes RAG superior for ensuring accuracy, providing source citations, and handling constantly changing information, which is core to building trust.

2. How do we ensure the information in our Centralized Knowledge Base is itself accurate and maintained?

The knowledge base requires a governance layer separate from the AI. This involves: 1) Clear Ownership: Assigning data stewards for different domains (e.g., legal, product, support). 2) Defined Processes: Establishing workflows for submitting, reviewing, and publishing new content, and for archiving old content. 3) Integration with Source Systems: Where possible, directly pull from primary systems of record (e.g., CRM, official docs repo) to avoid copy/paste errors. The AI is only as trustworthy as the knowledge you feed it.

3. Is our data safe when used in such a system?

A properly architected RAG system with a centralized knowledge base can enhance security. Unlike sending data to a public API, this architecture can be deployed entirely within your private cloud or VPC. The knowledge base and AI models are accessed internally. Access controls from the knowledge base layer can also propagate, ensuring users only retrieve information they are authorized to see. Always verify your specific implementation meets your compliance standards (SOC 2, HIPAA, GDPR).
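One way to propagate permissions is to copy each source document's access list onto its chunks at ingestion time, then filter retrieval results against the requesting user's groups before anything reaches the prompt. The `allowed_groups` field is a hypothetical name, and this sketch denies access by default when a chunk carries no access list.

```python
def authorized_chunks(chunks: list[dict], user_groups: set[str]) -> list[dict]:
    """Drop any retrieved chunk the user is not entitled to see.

    Assumes each chunk carries a hypothetical "allowed_groups" list copied from
    the source system's ACL at ingestion time. A chunk with no list is treated
    as visible to nobody (deny by default).
    """
    return [c for c in chunks if user_groups & set(c.get("allowed_groups", []))]
```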

4. How do we measure the “trustworthiness” of our AI outputs?

Key metrics include: Citation Accuracy: Does the cited source actually support the generated answer? Answer Relevance: Does the answer directly address the query based on the context? Hallucination Rate: The percentage of answers containing unsupported factual claims. User Feedback: Direct thumbs up/down ratings on answer quality and correctness. Operational Metrics: Reduction in escalations or corrections needed in domains like customer support.
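Once a sample of answers has been labeled, the first metrics reduce to simple ratios. This sketch assumes hypothetical per-answer boolean labels (from human reviewers or an automated judge); it does not address how those labels are produced, which is usually the hard part.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class EvalRecord:
    # Hypothetical labels attached to one generated answer
    has_unsupported_claim: bool      # answer contains a claim not backed by the context
    citation_supports_answer: bool   # the cited source actually supports the answer
    thumbs_up: Optional[bool]        # user feedback; None if the user gave none


def trust_metrics(records: list[EvalRecord]) -> dict[str, float]:
    """Compute hallucination rate, citation accuracy, and positive-feedback rate."""
    n = len(records)
    if n == 0:
        raise ValueError("no evaluation records")
    # Feedback rate is computed only over answers the user actually rated
    rated = [r.thumbs_up for r in records if r.thumbs_up is not None]
    return {
        "hallucination_rate": sum(r.has_unsupported_claim for r in records) / n,
        "citation_accuracy": sum(r.citation_supports_answer for r in records) / n,
        "positive_feedback_rate": sum(rated) / len(rated) if rated else float("nan"),
    }
```

For four labeled answers of which one contains an unsupported claim, `hallucination_rate` is 0.25.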

5. Our POC works, but scaling the system is slow and expensive. How can WhaleFlux help?

Scaling a production-grade, knowledge-grounded AI system introduces massive computational demands for vector search and LLM inference. WhaleFlux directly addresses this by providing optimized, managed access to the necessary NVIDIA GPU power (like H100, A100 clusters). It eliminates infrastructure complexity, maximizes hardware utilization to lower cost per query, and provides the observability to ensure system stability. This allows your team to focus on refining the knowledge and application logic, while WhaleFlux ensures the underlying engine performs reliably and efficiently at scale.

How RAG Supercharges Your AI with a Live Knowledge Base

Imagine an AI that doesn’t just generate eloquent text based on its training data, but one that can instantly reference your company’s latest reports, answer specific questions about yesterday’s meeting notes, or provide accurate customer support based on real-time policy changes. This isn’t science fiction; it’s the reality made possible by Retrieval-Augmented Generation (RAG) powered by a live knowledge base. This powerful combination is transforming how enterprises deploy AI, moving from static, sometimes inaccurate chatbots to dynamic, informed, and trustworthy intelligent agents.

The Limitations of Traditional LLMs

Large Language Models (LLMs) are remarkable knowledge repositories, but they come with inherent constraints:

Static Knowledge:

Their knowledge is frozen at the point of their last training cut-off. They are oblivious to recent events, new products, or internal company developments.

Hallucinations:

When asked about information beyond their training data, they may generate plausible-sounding but incorrect or fabricated answers.

Lack of Source Grounding:

Traditional LLM responses don’t cite sources, making it difficult to verify the origin of the information, a critical requirement in business and legal contexts.

Domain Blindness:

Generic models lack deep, specific knowledge of proprietary internal data, industry jargon, or confidential company processes.

These limitations create significant risks and reduce the utility of AI for mission-critical business applications. This is where RAG comes to the rescue.

What is RAG and How Does It Work?

Retrieval-Augmented Generation (RAG) is a hybrid architecture that elegantly marries the creative and linguistic prowess of an LLM with the precision and dynamism of an external knowledge base.

Think of it as giving your AI a powerful, constantly-updated reference library and teaching it how to look things up before answering.

Here’s a simplified breakdown of the RAG process:

1. The Live Knowledge Base:

This is the cornerstone. It can be any collection of documents—PDFs, Word docs, Confluence pages, Slack channels, SQL databases, or real-time data streams. The key is that this base is live; it can be updated, amended, and expanded at any moment.

2. Indexing & Chunking:

The documents are broken down into manageable “chunks” (e.g., paragraphs or sections). These chunks are then converted into numerical representations called embeddings—dense vectors that capture the semantic meaning of the text. These embeddings are stored in a specialized, fast-retrieval database known as a vector database.
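Real embeddings come from a trained model, but the shape of the step (text in, fixed-length numeric vector out) can be shown with a stand-in. The sketch below uses a hashed bag-of-words vector, the "hashing trick", which is explicitly not a semantic embedding: it only captures shared words, so two paraphrases with no words in common would not come out close. `DIM = 64` is an arbitrary choice for the example. MD5 is used only as a stable bucket function, because Python's built-in `hash` of strings is randomized per process.

```python
import hashlib
import math

DIM = 64  # arbitrary for this toy; real embedding models output hundreds to thousands of dims


def toy_embed(text: str, dim: int = DIM) -> list[float]:
    """Hashed bag-of-words vector, L2-normalised.

    A stand-in for a real embedding model: it reflects word overlap only,
    not meaning, but shows the interface of the step.
    """
    vec = [0.0] * dim
    for word in text.lower().split():
        # Deterministic bucket for each word
        bucket = int(hashlib.md5(word.encode("utf-8")).hexdigest(), 16) % dim
        vec[bucket] += 1.0
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm else vec
```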

3. The Retrieval Step:

When a user asks a question (the query), it too is converted into an embedding. The system performs a lightning-fast similarity search across the vector database to find the chunks whose embeddings are most semantically relevant to the query.
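The similarity search itself can be sketched as an exact, brute-force scan ranked by cosine similarity. Production vector databases typically replace this linear scan with an approximate nearest-neighbour index to stay fast at millions of vectors; the function names and the dictionary-based index here are illustrative only.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def top_k(query_vec: list[float], index: dict[str, list[float]], k: int = 3) -> list[tuple[str, float]]:
    """Exact nearest-neighbour search: score every stored chunk, keep the best k."""
    scored = [(chunk_id, cosine(query_vec, vec)) for chunk_id, vec in index.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]
```

With the index `{"a": [1, 0], "b": [0, 1], "c": [0.7, 0.7]}` and the query `[1, 0.1]`, `top_k(..., k=2)` ranks chunk `"a"` first and `"c"` second.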

4. The Augmented Generation Step:

The retrieved, relevant text chunks are then packaged together with the original user query and fed into the LLM as context. The instruction to the LLM is essentially: “Using only the provided context below, answer the user’s question. If the answer is not in the context, say you don’t know.”
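The "say you don't know" instruction can be backed by a check in code: if no retrieved chunk clears a similarity threshold, the system abstains without calling the LLM at all. The `min_score` value of 0.75 is a hypothetical placeholder, since useful thresholds depend on the embedding model and must be tuned on your own data; `generate` stands in for whatever LLM call you use.

```python
from typing import Callable

NO_ANSWER = "I don't know based on the available documents."


def answer_or_abstain(
    scored_chunks: list[tuple[str, float]],
    generate: Callable[[list[str]], str],
    min_score: float = 0.75,  # hypothetical threshold; tune per embedding model
) -> str:
    """scored_chunks: (text, similarity) pairs from the retrieval step."""
    relevant = [text for text, score in scored_chunks if score >= min_score]
    if not relevant:
        # Nothing relevant was retrieved: abstain instead of letting the LLM guess
        return NO_ANSWER
    return generate(relevant)
```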

This elegant dance between retrieval and generation solves the core problems:

- Accuracy & Freshness: Answers are grounded in the live knowledge base, ensuring they are current and factual.

- Reduced Hallucinations: By constraining the LLM to the provided context, fabrications plummet.

- Source Citation: The system can easily provide references to the exact documents and passages used, building trust and enabling verification.

- Customization: The AI’s expertise is defined by the documents you provide, making it an instant expert on your unique domain.

Building Your Live Knowledge Base: The Technical Core

The “live” aspect of RAG is what makes it transformative for businesses. Implementing it requires careful consideration:

Data Ingestion Pipeline:

A robust, automated pipeline is needed to continuously ingest data from various sources (APIs, cloud storage, internal databases, web scrapers). Tools like Apache Airflow or Prefect can orchestrate this flow.
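A scheduled job (for example, a task run by an orchestrator such as Airflow or Prefect) needs to know what changed since its last run. Here is a minimal sketch, assuming you can list the current documents from each source and that the index records a SHA-256 hash of the text it last embedded; both assumptions are illustrative. Only new or edited documents are re-embedded, and documents that disappeared from the source are flagged for deletion so the AI stops citing them.

```python
import hashlib


def _sha256(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def plan_sync(current: dict[str, str], indexed_hashes: dict[str, str]) -> dict[str, list[str]]:
    """Compare source documents against what the index already holds.

    current: doc_id -> latest document text pulled from the source systems.
    indexed_hashes: doc_id -> SHA-256 of the text that was last embedded.
    """
    # New documents have no stored hash; edited ones have a different hash
    to_upsert = sorted(
        doc_id for doc_id, text in current.items()
        if indexed_hashes.get(doc_id) != _sha256(text)
    )
    # Documents still in the index but gone from the sources
    to_delete = sorted(set(indexed_hashes) - set(current))
    return {"upsert": to_upsert, "delete": to_delete}
```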

Embedding Models:

The choice of model (e.g., OpenAI’s text-embedding-ada-002, open-source models like BGE-M3 or Snowflake Arctic Embed) significantly impacts retrieval quality. It must align with your language and domain.

Vector Database:

This is the workhorse. Systems like Pinecone, Weaviate, or Milvus are built to handle millions of vectors and perform sub-second similarity searches, even under heavy load. They must support constant, real-time updates without performance degradation.
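Supporting constant updates mostly means handling replacement and deletion cleanly. The toy in-memory store below illustrates one convention: when a document changes, delete all of its old chunks before inserting the new ones, so stale chunks do not linger if the new version is shorter. It is not the API of Pinecone, Weaviate, or Milvus, each of which has its own upsert and delete operations; the class and method names are hypothetical.

```python
class InMemoryVectorStore:
    """Toy stand-in for a vector database, keyed by chunk id (names are hypothetical)."""

    def __init__(self) -> None:
        self._vectors: dict[str, list[float]] = {}
        self._doc_of: dict[str, str] = {}  # chunk id -> parent document id

    def upsert_document(self, doc_id: str, chunks: dict[str, list[float]]) -> None:
        # Remove the previous version's chunks first so none are left behind
        self.delete_document(doc_id)
        for chunk_id, vec in chunks.items():
            self._vectors[chunk_id] = vec
            self._doc_of[chunk_id] = doc_id

    def delete_document(self, doc_id: str) -> None:
        for chunk_id in [c for c, d in self._doc_of.items() if d == doc_id]:
            del self._vectors[chunk_id]
            del self._doc_of[chunk_id]

    def __len__(self) -> int:
        return len(self._vectors)
```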

The LLM:

The final generator. This can be a proprietary API (GPT-4, Claude) or a self-hosted open-source model (Llama 3, Mistral). The choice here balances cost, latency, data privacy, and control.

The Computational Challenge: Why RAG Demands Serious GPU Power

Running a live RAG system at enterprise scale is computationally intensive. The process is not a single API call but a cascade of operations:

Query Embedding:

Encoding the user’s question in real-time.

Vector Search:

A high-dimensional nearest-neighbor search across millions of vectors.

LLM Context Processing:

The generator LLM must now process a much larger input context (the original prompt plus the retrieved passages), which drastically increases the computational load compared to a simple query. This is where inference speed and stability become critical for user experience.
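A back-of-envelope calculation shows the scale of the change. The numbers here are hypothetical: a 30-token question, 5 retrieved chunks of 400 tokens each, and 50 tokens of grounding instructions.

```python
def augmented_prompt_tokens(question_tokens: int, k: int, chunk_tokens: int,
                            instruction_tokens: int = 0) -> int:
    """Input length the generator must process once k retrieved chunks are added."""
    return instruction_tokens + question_tokens + k * chunk_tokens


# Hypothetical sizes: 30-token question, 5 chunks x 400 tokens, 50 instruction tokens
total = augmented_prompt_tokens(30, 5, 400, 50)  # 2080
```

That is 2,080 input tokens, roughly 70 times the bare question, and the work the model does to read its input grows at least linearly with that length.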

Deploying and managing the necessary infrastructure—especially for the embedding models and the LLM—requires significant GPU resources. This is often the hidden bottleneck that slows down AI deployment and inflates costs.

This is precisely where a platform like WhaleFlux becomes a strategic accelerator.

WhaleFlux is an intelligent GPU resource management platform designed specifically for AI enterprises. It optimizes the utilization of multi-GPU clusters, allowing businesses to run demanding RAG workloads—from embedding generation to large-context LLM inference—more efficiently and cost-effectively. By intelligently orchestrating workloads across a fleet of powerful NVIDIA GPUs (including the latest H100, H200, and A100, as well as versatile options like the RTX 4090), WhaleFlux ensures your live knowledge base is not just smart, but also fast and reliable. It simplifies deployment, maximizes hardware efficiency, and provides the observability tools needed to keep complex AI systems running smoothly. For companies building mission-critical RAG systems, such infrastructure optimization is not a luxury; it’s a necessity for maintaining a competitive edge.

Real-World Superpowers: Use Cases

A RAG system with a live knowledge base unlocks transformative applications:

Dynamic Customer Support:

A support bot that instantly knows about the latest product update, a just-issued service bulletin, or a specific customer’s contract details, providing accurate, personalized answers.

Corporate Intelligence & Onboarding:

New employees can query an AI that knows all HR policies, recent project documentation, and team directories, drastically reducing ramp-up time.

Real-Time Financial & Market Analysis:

An analyst can ask, “Summarize the risks mentioned in our last five earnings call transcripts,” with the AI pulling and synthesizing information from the most recent documents.

Healthcare Diagnostics Support:

A system that augments a doctor’s knowledge by retrieving the latest medical research, clinical guidelines, and similar patient case histories in seconds.

Conclusion

RAG with a live knowledge base is more than a technical upgrade; it’s a paradigm shift for enterprise AI. It moves AI from being a gifted but unreliable storyteller to a precise, knowledgeable, and up-to-date expert consultant. It bridges the gap between the vast, static knowledge of pre-trained models and the dynamic, specific needs of a business.

While the architectural design is crucial, its real-world performance hinges on robust, scalable, and efficient computational infrastructure. Building this intelligent, responsive “second brain” for your AI requires not just smart software, but also powerful and wisely managed hardware. By combining the RAG architecture with a platform like WhaleFlux for optimal GPU resource management, enterprises can truly supercharge their AI initiatives, unlocking unprecedented levels of accuracy, relevance, and operational efficiency.

5 FAQs on RAG and Live Knowledge Bases

1. What’s the main advantage of RAG over just fine-tuning an LLM on my data?

Fine-tuning teaches the LLM how to speak in a certain style or about certain topics from your data, but it doesn’t reliably add new factual knowledge and is expensive to update. RAG, on the other hand, directly provides the LLM with the specific facts it needs from your live knowledge base at the moment of query. This makes RAG superior for dynamic information, source citation, and reducing hallucinations, as the model’s core knowledge isn’t altered.

2. How “live” can the knowledge base truly be?

The latency depends on your ingestion pipeline. If your system is connected to a real-time data stream (e.g., a news feed or transaction log), and your vector database supports real-time updates, the “retrieval” step can access information that was added milliseconds ago. For most business applications, updates on an hourly or daily basis are sufficiently “live” to provide a major advantage over static models.

3. Isn’t this just a fancy search engine?

It’s a significant evolution. A search engine returns a list of documents. A RAG system understands the question, finds the most relevant information within those documents, and then synthesizes a coherent, natural language answer based on that information. It completes the last mile from information retrieval to knowledge delivery.

4. What are the biggest challenges in building a production RAG system?

Key challenges include: designing an effective chunking strategy for your documents, ensuring the retrieval quality is high (poor retrieval leads to poor answers), managing the latency of the multi-step process, handling document updates and deletions in the vector index, and scaling the computationally expensive LLM inference to handle the augmented context prompts reliably.

5. How can WhaleFlux help in deploying and running such a system?

WhaleFlux addresses the core infrastructure challenges. Deploying the embedding models and LLMs required for a responsive RAG system demands powerful, scalable GPU resources. WhaleFlux optimizes the utilization of NVIDIA GPU clusters (featuring the H100, A100, and other high-performance models), ensuring your inference runs fast and stable while controlling cloud costs. Its platform provides the management, observability, and efficiency needed to take a RAG proof-of-concept into a high-traffic, mission-critical production environment.

Building a “Knowledge Base” It Can Actually Use

In the race to deploy powerful AI, many organizations focus overwhelmingly on model selection—scouring the latest benchmarks for the largest, most sophisticated large language model (LLM). Yet, even the most advanced model, when deployed in isolation, often disappoints. It hallucinates facts, struggles with domain-specific queries, and fails to leverage the organization’s most valuable asset: its proprietary data. The true differentiator isn’t just the model itself; it’s the specialized knowledge base you build for it.

Think of your AI as an immensely talented but generalist new hire. Without access to the company drive, past project reports, customer feedback logs, and technical manuals, its usefulness is severely limited. A knowledge base equips your AI with this context, transforming it from a generic chatterbox into a precise, informed, and reliable expert tailored to your business.

But building a knowledge base your AI can actually use—one that consistently delivers accurate, relevant, and actionable insights—is a significant engineering challenge. It’s more than just dumping documents into a folder. It requires a strategic architecture designed for machine understanding, seamless integration, and scalable performance.

The “Why”: Beyond the Hype of Raw Model Power

Why is a knowledge base non-negotiable?

Curbing Hallucinations:

LLMs are probabilistic pattern generators. Without grounded, verifiable sources, they confidently invent answers. A knowledge base provides the “source of truth” that the model can retrieve from, citing real documents and data, thereby dramatically improving accuracy and trustworthiness.

Enabling Domain Expertise:

Your competitive edge lies in what you know that others don’t. A knowledge base infused with your proprietary research, product specs, and internal processes allows your AI to operate at expert levels in your niche.

Dynamic Information Access:

Unlike static, fine-tuned models that become outdated, a well-architected knowledge base can be updated in near real-time. New pricing sheets, updated regulations, or the latest support tickets can be made instantly available to the AI.

Cost and Efficiency:

Constantly retraining or fine-tuning massive models on new data is prohibitively expensive and slow. A retrieval-augmented generation (RAG) approach, which pairs a model with a dynamic knowledge base, is a far more agile and cost-effective way to keep your AI current.

The “How”: Blueprint for an Actionable Knowledge Base

Building an effective system involves several key pillars:

1. Ingestion & Processing: From Chaos to Structure

This is the foundational step. You must gather data from all relevant sources: PDFs, Word docs, Confluence pages, Salesforce records, database exports, and even structured data from APIs. The magic happens in processing: chunking text into semantically meaningful pieces, extracting metadata (source, author, date), and converting everything into a unified format. The goal is to break down information silos and create a normalized pool of “knowledge chunks.”
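A sketch of structure-aware chunking with metadata attached: paragraphs (split on blank lines) are merged up to a size limit, and each chunk carries its source, author, date, and position so answers can cite it later. The field names and the `max_chars` limit are assumptions; note that a single paragraph longer than `max_chars` is kept whole here rather than split further.

```python
from dataclasses import dataclass, field


@dataclass
class Chunk:
    text: str
    metadata: dict = field(default_factory=dict)


def chunk_by_paragraph(doc_text: str, source: str, author: str, date: str,
                       max_chars: int = 1000) -> list[Chunk]:
    """Split on blank lines, merge short paragraphs up to max_chars, attach metadata."""
    paragraphs = [p.strip() for p in doc_text.split("\n\n") if p.strip()]
    texts, buf = [], ""
    for p in paragraphs:
        if buf and len(buf) + 2 + len(p) > max_chars:
            # Adding this paragraph would overflow: close the current chunk
            texts.append(buf)
            buf = p
        else:
            buf = f"{buf}\n\n{p}" if buf else p
    if buf:
        texts.append(buf)
    return [
        Chunk(text=t, metadata={"source": source, "author": author,
                                "date": date, "position": i})
        for i, t in enumerate(texts)
    ]
```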

2. Vectorization & Embedding: The Language of AI

For an AI to “understand” and retrieve text, it needs a numerical representation. This is where embedding models come in. Each text chunk is converted into a high-dimensional vector (a list of numbers) that captures its semantic meaning. Sentences about “quarterly sales targets” and “Q3 revenue goals” will have vectors that are mathematically close to each other in this “vector space,” even if the wording differs.
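"Mathematically close" is commonly measured with cosine similarity, the cosine of the angle between two embedding vectors $\mathbf{u}$ and $\mathbf{v}$ of dimension $d$:

```latex
\cos(\mathbf{u}, \mathbf{v})
  = \frac{\mathbf{u} \cdot \mathbf{v}}{\lVert \mathbf{u} \rVert \, \lVert \mathbf{v} \rVert}
  = \frac{\sum_{i=1}^{d} u_i v_i}{\sqrt{\sum_{i=1}^{d} u_i^{2}} \, \sqrt{\sum_{i=1}^{d} v_i^{2}}}
```

Scores range from -1 to 1, with higher meaning more similar. When vectors are normalized to unit length, the denominator is 1 and the score reduces to a plain dot product. Other measures, such as Euclidean distance, are also used; the right one depends on the embedding model.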

3. The Vector Database: The AI’s Memory Core

These vectors are stored in a specialized database optimized for similarity search—the vector database. When a user asks a question, that query is also vectorized. The database performs a lightning-fast search to find the stored vectors (knowledge chunks) that are most semantically similar to the query. This is the core retrieval mechanism.

4. Retrieval Augmented Generation (RAG): The Intelligent Synthesis

In a RAG pipeline, the retrieved relevant chunks are not the final answer. They are passed as context, along with the original user query, to the LLM (e.g., an OpenAI model or an open-source Llama 2/3 variant running in-house). The system instructs the model: “Using only the following context, answer the question…” The LLM then synthesizes a coherent, natural-language answer grounded in the provided sources. This combines the precision of retrieval with the linguistic fluency of generation.

5. Infrastructure: The Often-Overlooked Engine

This entire pipeline—running embedding models, querying vector databases, and hosting the inference engine for the LLM—demands serious, scalable computational power, particularly from GPUs. The embedding and inference stages are intensely parallelizable tasks that run orders of magnitude faster on GPUs. However, managing a multi-GPU cluster efficiently is a major operational hurdle. Under-provision, and your knowledge base responds sluggishly, crippling user experience. Over-provision, and you hemorrhage money on idle cloud GPUs. The stability of your GPU resources directly impacts the reliability and speed of your AI’s access to its knowledge.

Here is where a specialized tool can become a secret weapon in its own right. Consider WhaleFlux, a smart GPU resource management platform designed for AI enterprises. WhaleFlux optimizes the utilization of multi-GPU clusters, ensuring that the computational heavy-lifting behind your knowledge base—from embedding generation to LLM inference—runs efficiently and stably. By dynamically managing workloads across a fleet of NVIDIA GPUs (including the H100, H200, A100, and RTX 4090), it helps drastically reduce cloud costs while accelerating deployment cycles. WhaleFlux is more than just GPU management; it’s an integrated platform that also provides AI Model services, Agent frameworks, and Observability tools, offering a cohesive environment to build, deploy, and monitor sophisticated AI applications like a RAG-powered knowledge base. For companies needing tailored solutions, WhaleFlux further offers customized AI services, providing the flexible, powerful infrastructure foundation that makes advanced AI projects practically and economically viable.
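The under- versus over-provisioning trade-off described in this section can be framed as a simple sizing formula. This is a rough planning sketch under stated assumptions, not a WhaleFlux feature: `qps_per_gpu` must come from your own load tests of the embedding and LLM services, and `target_utilization` reserves headroom for traffic bursts.

```python
import math


def gpus_needed(peak_qps: float, qps_per_gpu: float, target_utilization: float = 0.7) -> int:
    """Back-of-envelope GPU count for one inference tier.

    qps_per_gpu: measured throughput of a single GPU (from your own load tests).
    target_utilization: fraction of that throughput you plan to use at peak.
    """
    if not 0 < target_utilization <= 1 or qps_per_gpu <= 0:
        raise ValueError("invalid capacity inputs")
    return math.ceil(peak_qps / (qps_per_gpu * target_utilization))
```

For example, a peak of 40 queries per second, a measured 6 per GPU, and a 70% utilization target gives `gpus_needed(40, 6, 0.7) == 10`.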

6. Continuous Iteration: The Feedback Loop

Launching is just the beginning. You need observability tools to monitor: What queries are failing? Which retrieved documents are rated as helpful? Where is the model still hallucinating? This feedback loop is essential for curating your knowledge base, refining chunking strategies, and improving overall system performance.

Best Practices for Success

- Start with a High-Value, Contained Domain: Don’t boil the ocean. Begin with a specific department’s knowledge (e.g., HR policies or product support) to prove value and iterate.

- Prioritize Data Quality: Garbage in, garbage out. Clean, well-structured source documents yield vastly better results than messy scans or inconsistent formats.

- Implement Robust Access Controls: Your knowledge base will contain sensitive information. The retrieval system must respect user permissions, ensuring individuals only access chunks they are authorized to see.

- Cite Your Sources: Always design your AI’s responses to explicitly reference the documents it used. This builds user trust and allows for easy verification.

Conclusion

Your AI’s ultimate capability is not determined solely by the model you license, but by the quality, architecture, and accessibility of the knowledge you connect it to. Building a dynamic, well-engineered knowledge base moves AI from a fascinating experiment to a core operational asset. It turns generic intelligence into proprietary expertise. By combining a strategic RAG architecture with a powerful and efficiently managed infrastructure—the kind that platforms like WhaleFlux enable—you provide your AI with the secret weapon it needs to truly deliver on its transformative promise. The future belongs not to the organizations with the biggest AI models, but to those who can most effectively teach their AI what they know.

FAQs: Building an AI-Powered Knowledge Base

Q1: What’s the main difference between fine-tuning an LLM and using a RAG (Retrieval-Augmented Generation) system with a knowledge base?

A: Fine-tuning adjusts the model’s internal weights on a specific dataset, making it better at a style or domain but “locking in” knowledge at the time of training. It’s expensive to update. RAG keeps the general model unchanged but dynamically retrieves relevant information from an external knowledge base for each query. This allows for real-time updates, provides source citations, and is generally more cost-effective for leveraging proprietary data.

Q2: We have terabytes of documents. Is building a knowledge base too expensive and complex?

A: The complexity and cost are front-loaded in the design and infrastructure phase. Start with a focused, high-ROI subset of data to validate the pipeline. The long-term operational cost, especially using an efficient RAG approach, is typically much lower than constantly fine-tuning large models. Strategic use of GPU resources, managed through platforms like WhaleFlux, is key to controlling inference costs and ensuring scalable performance as your knowledge base grows.

Q3: Can the knowledge base handle highly structured data (like databases) alongside unstructured documents?

A: Absolutely. A robust knowledge base architecture can ingest both. Structured data from SQL databases or APIs can be converted into descriptive text chunks (e.g., “Customer [ID] purchased [Product] on [Date]”). When vectorized, this allows the AI to answer precise, data-driven questions by retrieving these structured facts, seamlessly blending them with insights from PDFs or wikis.
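Here is a minimal sketch of that conversion. It assumes rows arrive as Python dictionaries with hypothetical `order_id`, `customer_id`, `product`, and `purchase_date` fields; in practice these would come from a SQL query or an API. Each record is rendered into a short sentence and then embedded like any other chunk. A source label is kept with each chunk so answers can cite the originating row.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Chunk:
    text: str
    source: str  # where the fact came from, kept for citations


def rows_to_chunks(rows, table_name):
    """Render each structured row as a descriptive sentence (hypothetical schema)."""
    chunks = []
    for row in rows:
        text = (
            f"Customer {row['customer_id']} purchased {row['product']} "
            f"on {row['purchase_date'].isoformat()} (order {row['order_id']})."
        )
        chunks.append(Chunk(text=text, source=f"{table_name}/{row['order_id']}"))
    return chunks


rows = [
    {"order_id": "O-1", "customer_id": "C-1001", "product": "Widget Pro",
     "purchase_date": date(2024, 3, 14)},
    {"order_id": "O-2", "customer_id": "C-1002", "product": "Widget Lite",
     "purchase_date": date(2024, 5, 2)},
]
for chunk in rows_to_chunks(rows, "orders"):
    print(chunk.text, "|", chunk.source)
```

The sentence template is a design choice. Phrasing facts the way users ask about them tends to help semantic matching, but the exact wording here is only illustrative.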

Q4: What kind of GPUs are necessary for running a private knowledge base system, and is buying or renting better?

A: The requirement depends on the scale of your knowledge base, user concurrency, and the size of the LLM used for inference. For production systems, NVIDIA GPUs like the A100 or H100 are common for their memory bandwidth and parallel processing power. The buy-vs-rent decision hinges on long-term usage patterns and capital expenditure strategy. Some integrated platforms offer flexible models. For instance, WhaleFlux provides access to a full suite of NVIDIA GPUs (including H100, H200, A100, and RTX 4090), allowing enterprises to procure or lease resources according to their specific needs, providing a middle path that prioritizes efficiency and control.
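You can see why model size drives the choice with a rough estimate of the memory needed just to hold the weights: parameter count times bytes per parameter. The sketch below makes the following assumptions:

- Weights are in FP16/BF16 (2 bytes each).
- Card sizes are the commonly published figures: 80 GB for the H100 and the 80 GB A100, 141 GB for the H200, and 24 GB for the RTX 4090.

It deliberately ignores the KV cache, activations, and framework overhead, which all add more, so treat the result as a lower bound rather than a sizing recommendation.

```python
import math

# Commonly published memory per GPU, in GB (A100 and H100 also ship in other sizes).
GPU_MEMORY_GB = {"H100": 80, "H200": 141, "A100-80GB": 80, "RTX 4090": 24}


def weight_memory_gb(num_params_billion, bytes_per_param=2):
    """Memory for the weights alone: 1e9 params x 1 byte is roughly 1 GB."""
    return num_params_billion * bytes_per_param


def min_gpus_for_weights(num_params_billion, gpu, bytes_per_param=2):
    """Lower bound on GPUs needed to hold the weights; ignores KV cache and activations."""
    needed = weight_memory_gb(num_params_billion, bytes_per_param)
    return math.ceil(needed / GPU_MEMORY_GB[gpu])


# Example: a 70-billion-parameter model in FP16 needs about 140 GB for weights alone.
for gpu in GPU_MEMORY_GB:
    print(gpu, min_gpus_for_weights(70, gpu))
```

In practice you need headroom beyond this bound for concurrent users, because the KV cache grows with context length and batch size.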

Q5: How does a tool like WhaleFlux specifically help a knowledge base project?

A: WhaleFlux addresses the critical infrastructure layer. It ensures that the GPU-intensive components of the knowledge base pipeline—embedding models and the LLM inference engine—run on optimally utilized, cost-effective NVIDIA GPU clusters. This directly translates to faster query response times, higher system stability under load, and lower cloud compute bills. Furthermore, as an integrated platform offering AI observability, it provides crucial monitoring tools to track the performance and accuracy of your knowledge base retrievals, creating a complete environment for development and deployment.

WhaleFlux Signals a Shift Toward Architecting Enterprise AI Systems as Enterprise AI Enters a New Phase in 2026

SAN FRANCISCO, Jan. 21, 2026 /PRNewswire/ — As enterprise AI adoption moves beyond experimentation and into production, the industry is entering a new phase where system reliability, governance, and long-term operability matter more than model performance alone. WhaleFlux today announced its positioning as an AI system builder, reflecting a broader shift in how organizations deploy AI at scale.

Over the past decade, rapid advances in foundation models have driven widespread AI experimentation. However, many enterprises now face a different bottleneck: building AI systems that can operate continuously within real-world constraints such as compliance, cost control, and operational stability. As a result, the focus is shifting from developing better models to engineering better systems.

From Model-Centric AI to System-Centric AI

WhaleFlux began as a GPU infrastructure management company and initiated a strategic expansion in early 2025 to address this emerging gap. Rather than focusing on standalone tools or individual models, the company has developed a system-level platform designed for long-running, workflow-oriented enterprise AI.

“At scale, AI systems fail not because models are weak, but because systems are fragile,” said Jolie Li, COO of WhaleFlux. “The challenge is no longer model development — it’s system engineering.”

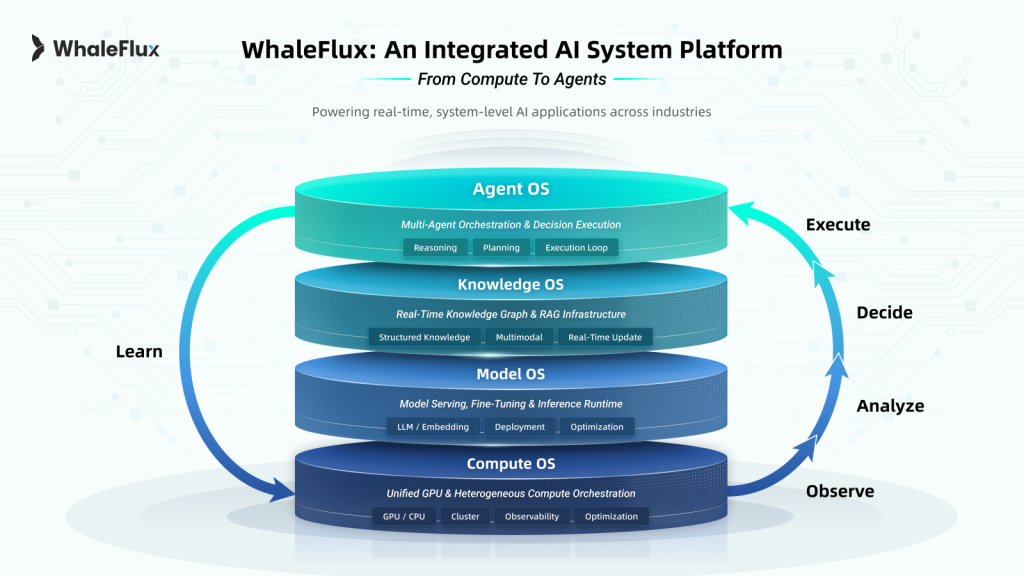

To support this transition, WhaleFlux consolidated its platform into a unified Compute–Model–Knowledge–Agent architecture, designed to provide enterprises with a stable foundation for production AI.

- Compute Layer — An autonomous scheduling and management engine for private GPU environments, enabling predictable performance, cost efficiency, and operational visibility across heterogeneous hardware.

- Model Layer — An optimized runtime environment for model serving, fine-tuning, and inference, ensuring the scalable deployment and optimization of LLMs and embeddings.

- Knowledge Layer — A secure enterprise knowledge foundation combining Retrieval-Augmented Generation (RAG) with structured access control, allowing AI agents to reason over private data while maintaining strict governance.

- Agent Layer — A workflow orchestration engine that enables multi-step, policy-aware execution, ensuring AI agents operate within predefined operational and compliance boundaries.

Together, these layers support AI workflows that are traceable, controllable, and designed to run reliably over time.

Validated in High-Stakes Industry Environments

Throughout 2025, WhaleFlux deployed its system architecture across regulated and mission-critical settings. In finance, institutional teams used on-premise AI agents for strategy evaluation and risk analysis while keeping sensitive data within private infrastructure. In healthcare, research institutions adopted federated learning workflows to enable collaborative pathology research without transferring patient data. In manufacturing, industrial producers applied AI-assisted modeling to complex chemical and reaction environments, improving visibility where traditional sensing methods are limited.

These deployments reflect growing demand for AI systems designed to operate under real-world constraints rather than controlled laboratory conditions. WhaleFlux also shared system-level insights at global industry events including NVIDIA GTC and GITEX Global.

Looking Ahead

As enterprises enter 2026, WhaleFlux expects AI adoption to increasingly shift toward agent-driven, workflow-oriented systems composed of multiple coordinated components. The company positions itself as an AI system builder, providing the architectural foundation enterprises use to design, deploy, and govern AI systems over time.

About WhaleFlux

Headquartered in San Francisco, WhaleFlux builds system platforms for enterprise AI environments. By integrating GPU compute scheduling, private knowledge management, and intelligent agent orchestration, WhaleFlux helps organizations transform AI capabilities into stable, production-ready systems.

For more information, visit www.whaleflux.com or follow us on LinkedIn.

Media Contact

Niki Yan

Head of Marketing, WhaleFlux

Email: niki@whaleflux.com

Website: www.whaleflux.com

Beyond Generic Answers: Connect ChatGPT to Your Own Knowledge Base

Have you ever pushed ChatGPT to its limits, asking for insights on your latest proprietary research, details from an internal company handbook, or analysis of a confidential project report, only to be met with a polite deflection or a confident-sounding fabrication? This universal frustration highlights the core boundary of public large language models: their knowledge is vast but generic, static, and utterly separate from the private, dynamic, and specialized information that powers your business.

The promise of AI is not just in conversing about publicly available facts but in amplifying our unique expertise. The critical question for businesses today is no longer if they should use AI, but how to make it meaningfully interact with their most valuable asset: their internal knowledge. The solution lies in moving beyond the generic chat interface and connecting a powerful language model like ChatGPT directly to your own knowledge base.

This process transforms AI from a brilliant generalist into a specialized, in-house expert. Imagine a customer support agent that instantly references the latest product spec sheets and resolved tickets, a legal assistant that cross-references thousands of past contracts in seconds, or a research analyst that synthesizes findings from decades of internal reports. This is not science fiction; it’s an achievable architecture powered by a paradigm called Retrieval-Augmented Generation (RAG).

Why “Just ChatGPT” Isn’t Enough for Business

ChatGPT, in its standard form, operates as a closed system. Its knowledge is frozen in time at its last training data cut-off. This presents several serious hurdles for professional use:

The Knowledge Cut-Off:

It is unaware of events, data, or documents created after its training period. Your latest annual report or this quarter’s strategy document simply does not exist to it.

The Hallucination Problem:

When asked about unfamiliar topics, LLMs may “confabulate” plausible yet incorrect information. In a business context, an invented financial figure or product feature is not just unhelpful—it’s dangerous.

Lack of Source Verification:

You cannot ask it to “show its work.” There are no citations, footnotes, or links back to original source material, which is essential for auditability, compliance, and trust.

Data Privacy & Security:

Sending sensitive internal data directly into a public API poses significant confidentiality risks. Your proprietary information should not become part of a model’s latent training data.

Simply put, asking a generic AI about your specific business is like asking a world-renowned chef to prepare a gourmet meal… but locking them out of your kitchen and pantry. You need to let them in.

The Bridge: How to Connect ChatGPT to Your Data

The technical architecture to build this bridge is elegant and has become the industry standard for building knowledgeable AI assistants. It revolves around RAG. Here’s a breakdown of how it works, translating the technical process into a clear, step-by-step workflow.

Step 1: Building Your Digital Library (Indexing)

Before any question can be answered, your unstructured knowledge—PDFs, Word docs, Confluence pages, database entries, Slack histories—must be organized into a query-ready format.

Chunking:

Documents are broken down into semantically meaningful pieces (e.g., paragraphs or sections). This is crucial; you can’t search a 100-page manual as a single block.
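Here is a minimal sketch of paragraph-based chunking. It assumes plain text in which blank lines separate paragraphs, and `max_chars` is an illustrative budget rather than a recommended value. Short neighboring paragraphs are merged until the budget is reached, so chunks are not too fragmentary.

```python
def chunk_by_paragraph(text, max_chars=500):
    """Split on blank lines, then pack consecutive paragraphs into chunks of <= max_chars.

    A single paragraph longer than max_chars is kept whole rather than cut mid-sentence.
    """
    paragraphs = [p.strip() for p in text.split("\n\n") if p.strip()]
    chunks, current = [], ""
    for para in paragraphs:
        candidate = f"{current}\n\n{para}" if current else para
        if len(candidate) <= max_chars or not current:
            current = candidate  # still fits (or is the first paragraph of a chunk)
        else:
            chunks.append(current)  # budget exceeded: close the current chunk
            current = para
    if current:
        chunks.append(current)
    return chunks


manual = "Intro text.\n\nInstall steps.\n\nTroubleshooting."
for chunk in chunk_by_paragraph(manual, max_chars=30):
    print(repr(chunk))
```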

Embedding:

Each text chunk is passed through an embedding model (like OpenAI’s own text-embedding-ada-002), which converts it into a high-dimensional vector. This vector is a numerical representation of the chunk’s semantic meaning. Think of it as creating a unique DNA fingerprint for the idea contained in the text.

Storage:

These vectors, alongside the original text, are stored in a specialized vector database (e.g., Pinecone, Weaviate, or PostgreSQL with the pgvector extension). This database is engineered for one task: lightning-fast similarity search.
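To make embedding and storage concrete without calling a real model, the sketch below swaps in a deliberately crude stand-in: a hashed bag-of-words vector (a hypothetical `toy_embed` function). It captures only word overlap, not meaning. A production system would call an embedding model instead and write to a vector database. The in-memory `VectorStore` class is likewise a hypothetical illustration of what such a database keeps: each vector stored alongside its original text.

```python
import math
import re
import zlib


def toy_embed(text, dim=64):
    """Stand-in for a real embedding model: hashed bag-of-words, L2-normalized.

    It only reflects shared words, not semantic meaning.
    """
    vec = [0.0] * dim
    for word in re.findall(r"[a-z0-9]+", text.lower()):
        # crc32 gives a stable bucket across runs (unlike Python's salted hash()).
        vec[zlib.crc32(word.encode()) % dim] += 1.0
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm else vec


class VectorStore:
    """Minimal in-memory store pairing each vector with its original text."""

    def __init__(self):
        self.records = []  # list of (vector, text) tuples

    def add(self, text):
        self.records.append((toy_embed(text), text))

    def __len__(self):
        return len(self.records)


store = VectorStore()
for chunk in ["Vacation requests need manager approval.", "Reset your VPN token every 90 days."]:
    store.add(chunk)
print(len(store), len(store.records[0][0]))  # 2 64
```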

Step 2: The Intelligent Look-Up (Retrieval)

When a user asks your custom AI a question (e.g., “What was the Q3 outcome for Project Phoenix?”), the following happens in milliseconds:

- The user’s query is instantly converted into a vector using the same embedding model.

- This query vector is sent to the vector database with an instruction: “Find the K (e.g., 5) most semantically similar vectors to this one.”

- The database performs a nearest neighbor search and returns the text chunks whose vector “fingerprints” are closest to the question’s fingerprint—the most relevant passages from your entire corpus.
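Retrieval reduces to a nearest-neighbor search. Here is a minimal brute-force sketch that assumes the chunks have already been embedded. The three-dimensional vectors are made-up placeholders, not the output of any real model. The code scores every stored vector by cosine similarity and keeps the top K. Vector databases use approximate indexes to avoid scanning everything, but they aim for the same result.

```python
import heapq
import math


def cosine(a, b):
    """Cosine similarity: dot(a, b) / (|a| * |b|)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query_vec, index, k=5):
    """Return the k (score, text) pairs most similar to query_vec, best first."""
    scored = ((cosine(query_vec, vec), text) for vec, text in index)
    return heapq.nlargest(k, scored, key=lambda pair: pair[0])


# Placeholder vectors standing in for real embeddings of each chunk.
index = [
    ([0.9, 0.1, 0.0], "Project Phoenix Q3 report: milestone reached."),
    ([0.1, 0.9, 0.0], "Office relocation schedule."),
    ([0.8, 0.2, 0.1], "Project Phoenix budget review."),
]
query = [1.0, 0.0, 0.0]  # placeholder embedding of the user's question
for score, text in top_k(query, index, k=2):
    print(f"{score:.3f}  {text}")
```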

Step 3: The Informed Answer (Augmented Generation)

Here is where ChatGPT (or a similar LLM) finally enters the picture, but now it’s fully briefed. The retrieved relevant text chunks are packaged into an enhanced prompt:

Answer the user’s question based solely on the following context.

If the answer cannot be found in the context, state clearly that you do not have that information.

Context:

{Retrieved Text Chunk 1}

{Retrieved Text Chunk 2}

…

Question: {User’s Original Question}

This prompt is sent to the LLM. The model, now “augmented” with the retrieved context, generates a coherent answer grounded in your provided sources rather than in its training data alone. The output can be designed to include citations (e.g., [Source 2]), creating full traceability.
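Assembling that prompt is plain string formatting. The sketch below shows one possible layout, not a required one. It numbers each retrieved chunk as `[Source n]` so the model can cite it. The `build_prompt` name and the exact wording of the instructions are illustrative assumptions.

```python
def build_prompt(question, chunks):
    """Assemble a grounded prompt; each chunk is labeled [Source n] for citation."""
    context = "\n\n".join(f"[Source {i}] {chunk}" for i, chunk in enumerate(chunks, start=1))
    return (
        "Answer the user's question based solely on the following context.\n"
        "If the answer cannot be found in the context, state clearly that you do not "
        "have that information. Cite the sources you use, e.g. [Source 2].\n\n"
        f"Context:\n\n{context}\n\n"
        f"Question: {question}"
    )


prompt = build_prompt(
    "What was the Q3 outcome for Project Phoenix?",
    ["Phoenix shipped its beta in August.", "Q3 revenue target was met."],
)
print(prompt)
```

The resulting string is then sent as the message to whichever chat model you use. Keeping the source numbering stable lets the application map citations back to documents afterward.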

The Infrastructure Imperative: It’s More Than Just Code

Building a robust, production-ready RAG system is a software challenge intertwined with a significant computational infrastructure challenge. The performance of the embedding model and the final LLM (like GPT-4) is critical to user experience. Slow retrieval or sluggish generation kills adoption.

This is where strategic GPU resource management becomes a core business differentiator, not an IT afterthought. Running high-throughput embedding models and large language models concurrently demands predictable, high-performance parallel computing. This typically requires dedicated access to powerful NVIDIA GPUs like the H100, A100, or RTX 4090 to ensure low-latency responses, especially under concurrent user loads.

However, simply provisioning GPUs is where costs can spiral and complexity blooms. Managing a cluster, optimizing utilization across the different stages of the RAG pipeline (embedding vs. LLM inference), ensuring stability, and controlling cloud spend are massive operational overheads for an AI engineering team.

This operational complexity is the exact problem WhaleFlux is designed to solve. WhaleFlux is an intelligent, all-in-one AI infrastructure platform that allows enterprises to move from experimental RAG prototypes to stable, scalable, and cost-efficient production deployments. By providing optimized management of multi-GPU clusters (featuring the full spectrum of NVIDIA GPUs, from the flagship H100 and H200 to the cost-effective A100 and RTX 4090), WhaleFlux ensures that the computational heart of your custom knowledge AI beats reliably. Its integrated suite, encompassing GPU Management, AI Model deployment, AI Agent orchestration, and AI Observability, means the entire pipeline can be monitored and tuned from a single pane of glass. For businesses looking to build a proprietary advantage, WhaleFlux also offers custom AI services to tailor the entire stack to specific needs, providing not just the tools but the expert partnership to deploy a knowledge-connected ChatGPT that truly reflects the unique intellectual capital of the organization.

Real-World Blueprints: What This Enables

This architecture unlocks transformative applications across every department:

Onboarding & HR:

A 24/7 assistant that answers questions about vacation policy, benefits, and IT setup, directly from the latest internal guides.

Enterprise Search:

A natural-language search engine across all internal wikis, documentation, and meeting notes. “Find all discussions about the Singapore market entry from last year.”

Customer Support:

Agents that have instant, cited access to the latest troubleshooting guides, product manuals, and engineering change logs.

Consulting & Legal:

Analysts who can instantly synthesize insights from a curated database of past client reports, case law, or regulatory filings.

Conclusion: From Generic Tool to Proprietary Partner

Connecting ChatGPT to your knowledge base is the definitive step from using AI as a novelty to embedding it as a core competency. It closes the gap between the model’s generalized intelligence and your organization’s specific wisdom. The technology stack—centered on RAG—is mature and accessible. The true differentiator for execution is no longer just the algorithm, but the ability to deploy and maintain the high-performance, scalable infrastructure it requires. By building this bridge, you stop asking generic questions and start building a proprietary intelligence that works for you.

FAQ: Connecting ChatGPT to Your Knowledge Base

Q1: What’s the difference between connecting ChatGPT via RAG and fine-tuning it on our data?

They serve different purposes. Fine-tuning adjusts the model’s internal weights to excel at a specific style or task format (e.g., writing emails in your company’s tone). RAG (Retrieval-Augmented Generation) provides the model with external, factual knowledge at the moment of query to answer specific content-based questions. For knowledge base access, RAG is preferred as it’s more dynamic (easy to update knowledge), traceable (provides sources), and avoids the risk of the model internalizing and potentially leaking sensitive data.

Q2: Is our data safe if we build this system?

With a properly architected private RAG system, your data remains under your control. Your documents are indexed in your own vector database (hosted on your cloud or private servers), and the LLM receives only the relevant text chunks at query time. With a self-hosted model, those chunks never leave your infrastructure. With the ChatGPT API, they are sent to OpenAI for processing, so review its data-usage and retention terms; OpenAI states that API data is not used for training by default. Choosing an infrastructure partner like WhaleFlux, which emphasizes secure, dedicated NVIDIA GPU clusters and private deployment models, makes it practical to self-host the model so that your data never leaves your governed environment.

Q3: How complex and resource-intensive is it to build and run this in production?

The initial prototype can be built relatively quickly with modern frameworks. However, moving to a low-latency, high-availability production system is complex. It involves managing multiple services (embedding models, vector databases, LLMs), optimizing for speed and accuracy (“chunking” strategy, query routing), and scaling infrastructure. This requires significant NVIDIA GPU resources for inference. Platforms like WhaleFlux dramatically reduce this operational burden by providing a unified platform for GPU management, model deployment, and observability, turning infrastructure complexity into a managed service.

Q4: Can we use a model other than ChatGPT for the generation step?

Absolutely. While the article uses “ChatGPT” as a familiar example, the RAG architecture is model-agnostic. You can use the OpenAI GPT API, Anthropic’s Claude, or powerful open-source models like Meta’s Llama 3 or Mistral AI’s models. The choice depends on factors like cost, latency, data privacy requirements, and desired performance. A platform like WhaleFlux is particularly valuable here, as its AI Model service simplifies the deployment and scaling of whichever LLM you choose on optimal NVIDIA GPU hardware.

Q5: We want to start with a pilot. What’s the first step, and how can WhaleFlux help?

Start by identifying a contained, high-value knowledge domain (e.g., your product FAQ or a specific department’s manual). The first steps are to gather those documents and prototype the RAG pipeline. WhaleFlux can accelerate this by providing immediate, hassle-free access to the right NVIDIA GPU resources (through rental or purchase plans) needed for development and testing. Their team can then help you design a scalable architecture and, using their custom AI services, assist in moving from a successful pilot to a full-scale, enterprise-wide deployment, managing the entire infrastructure lifecycle.

RAG Explained Simply: How AI “Looks Up” Answers in Your Documents

Have you ever asked a large language model (LLM) a question about a specific topic—like your company’s latest internal project report or a dense, 200-page technical manual—only to receive a confident-sounding but completely made-up answer? This common frustration, often called an “AI hallucination,” happens because models like ChatGPT are designed to generate fluent text based on their vast, static training data. They aren’t built to know your private, new, or specialized information.

But what if you could give an AI the ability to “look up” information in real-time, just like a skilled researcher would scan through a library of trusted documents before answering your question?

Enter Retrieval-Augmented Generation, or RAG. It’s a powerful architectural framework that is revolutionizing how businesses deploy accurate, trustworthy, and cost-effective AI. In simple terms, RAG gives an AI model a “search engine” and a “working memory” filled with your specific data, allowing it to ground its answers in factual sources.

The Librarian Analogy: From Black Box to Research Assistant

Imagine a traditional LLM as a brilliant, eloquent scholar who has memorized an enormous but fixed set of encyclopedias up to a certain date. Ask them about general knowledge, and they excel. Ask them about yesterday’s news, your company’s Q4 financials, or the details of an obscure academic paper, and they must guess or fabricate based on outdated or incomplete memory.

Now, imagine you pair this scholar with a lightning-fast, meticulous librarian. Your role is simple: you ask a question. The librarian (the retrieval system) immediately sprints into a vast, private archive of your choosing—your documents, databases, manuals, emails—and fetches the most relevant pages or passages. They hand these pages to the scholar (the generation model), who now synthesizes the provided information into a clear, coherent, and—crucially—source-based answer.

That is RAG in a nutshell. It decouples the model’s knowledge from its reasoning, breaking the problem into two efficient steps: first, find the right information; second, use it to formulate the perfect response.

Why RAG? The Limitations of “Vanilla” LLMs

To appreciate RAG’s value, we must understand the core challenges of standalone LLMs:

Static Knowledge:

Their world ends at their last training cut-off. They are unaware of recent events, new products, or your private data.

Hallucinations:

When operating outside their trained domain, they tend to “confabulate” plausible but incorrect information, a critical risk for businesses.

Lack of Traceability:

You cannot easily verify why an LLM gave a particular answer, posing audit and compliance challenges.

High Cost of Specialization:

Continuously re-training or fine-tuning a giant model on new data is computationally prohibitive, slow, and expensive for most organizations.

RAG elegantly solves these issues by making the model’s source material dynamic, verifiable, and separate from its core parameters.

How RAG Works: A Three-Act Play

Deploying a RAG system involves three stages: Indexing (done up front and repeated whenever documents change), Retrieval, and Generation.

Act 1: Indexing – Building the Knowledge Library

This is the crucial preparatory phase. Your raw documents (PDFs, Word docs, web pages, database entries) are processed into a searchable format.

- Chunking: Documents are split into manageable “chunks” (e.g., paragraphs or sections). Getting the chunk size right is an art—too small loses context, too large dilutes relevance.

- Embedding: Each text chunk is converted into a numerical representation called a vector embedding. This is done using an embedding model, which encodes semantic meaning into a long list of numbers (a vector). Think of it as creating a unique “fingerprint” for the idea expressed in that text. Semantically similar chunks will have similar vector fingerprints.

- Storage: These vectors, along with their original text, are stored in a specialized database called a vector database. This database is optimized for one thing: finding the closest vector matches to a given query at incredible speed.
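Chunk size is usually tuned together with an overlap, so that a sentence cut at one boundary still appears intact in the neighboring chunk. Here is a minimal word-based sketch. Real pipelines often count model tokens rather than words, and the sizes used are arbitrary values to experiment with, not recommendations.

```python
def sliding_window_chunks(text, size=200, overlap=40):
    """Split text into chunks of `size` words, each sharing `overlap` words with the previous one."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be non-negative and smaller than size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the last window already reaches the end of the text
    return chunks


sample = " ".join(f"w{i}" for i in range(10))
for chunk in sliding_window_chunks(sample, size=4, overlap=1):
    print(chunk)
```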

Act 2: Retrieval – The Librarian’s Sprint

When a user asks a question:

- The user’s query is instantly converted into its own vector embedding using the same model from the indexing phase.

- This query vector is sent to the vector database with a command: “Find the ‘K’ most similar vectors to this one.” This is typically done via a mathematical operation called nearest neighbor search.

- The database returns the text chunks whose vectors are closest to the query vector—the most semantically relevant passages from your entire library.
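Written out, "closest" is usually measured by cosine similarity; some systems use dot product or Euclidean distance instead. Here $\mathbf{q}$ is the query embedding and $\mathcal{D}$ the set of stored chunk embeddings. Retrieval returns the K chunks that score highest:

```latex
\operatorname{sim}(\mathbf{q}, \mathbf{d}) = \frac{\mathbf{q} \cdot \mathbf{d}}{\lVert \mathbf{q} \rVert \, \lVert \mathbf{d} \rVert},
\qquad
\operatorname{TopK}(\mathbf{q}) = \operatorname*{arg\,max}_{S \subseteq \mathcal{D},\ |S| = K} \ \sum_{\mathbf{d} \in S} \operatorname{sim}(\mathbf{q}, \mathbf{d})
```

When all vectors are normalized to unit length, the denominator equals 1 and cosine similarity reduces to the plain dot product.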

Act 3: Generation – The Scholar’s Synthesis

The retrieved relevant chunks are now packaged together with the original user query and fed into the LLM (like GPT-4 or an open-source model like Llama 3) as a prompt. The prompt essentially instructs the model: “Based only on the following context information, answer the question. If the answer isn’t in the context, say so.”

The LLM then generates a fluent, natural-language answer that is directly grounded in the provided sources. The final output can often include citations, allowing users to click back to the original document.
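If the prompt labels chunks as `[Source n]` (an illustrative convention, not a standard), the application can map the model's citations back to documents after generation. The sketch below assumes a hypothetical `sources` list that records which document each numbered chunk came from. Citations to numbers that were never supplied are flagged rather than silently trusted.

```python
import re

CITATION = re.compile(r"\[Source (\d+)\]")


def resolve_citations(answer, sources):
    """Map [Source n] markers in the answer to 1-based entries of `sources`.

    Returns (cited_documents, invalid_numbers); invalid numbers point past the supplied chunks.
    """
    cited, invalid = [], []
    for num in dict.fromkeys(int(m) for m in CITATION.findall(answer)):  # dedupe, keep order
        if 1 <= num <= len(sources):
            cited.append(sources[num - 1])
        else:
            invalid.append(num)
    return cited, invalid


sources = ["handbook.pdf#p12", "it-guide.docx#vpn", "benefits-2024.pdf#p3"]
answer = "VPN tokens expire every 90 days [Source 2]; see also [Source 2] and [Source 5]."
print(resolve_citations(answer, sources))
```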

The Tangible Benefits: Why Businesses Are Racing to Adopt RAG

Accuracy & Reduced Hallucinations:

Answers are tied to source documents, substantially lowering (though not eliminating) the rate of fabrication.

Dynamic Knowledge:

Update your AI’s knowledge by embedding new documents and adding them to the vector database, with no model retraining required.
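Because knowledge lives in the index rather than in the model's weights, an update is an index operation. The sketch below uses a hypothetical in-memory `KnowledgeIndex` keyed by document ID. The `embed` argument stands in for whatever embedding model you use; here it is a trivial placeholder so the example runs. Re-ingesting a revised document replaces its old chunks, and deleting a document removes them, with no retraining involved.

```python
from typing import Callable, Dict, List, Tuple

Vector = List[float]


class KnowledgeIndex:
    """In-memory index keyed by document ID (stand-in for a vector database)."""

    def __init__(self, embed: Callable[[str], Vector]):
        self.embed = embed
        self.docs: Dict[str, List[Tuple[Vector, str]]] = {}

    def upsert(self, doc_id: str, chunks: List[str]) -> None:
        # Replace every chunk of this document so stale text cannot be retrieved.
        self.docs[doc_id] = [(self.embed(c), c) for c in chunks]

    def delete(self, doc_id: str) -> None:
        self.docs.pop(doc_id, None)

    def all_chunks(self) -> List[str]:
        return [text for entries in self.docs.values() for _, text in entries]


def placeholder_embed(text: str) -> Vector:
    # Placeholder only: a real system calls an embedding model here.
    return [float(len(text))]


index = KnowledgeIndex(placeholder_embed)
index.upsert("pricing-policy", ["Discounts above 10% need VP approval."])
index.upsert("pricing-policy", ["Discounts above 15% need VP approval."])  # revised doc
print(index.all_chunks())
```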

Transparency & Trust:

Source citations build user trust and enable fact-checking, which is vital for legal, medical, or financial applications.

Cost-Effectiveness:

It’s far more efficient to update a vector database than to retrain a multi-billion parameter LLM. It also allows you to use smaller, faster models effectively, as you provide them with the necessary specialized knowledge.

Security & Control:

Knowledge remains in your controlled database. You can govern access, redact sensitive chunks, and audit exactly what information was used in a response.
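One common way to govern access is to store permission metadata with each chunk and filter on it before anything reaches the model. The sketch below assumes each chunk carries a hypothetical `allowed_groups` set and that the user's group memberships come from your identity system. It also records which sources informed each response, for auditing.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class Chunk:
    text: str
    source: str
    allowed_groups: Set[str] = field(default_factory=set)


def visible_chunks(chunks: List[Chunk], user_groups: Set[str]) -> List[Chunk]:
    """Keep only chunks whose allowed groups intersect the user's groups.

    Chunks with no groups are treated as restricted (deny by default).
    """
    return [c for c in chunks if c.allowed_groups & user_groups]


def audit_record(user: str, used: List[Chunk]) -> dict:
    """Log exactly which sources informed a response."""
    return {"user": user, "sources": [c.source for c in used]}


chunks = [
    Chunk("Holiday calendar for 2025.", "hr/calendar.pdf", {"all-staff"}),
    Chunk("Executive compensation bands.", "hr/comp.xlsx", {"hr-admins"}),
]
allowed = visible_chunks(chunks, {"all-staff", "engineering"})
print(audit_record("alice", allowed))
```

Apply the filter inside the retrieval query rather than after top-K; otherwise the filter can leave fewer than K results. Many vector databases support metadata filters for this purpose.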

Where RAG Shines: Real-World Applications

RAG is not a theoretical concept; it’s powering real products and services today:

- Enterprise Chatbots: Internal assistants that answer questions about HR policies, software documentation, or project histories.

- Customer Support: Agents that pull answers from product manuals, knowledge bases, and past support tickets to resolve issues instantly.

- Legal & Compliance: Tools that help lawyers search through case law, contracts, and regulations in natural language.

- Research & Development: Accelerating literature reviews by querying across thousands of academic papers and technical reports.

Powering the RAG Engine: The Critical Role of GPU Infrastructure

A RAG system’s performance—its speed, scalability, and reliability—hinges on robust computational infrastructure. The two most demanding stages are embedding generation and LLM inference.

Creating high-quality vector embeddings for millions of document chunks and running low-latency inference with a powerful LLM are both computationally intensive tasks that require potent, parallel processing power. This is where access to dedicated, high-performance NVIDIA GPUs becomes a strategic advantage, not just a technical detail. The parallel architecture of GPUs like the NVIDIA H100, A100, or even the powerful RTX 4090 is perfectly suited for the matrix operations at the heart of AI inference and embedding generation.

However, for an enterprise running mission-critical RAG applications, simply having GPUs isn’t enough. They need to be managed, optimized, and scaled efficiently. This is precisely the challenge that WhaleFlux is designed to solve.

WhaleFlux is an intelligent GPU resource management platform built for AI-driven enterprises. It goes beyond basic provisioning to optimize the utilization of multi-GPU clusters, ensuring that the computational engines powering your RAG system—from embedding models to large language models—run at peak efficiency. By dynamically allocating and managing NVIDIA GPU resources (including the latest H100, H200, and A100 series), WhaleFlux helps businesses significantly reduce cloud costs while dramatically improving the deployment speed and stability of their AI applications. For a complex, multi-component system like a RAG pipeline—which might involve separate models for retrieval and generation running concurrently—WhaleFlux’s ability to orchestrate and monitor these workloads across a unified platform is invaluable. It provides the essential infrastructure layer that turns powerful GPU hardware into a reliable, scalable, and cost-effective AI factory.

Related FAQs

1. Do I always need a vector database to build a RAG system?

While a vector database is the standard and most efficient tool for the retrieval stage due to its optimized similarity search capabilities, it is technically possible to use other methods (like keyword search with BM25) for simpler applications. However, for any system requiring semantic understanding—where a query like “strategies for reducing customer turnover” should match documents discussing “client retention tactics”—a vector database is the industry-standard and recommended choice.
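For comparison, the keyword alternative is easy to sketch. Below is a compact implementation of Okapi BM25 scoring. It uses the non-negative IDF variant used by Lucene and the typical default parameters `k1=1.2` and `b=0.75`, both of which are tunable. Note what it cannot do: a query about "reducing customer turnover" scores zero against a document that only talks about "client retention," which is exactly the gap semantic retrieval fills.

```python
import math
import re
from collections import Counter


def tokenize(text):
    return re.findall(r"[a-z0-9]+", text.lower())


def bm25_scores(query, docs, k1=1.2, b=0.75):
    """Okapi BM25 score of each doc for the query (non-negative IDF variant)."""
    if not docs:
        return []
    tokenized = [tokenize(d) for d in docs]
    n_docs = len(tokenized)
    avgdl = sum(len(t) for t in tokenized) / n_docs or 1.0  # avoid dividing by zero
    doc_freq = Counter(term for t in tokenized for term in set(t))
    query_terms = set(tokenize(query))
    scores = []
    for tokens in tokenized:
        tf = Counter(tokens)
        score = 0.0
        for term in query_terms:
            if term not in tf:
                continue  # exact-term matching only: synonyms contribute nothing
            idf = math.log(1 + (n_docs - doc_freq[term] + 0.5) / (doc_freq[term] + 0.5))
            norm = tf[term] + k1 * (1 - b + b * len(tokens) / avgdl)
            score += idf * tf[term] * (k1 + 1) / norm
        scores.append(score)
    return scores


docs = [
    "Client retention tactics that keep enterprise accounts.",
    "Reducing customer turnover with loyalty programs.",
    "Quarterly office supply budget.",
]
print(bm25_scores("strategies for reducing customer turnover", docs))
```

Many production systems run both keyword and vector retrieval and merge the rankings, an approach usually called hybrid search.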

2. How is RAG different from fine-tuning an LLM on my documents?

They are complementary but distinct approaches. Fine-tuning retrains the model’s internal weights to change its behavior and style, making it better at a specific task (like writing in your brand’s tone). RAG provides the model with external, factual knowledge at the time of query. The best practice is often to use RAG for accurate, source-grounded knowledge and combine it with a fine-tuned model for perfect formatting and tone.

3. What are the main challenges in implementing a production RAG system?

Key challenges include: Chunking Strategy (finding the optimal document split for preserving context), Retrieval Quality (ensuring the system retrieves the most relevant and complete information, handling multi-hop queries), and Latency (managing the combined speed of retrieval and generation to keep user wait times low). This last challenge is where GPU performance and management platforms like WhaleFlux become critical, as they directly impact the inference speed and overall responsiveness of the system.

4. How can WhaleFlux specifically help with deploying and running a RAG application?

WhaleFlux provides the integrated infrastructure backbone for the demanding components of a RAG pipeline. Its AI Model service can streamline the deployment and scaling of both the embedding model and the final LLM. Its GPU management core ensures these models have dedicated, optimized access to NVIDIA GPU resources (like H100 or A100 clusters) for fast inference. Furthermore, AI Observability tools allow teams to monitor the performance, cost, and health of each stage (retrieval and generation) in real-time, identifying bottlenecks and ensuring reliability. For complex deployments, WhaleFlux’s support for custom AI services means the entire RAG pipeline can be packaged and managed as a unified, scalable application.

5. We’re considering building a proof-of-concept RAG system. What’s the first step with WhaleFlux?

The first step is to define your performance requirements and scale. Contact the WhaleFlux team to discuss your projected needs: the volume of documents to index, the expected query traffic, and your choice of LLM. WhaleFlux will then help you select and provision the right mix of NVIDIA GPU resources (from the H100 for massive-scale deployment to cost-effective RTX 4090s for development) on a rental plan that matches your project timeline. Their platform simplifies the infrastructure setup, allowing your data science and engineering teams to focus on perfecting the RAG logic—chunking, prompt engineering, and evaluation—rather than managing servers and clusters.

From Data to Dialogue: Turning Static Files into an Interactive Knowledge Base with RAG

Imagine this: a new employee, tasked with preparing a compliance report, spends hours digging through shared drives, sifting through hundreds of PDFs named policy_v2_final_new.pdf, and nervously cross-referencing outdated wiki pages. Across the office, a seasoned customer support agent scrambles to find the latest technical specification to answer a client’s urgent query, bouncing between four different databases.

This chaotic scramble for information is the daily reality in countless organizations. Companies today are data-rich but insight-poor. Their most valuable knowledge—product manuals, internal processes, research reports, meeting notes—lies trapped in static files, inert and inaccessible. Traditional keyword-based search fails because it doesn’t understand context or meaning; it only finds documents that contain the exact words you typed.

The solution is not more documents or better filing systems. It’s a fundamental transformation: turning that passive archive into an interactive, conversational knowledge base. This shift is powered by a revolutionary AI architecture called Retrieval-Augmented Generation (RAG). In essence, RAG provides a bridge between your proprietary data and the powerful reasoning capabilities of large language models (LLMs). It doesn’t just store information; it understands it, reasons with it, and delivers it through natural dialogue.

This article will guide you through the journey from static data to dynamic dialogue. We’ll demystify how RAG works, explore its transformative benefits, and examine how integrated platforms are making this powerful technology accessible for every enterprise.

The Problem with the “Static” in Static Files

Traditional knowledge management systems are built on a paradigm of storage and recall. Data is organized in folders, tagged with metadata, and retrieved via keyword matching. This approach has critical flaws in the modern workplace:

Lack of Semantic Understanding:

Searching for “mitigating financial risk” won’t find a document that discusses “hedging strategies” unless those exact keywords are present.

No Synthesis or Summarization:

The system returns a list of documents, not an answer. The cognitive burden of reading, comparing, and synthesizing information remains entirely on the human user.

The “Hallucination” Problem with Raw LLMs:

One might think to simply ask a public LLM like ChatGPT instead. However, these models were never trained on your private data, and when asked about it they are prone to inventing plausible-sounding but incorrect information, a phenomenon known as “hallucination”.
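To make the first limitation concrete, here is a minimal Python sketch of naive keyword matching. The `keyword_search` function and the two sample documents are hypothetical illustrations, not part of any particular search product; real keyword engines add stemming and ranking, but they still depend on the query and the document sharing vocabulary.

```python
def keyword_search(query: str, documents: dict[str, str]) -> list[str]:
    """Return IDs of documents containing every query word (naive AND match)."""
    terms = query.lower().split()
    return [
        doc_id
        for doc_id, text in documents.items()
        if all(term in text.lower().split() for term in terms)
    ]


# Hypothetical sample documents for illustration only.
documents = {
    "treasury_policy.pdf": "Our hedging strategies use forward contracts to offset currency exposure.",
    "travel_policy.pdf": "Employees must book travel through the approved portal.",
}

print(keyword_search("mitigating financial risk", documents))  # -> []
print(keyword_search("hedging strategies", documents))  # -> ['treasury_policy.pdf']
```

The treasury policy is exactly what the first query is about, yet it is invisible to a search that only compares words.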

How RAG Brings Your Data to Life: A Three-Act Process

RAG solves these issues by orchestrating a structured, three-step exchange between your data and an AI model. Think of it as giving the LLM a super-powered, instantaneous research assistant that only consults your approved sources.

Act 1: The Intelligent Librarian (Retrieval)

When you ask a question, such as “What’s the process for approving a vendor contract over $50k?”, the RAG system doesn’t guess. First, it transforms your question into a mathematical representation (a vector embedding) that captures its semantic meaning. It then searches a pre-processed vector database of your company documents for the text chunks whose embeddings are most similar to the question’s. This isn’t keyword search; it’s semantic search, so it can find relevant passages even if they use different terminology.
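The retrieval step can be sketched as cosine-similarity ranking over precomputed vectors. This is a minimal, hedged illustration: the three-dimensional vectors and chunk IDs below are invented stand-ins for what an embedding model and vector database would produce, and a production system would typically use an approximate-nearest-neighbour index rather than a linear scan.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def top_k(query_vec: list[float], index: list[tuple[str, list[float]]], k: int = 2) -> list[str]:
    """Rank (chunk_id, vector) pairs by similarity to the query; return the best k IDs."""
    scored = [(cosine_similarity(query_vec, vec), chunk_id) for chunk_id, vec in index]
    scored.sort(reverse=True)
    return [chunk_id for _, chunk_id in scored[:k]]


# Toy 3-dimensional "embeddings" (hypothetical values). A real system would get
# these from an embedding model and store them in a vector database.
index = [
    ("procurement_sop#approvals", [0.9, 0.1, 0.0]),
    ("procurement_sop#thresholds", [0.7, 0.3, 0.1]),
    ("holiday_calendar#2024", [0.0, 0.1, 0.9]),
]
# Stands in for the embedding of "What's the process for approving a vendor contract over $50k?"
query_vec = [0.8, 0.2, 0.0]

print(top_k(query_vec, index))  # -> ['procurement_sop#approvals', 'procurement_sop#thresholds']
```

With these toy values, both procurement chunks outrank the unrelated calendar chunk purely on vector similarity; no word matching is involved.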

Act 2: The Contextual Briefing (Augmentation)

The most relevant retrieved text chunks are then packaged together. This curated, factual context is what “augments” the next step. It ensures the AI’s response is grounded in your actual documentation.
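A minimal sketch of the augmentation step, assuming the retriever returns chunks already sorted by relevance as dictionaries with hypothetical `source` and `text` keys. Numbering each chunk gives the model something to cite, and the character budget is a rough stand-in for the model's token limit.

```python
def build_context(chunks: list[dict], max_chars: int = 2000) -> str:
    """Concatenate retrieved chunks, most relevant first, each labeled with its source.

    Stops adding chunks once the next one would exceed the character budget
    (separators between blocks are not counted), a crude stand-in for a token budget.
    """
    parts = []
    used = 0
    for i, chunk in enumerate(chunks, start=1):
        block = f"[{i}] (source: {chunk['source']})\n{chunk['text']}"
        if used + len(block) > max_chars:
            break
        parts.append(block)
        used += len(block)
    return "\n\n".join(parts)


# Hypothetical retrieved chunks for illustration.
retrieved = [
    {"source": "procurement_sop.pdf, p. 4", "text": "Contracts above $50,000 require CFO sign-off."},
    {"source": "procurement_sop.pdf, p. 5", "text": "Legal must review all new vendor agreements."},
]
print(build_context(retrieved))
```

Putting the most relevant chunks first means that, when the budget runs out, it is the weakest evidence that gets dropped.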

Act 3: The Expert Communicator (Generation)

Finally, this context is fed to an LLM alongside your original question, with a critical instruction: “Answer the question based solely on the provided context.” The LLM then synthesizes a clear, concise, natural-language answer that cites the source documents. This grounding substantially reduces hallucinations and makes every answer verifiable against the passages it came from, which is what makes the output accurate, relevant, and trustworthy.
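The generation step mostly comes down to the prompt. The sketch below assumes the context is a set of chunks numbered [1], [2], and so on; the template wording and the `citations_are_valid` helper are illustrative assumptions, and the actual LLM call is omitted because it depends on your model and provider. Checking that every cited number refers to a supplied chunk is one cheap guard against fabricated citations.

```python
import re

# Hypothetical prompt wording; tune it for your model.
PROMPT_TEMPLATE = """Answer the question based solely on the provided context.
Cite the sources you use by their bracketed numbers, e.g. [1].
If the context does not contain the answer, reply "I don't know."

Context:
{context}

Question: {question}
Answer:"""


def build_prompt(question: str, context: str) -> str:
    """Fill the template; the result is what would be sent to the LLM."""
    return PROMPT_TEMPLATE.format(context=context, question=question)


def citations_are_valid(answer: str, num_sources: int) -> bool:
    """True if the answer cites at least one source and every [n] is in range."""
    cited = {int(n) for n in re.findall(r"\[(\d+)\]", answer)}
    return bool(cited) and all(1 <= n <= num_sources for n in cited)


print(citations_are_valid("Contracts over $50k need CFO sign-off [1].", num_sources=2))
```

A refusal such as "I don't know." contains no citations, so in practice it would be detected and handled before this check.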

Table: The RAG Pipeline vs. Traditional Search

| Aspect | Traditional Keyword Search | RAG-Powered Knowledge Base |
| --- | --- | --- |
| Core Function | Finds documents containing specific words. | Understands questions and generates answers based on meaning. |
| Output | A list of links or files for the user to review. | A synthesized, conversational answer with source citations. |
| Knowledge Scope | Limited to pre-indexed keywords and tags. | Dynamically leverages the entire semantic content of all uploaded documents. |
| User Effort | High (must manually review results). | Low (receives a direct answer). |
| Accuracy for Complex Queries | Low (misses conceptual connections). | High (understands context and intent). |

Beyond Basic Q&A: The Evolving Power of RAG

The core RAG pattern is just the beginning. Advanced implementations are solving even more complex challenges:

Handling Multi-Modal Data:

Next-generation systems can process and reason across not just text, but also tables, charts, and images within documents, creating a truly comprehensive knowledge base.

Multi-Hop Reasoning:

For complex questions, advanced RAG frameworks can perform “multi-hop” retrieval. They break down a question into sub-questions, retrieve information for each step, and logically combine them to arrive at a final answer.
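A minimal sketch of the multi-hop loop, under simplifying assumptions: the sub-questions are supplied as templates (in practice an LLM would generate them by decomposing the user's question), and `retrieve` returns a short answer directly rather than passages for a model to read. The office, manager name, and approval limit are invented example data.

```python
from typing import Callable, Optional


def multi_hop_answer(
    sub_question_templates: list[str],
    retrieve: Callable[[str], Optional[str]],
) -> tuple[Optional[str], list[tuple[str, Optional[str]]]]:
    """Answer a chain of sub-questions, feeding each answer into the next.

    Templates may contain a {prev} placeholder for the previous hop's answer.
    Returns the final answer plus the (sub-question, answer) trail for auditing.
    """
    prev: Optional[str] = None
    trail = []
    for template in sub_question_templates:
        sub_q = template.format(prev=prev)
        prev = retrieve(sub_q)
        trail.append((sub_q, prev))
    return prev, trail


# Hypothetical knowledge base: a dict lookup stands in for retrieval + extraction.
facts = {
    "Who manages the Berlin office?": "Dana Weber",
    "What is the contract approval limit for Dana Weber?": "$250,000",
}
answer, trail = multi_hop_answer(
    ["Who manages the Berlin office?", "What is the contract approval limit for {prev}?"],
    facts.get,
)
print(answer)  # -> $250,000
```

Keeping the trail makes each intermediate hop visible, so a reviewer can see which fact supported which step.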

From Knowledge Graph to “GraphRAG”:

Some of the most effective systems now combine vector search with knowledge graphs. These graphs explicitly model the relationships between entities (e.g., “Product A uses Component B manufactured by Supplier C”). This allows precise reasoning about connections within the data, moving beyond text similarity to following explicit, traceable relationships.
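A knowledge graph can be as simple as a list of (subject, relation, object) triples. The sketch below, in plain Python, follows a chain of relations from one entity; the relation names and the extra Component D / Supplier E entries are hypothetical. In a GraphRAG-style system, facts found this way would typically be added to the LLM's context alongside vector-retrieved text.

```python
from collections import defaultdict

# Hypothetical triples; Component D and Supplier E are invented for illustration.
triples = [
    ("Product A", "uses", "Component B"),
    ("Component B", "manufactured_by", "Supplier C"),
    ("Product A", "uses", "Component D"),
    ("Component D", "manufactured_by", "Supplier E"),
]

# Index edges by (entity, relation) for quick lookup.
graph: dict[tuple[str, str], list[str]] = defaultdict(list)
for subj, rel, obj in triples:
    graph[(subj, rel)].append(obj)


def follow(start: str, path: list[str]) -> list[str]:
    """Return every entity reachable from `start` by following the relations in `path` in order."""
    frontier = [start]
    for rel in path:
        frontier = [obj for node in frontier for obj in graph.get((node, rel), [])]
    return frontier


print(follow("Product A", ["uses", "manufactured_by"]))  # -> ['Supplier C', 'Supplier E']
```

Answering “Which suppliers does Product A depend on?” becomes a two-hop traversal (uses, then manufactured_by), and each hop is an explicit edge that can be shown to the user as evidence.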

The Engine for Dialogue: Infrastructure Matters

Creating a responsive, reliable, and scalable interactive knowledge base is not just a software challenge—it’s an infrastructure challenge. The RAG pipeline, especially when using powerful LLMs, is computationally intensive. This is where a specialized AI infrastructure platform becomes critical.

Consider WhaleFlux, a platform designed specifically for enterprises embarking on this AI journey. WhaleFlux addresses the core infrastructure hurdles that can slow down or derail a RAG project:

Unified AI Service Platform:

WhaleFlux integrates the essential pillars for deployment: intelligent GPU resource management, model serving, AI agent orchestration, and observability tools. This eliminates the need to stitch together disparate tools from different vendors.

Optimized Performance & Cost:

At its core, WhaleFlux is a smart GPU resource management tool. It optimizes utilization across clusters of NVIDIA GPUs (including the H100, H200, A100, and RTX 4090 series), ensuring your RAG system has the compute power it needs for fast inference without over-provisioning and wasting resources. This directly lowers cloud costs while improving the speed and stability of model deployments.

Simplified Lifecycle Management:

From deploying and fine-tuning your chosen AI model (whether open-source or proprietary) to building sophisticated AI agents that leverage your new knowledge base, WhaleFlux provides a cohesive environment. Its observability suite is crucial for monitoring accuracy, tracking which documents are being retrieved, and ensuring the system performs reliably at scale.

From Concept to Conversation: Getting Started

Transforming your static files into a dynamic knowledge asset may seem daunting, but a practical, phased approach makes it manageable:

1. Start with a High-Value, Contained Use Case:

Don’t boil the ocean. Choose a specific team (e.g., HR, IT support) or a critical document set (e.g., product compliance manuals) for your pilot.

2. Curate and Prepare Your Knowledge:

The principle of “garbage in, garbage out” holds true. Begin with well-structured, high-quality documents. Clean PDFs, structured wikis, and organized process guides yield the best results.

3. Choose Your Path: Platform vs. Build:

You can assemble an open-source stack (using tools like Milvus for vector search and frameworks like LangChain), or leverage a low-code/no-code application platform like WhaleFlux that abstracts away much of the complexity. The platform approach significantly accelerates time-to-value and reduces maintenance overhead.

4. Iterate Based on Feedback:

Launch your pilot, monitor interactions, and gather user feedback. Use this to refine retrieval settings, add missing knowledge, and improve prompt instructions to the LLM.
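Step 2 usually includes splitting documents into chunks before embedding them, and chunk size and overlap are among the retrieval settings you will tune in step 4. Here is a minimal word-based chunker; the defaults of 200 words with a 40-word overlap are arbitrary starting points, not recommendations from any particular tool, and many pipelines split on tokens or document structure (headings, paragraphs) instead.

```python
def chunk_words(text: str, chunk_size: int = 200, overlap: int = 40) -> list[str]:
    """Split text into chunks of up to `chunk_size` words.

    Consecutive chunks share `overlap` words, so a sentence cut at a chunk
    boundary still appears with some surrounding context in the next chunk.
    """
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be >= 0 and smaller than chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # the last chunk already reaches the end of the text
    return chunks


sample = " ".join(f"w{i}" for i in range(10))
print(chunk_words(sample, chunk_size=4, overlap=1))
```

Larger chunks carry more context per retrieval hit but dilute the embedding; smaller ones are more precise but may split an answer across chunks, which is why this is worth revisiting once real user questions come in.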

The transition from static data to dynamic dialogue is more than a technological upgrade; it’s a cultural shift towards democratized expertise. An interactive knowledge base powered by RAG ensures that every employee can access the organization’s collective intelligence on demand and accurately. It turns information from a cost center (something that takes time to find) into a strategic asset that drives efficiency, consistency, and innovation. The technology, led by architectures like RAG and powered by robust platforms, is ready. The question is no longer if you should build this capability, but how quickly you can start the conversation.

FAQs

1. What kind of documents work best for creating an interactive knowledge base with RAG?

Well-structured text-based documents like PDFs, Word files, Markdown wikis, and clean HTML web pages yield the best results. The system excels with manuals, standard operating procedures (SOPs), research reports, and curated FAQ sheets. While it can process scanned documents, they require an OCR (Optical Character Recognition) step first.

2. How does RAG ensure the AI doesn’t share inaccurate or confidential information from our documents?

RAG controls the AI’s output by grounding it in the documents you provide: the model is instructed to answer only from the retrieved context, which greatly reduces (though cannot fully guarantee against) answers drawn from its general training data. Furthermore, a proper enterprise platform includes access controls and permissions, ensuring that sensitive documents are only retrieved and used to answer queries from authorized personnel.
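One common way to enforce such permissions is to filter candidate chunks by the user's groups before ranking, so restricted text never reaches the prompt. This is a minimal sketch; the `Chunk` class and its `allowed_groups` field are hypothetical, and real platforms typically apply the filter inside the vector database query itself.

```python
from dataclasses import dataclass, field


@dataclass
class Chunk:
    text: str
    source: str
    # Groups allowed to read this chunk; an empty set means nobody (deny by default).
    allowed_groups: set[str] = field(default_factory=set)


def authorized_chunks(chunks: list[Chunk], user_groups: set[str]) -> list[Chunk]:
    """Keep only chunks that share at least one group with the user."""
    return [c for c in chunks if c.allowed_groups & user_groups]


# Hypothetical chunks for illustration.
chunks = [
    Chunk("2024 salary bands by level.", "hr/compensation.pdf", {"hr"}),
    Chunk("How to configure the VPN client.", "it/vpn.md", {"all_staff"}),
]
print([c.source for c in authorized_chunks(chunks, {"all_staff"})])  # -> ['it/vpn.md']
```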

3. Is it very expensive and technical to build and run a RAG system?

The cost and complexity spectrum is wide. While a custom-built, large-scale system requires significant technical expertise, the emergence of low-code application platforms and managed AI infrastructure services has dramatically lowered the barrier. These platforms handle much of the underlying complexity (vector database management, model deployment, scaling) and offer more predictable operational pricing, allowing teams to start with a focused pilot without a massive upfront investment.

4. We update our documents frequently. How does the knowledge base stay current?

A well-architected RAG system supports incremental updating. When a new document is added or an existing one is edited, the system can process just that file, generate new vector embeddings, and update the search index without needing a full, time-consuming rebuild of the entire knowledge base. This allows the interactive assistant to provide answers based on the latest information.
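A minimal sketch of how a system might decide what to re-index, assuming it records a content hash for each document at indexing time. The function names and return shape are hypothetical; the actual chunking, embedding, and vector-store writes are left out.

```python
import hashlib


def content_hash(text: str) -> str:
    """Stable fingerprint of a document's content."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def plan_incremental_update(
    current_docs: dict[str, str], indexed_hashes: dict[str, str]
) -> tuple[list[str], list[str], dict[str, str]]:
    """Compare current documents against the hashes recorded at last indexing.

    Returns (to_embed, to_delete, new_hashes): IDs that are new or changed and
    must be re-chunked and re-embedded, IDs that disappeared and must be removed
    from the vector index, and the hashes to record after the update.
    Unchanged documents appear in neither list, so they are never reprocessed.
    """
    current_hashes = {doc_id: content_hash(text) for doc_id, text in current_docs.items()}
    to_embed = sorted(d for d, h in current_hashes.items() if indexed_hashes.get(d) != h)
    to_delete = sorted(set(indexed_hashes) - set(current_hashes))
    return to_embed, to_delete, current_hashes


indexed = {
    "a.md": content_hash("old text"),
    "b.md": content_hash("unchanged"),
    "c.md": content_hash("retired"),
}
current = {"a.md": "new text", "b.md": "unchanged", "d.md": "brand new"}
to_embed, to_delete, _ = plan_incremental_update(current, indexed)
print(to_embed, to_delete)  # -> ['a.md', 'd.md'] ['c.md']
```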

5. Can we use our own proprietary AI model with a RAG system, or are we locked into a specific one?

A key advantage of flexible platforms is model agnosticism. You are typically not locked in. You can choose to use a powerful open-source model (like Llama or DeepSeek), a commercial API (like OpenAI or Anthropic), or even a model you have fine-tuned internally. The platform’s role is to provide the GPU infrastructure and serving environment to run your model of choice efficiently and reliably.
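Model agnosticism is largely an interface decision: if the RAG pipeline depends only on a narrow "generate text from a prompt" contract, any backend can be plugged in. The sketch below uses a Python `Protocol`; `ChatModel`, `EchoModel`, and `answer` are hypothetical names, and real backends would wrap a self-hosted model server or a vendor SDK behind the same `generate` method.

```python
from typing import Protocol


class ChatModel(Protocol):
    """Anything that can turn a prompt into text can serve as the generator."""

    def generate(self, prompt: str) -> str: ...


class EchoModel:
    """Hypothetical stand-in backend, useful for testing the pipeline wiring offline."""

    def generate(self, prompt: str) -> str:
        return f"(echo) {prompt[:40]}"


def answer(question: str, context: str, model: ChatModel) -> str:
    # The pipeline only depends on ChatModel.generate, not on any vendor SDK.
    prompt = f"Answer based solely on this context:\n{context}\n\nQuestion: {question}"
    return model.generate(prompt)


print(answer("Who approves contracts over $50k?", "[1] The CFO approves them.", EchoModel()))
```

Swapping an open-source model for a commercial API, or for an internally fine-tuned model, then means writing one small adapter class rather than changing the retrieval and prompting code.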

How RAG Supercharges Your AI with a Live Knowledge Base

In the race to build truly useful enterprise AI, a silent but significant shift is underway. The initial wave of AI applications, powered by large language models (LLMs) alone, has hit a reliability ceiling. The Achilles’ heel—their static nature and tendency to “hallucinate”—has made them risky for high-stakes business decisions. The solution emerging as the new industry standard isn’t a single technology, but a powerful partnership: Retrieval-Augmented Generation (RAG) paired with a Live Knowledge Base.

Part 1: The Limitations of the Solo Act

To appreciate the duo, we must understand the shortcomings of the solo performer: the standalone LLM.

A model like GPT-4 is a masterpiece of pattern recognition, trained on a vast but frozen snapshot of the internet. Its knowledge is monolithic and static. Asking it about your company’s Q4 sales targets, the latest bug fixes in your software, or yesterday’s customer feedback is futile—it simply wasn’t trained on that data. Even when it has relevant knowledge, it operates as a “black box,” generating answers by predicting the most statistically likely next word, not by referencing verifiable facts. This leads to two critical problems:

- The Hallucination Problem: The AI confidently invents information, mixing real facts with plausible fiction, especially dangerous when dealing with proprietary or precise data.

- The Knowledge Expiry Problem: The world moves faster than any training cycle. A model trained on data up to 2023 is oblivious to everything since—market shifts, new regulations, internal policy updates.

This is where RAG enters as the essential first partner.

Part 2: RAG: The Bridge to External Truth

Retrieval-Augmented Generation (RAG) provides the crucial mechanism to ground an LLM in factual reality. The process is elegant:

- Retrieve: When a user asks a question, the system queries a separate, external database of information (your documents, wikis, reports) to find the most relevant chunks of text.

- Augment: These retrieved text snippets are formatted as “context” or “source material.”

- Generate: The LLM is then instructed: “Answer the user’s question based solely on this provided context.” This simple directive steers the model to anchor its response in the retrieved facts, dramatically reducing hallucinations.

RAG solves the grounding problem. But for this system to be powerful, the database it queries must be more than a static repository—it must be alive.

Part 3: The Live Knowledge Base: The Beating Heart

This is the second, equally vital member of the duo. A Live Knowledge Base is not just a digital filing cabinet. It is a dynamic, continuously updated ecosystem of an organization’s intelligence. It ingests information from a multitude of living sources:

- Real-time Data Streams: CRM updates (Salesforce), support tickets (Zendesk), live analytics dashboards.

- Collaborative Hubs: The latest project briefs from Notion, updated pages from Confluence, code documentation from GitHub.

- Structured Databases: Product inventories, customer records, ERP data.